This section reviews the literature that preceded the present study. Clinical data processing and modern medical diagnostics have benefited from the computational reliability of AI, which can detect patterns that human visual inspection misses.

Many systems used in medical research now incorporate machine learning approaches for data processing and discovery. A number of recent healthcare studies have applied these techniques, and several have used them to predict outcomes associated with stroke. The results of various researchers in this field are summarized below.

Minhaz et al.8 gathered stroke data from several hospitals in Bangladesh. The data were cleaned and prepared before the training phase, in which 10 different algorithms were used. The authors then combined all of the classifiers in a weighted voting classifier to improve performance. Each model was optimized, and the best model for classification was then selected. The study’s findings indicate that the weighted voting classifier can achieve an accuracy of up to 97%.
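As an illustration of the weighted voting idea described above, the following is a minimal sketch, not the cited authors' implementation. It assumes that each base classifier casts one vote for a class label and that the weights are validation accuracies; the function name `weighted_vote`, the weights, and the votes are hypothetical values chosen for the example.

```python
from collections import defaultdict


def weighted_vote(predictions, weights):
    """Return the label whose supporting classifiers carry the largest total weight.

    predictions: one predicted label per base classifier
    weights: one non-negative weight per base classifier
    """
    if len(predictions) != len(weights):
        raise ValueError("need exactly one weight per prediction")
    totals = defaultdict(float)
    for label, weight in zip(predictions, weights):
        totals[label] += weight
    return max(totals, key=totals.get)


# Hypothetical validation accuracies of three base classifiers, used as weights.
weights = [0.95, 0.45, 0.40]
# The strongest model votes "stroke" (1); the two weaker models vote "no stroke" (0).
votes = [1, 0, 0]

weighted_label = weighted_vote(votes, weights)          # 0.95 outweighs 0.45 + 0.40
majority_label = weighted_vote(votes, [1.0, 1.0, 1.0])  # plain majority vote
```

With equal weights the function reduces to a majority vote, so the two calls show how a single well-performing model can override two weaker ones once the votes are weighted.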

Yoon-A et al.9 demonstrated that a stroke can be recognized from electroencephalogram (EEG) biosignals recorded while a person is walking. According to the study’s authors, random forest can efficiently detect strokes from this biometric information. Priya et al.10 used machine learning methods and text-mining tools to predict strokes. They classified the data with 14 different techniques, including a complex tree, a simple tree, a medium tree, a linear SVM, a quadratic SVM, an ANN, and logistic regression. According to the experimental results, the ANN had the highest accuracy, at 95.3%.

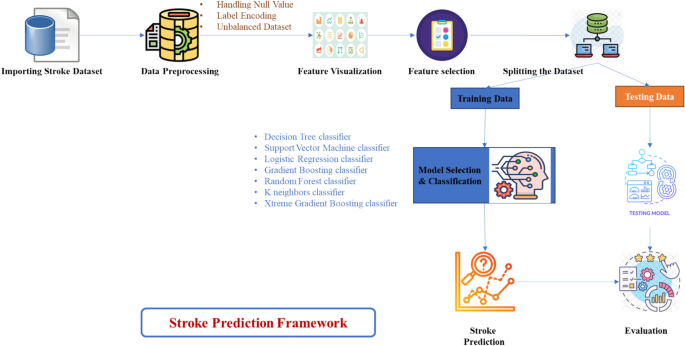

Sailasya et al.11 preprocessed Kaggle data, addressing problems such as missing values, label encoding, and class imbalance. The cleaned data were then analyzed using six distinct machine learning methods, among which Naive Bayes achieved the highest accuracy (82%). The authors also created an HTML web page where users can enter their own information to obtain a stroke prediction. Hager et al.12 predicted strokes with four classification methods: logistic regression, support vector machine (SVM), random forest, and decision tree. The models were trained with hyperparameter tuning and cross-validation. In a comparison of the four models, random forest performed best, with an accuracy of 90%.

Wu et al.13 constructed a stroke prediction model using imbalanced data. To balance the data, which were obtained from the Chinese Longitudinal Healthy Longevity Study, the researchers used random undersampling (RUS), random oversampling (ROS), and the synthetic minority oversampling technique (SMOTE). They used regularized logistic regression (RLR), support vector machine (SVM), and random forest to predict strokes on both the balanced and imbalanced datasets, and compared the best results of each model across the datasets. On the imbalanced dataset, RLR and SVM had the highest accuracy (95%) but the lowest sensitivity (0.1).
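The gap between high accuracy and low sensitivity on imbalanced data, and the two simplest resampling remedies, can be sketched as follows. This is an illustrative example under stated assumptions, not the cited study's code: the data are a toy set of 95 negative and 5 positive labels, and the function names are hypothetical. SMOTE, which interpolates synthetic minority samples instead of duplicating existing ones, is not shown.

```python
import random


def accuracy_and_sensitivity(y_true, y_pred):
    """Accuracy, and sensitivity (recall on the positive class, label 1)."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    true_pos = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    positives = sum(t == 1 for t in y_true)
    return correct / len(y_true), true_pos / positives


def _split_by_class(labels):
    """Return (minority_indices, majority_indices) for binary labels 0/1."""
    pos = [i for i, y in enumerate(labels) if y == 1]
    neg = [i for i, y in enumerate(labels) if y == 0]
    return (pos, neg) if len(pos) <= len(neg) else (neg, pos)


def random_undersample(rows, labels, rng):
    """RUS: keep every minority row and an equally sized random majority subset."""
    minority, majority = _split_by_class(labels)
    keep = minority + rng.sample(majority, len(minority))
    return [rows[i] for i in keep], [labels[i] for i in keep]


def random_oversample(rows, labels, rng):
    """ROS: keep every row and duplicate random minority rows until balanced."""
    minority, majority = _split_by_class(labels)
    extra = rng.choices(minority, k=len(majority) - len(minority))
    keep = majority + minority + extra
    return [rows[i] for i in keep], [labels[i] for i in keep]


# Toy imbalanced data (an assumption for illustration): 5 strokes in 100 records.
rows = list(range(100))
labels = [1] * 5 + [0] * 95

# A model that always predicts "no stroke" looks accurate but finds no strokes.
acc, sens = accuracy_and_sensitivity(labels, [0] * 100)

rng = random.Random(0)  # fixed seed so the sketch is reproducible
rus_rows, rus_labels = random_undersample(rows, labels, rng)
ros_rows, ros_labels = random_oversample(rows, labels, rng)
```

In this toy setting the always-negative model reaches 95% accuracy with zero sensitivity, which is why sensitivity is reported alongside accuracy whenever the classes are imbalanced.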

Badriyah et al.14 gathered CT scan data from stroke patients in Surabaya, Indonesia. The images were preprocessed with methods such as cropping, grayscaling, data conversion, data augmentation, and scaling to improve image quality, and features were then extracted from the image data. The efficacy of eight machine learning algorithms was evaluated, including logistic regression, Naive Bayes, random forest, and decision tree. With an accuracy of 95.97%, random forest outperformed all other classifiers in this experiment. Jaehak et al.15 studied real-time biosignals combined with artificial intelligence for predicting stroke risk. Of the two algorithms used, Long Short-Term Memory (LSTM, deep learning) and random forest (machine learning), LSTM attained the highest accuracy. In another study using EEG data and additional deep learning models, Yoon-A et al.16 found that LSTM produced the best results, with the highest accuracy (94%).

Singh et al.17 developed a stroke prediction algorithm and compared it with a variety of other methods on the Cardiovascular Health Study (CHS) dataset. A decision tree was used for feature selection, PCA for dimensionality reduction, and a backpropagation neural network for the classification model. After comparing classification performance across several approaches and models, the authors reported 97.7% accuracy in predicting stroke. Liu et al.18 developed a hybrid machine learning method for cerebral stroke prognosis prediction from class-imbalanced data with limited physiological information. The procedure has two steps. First, missing data were filled in using random forest regression prior to classification. Second, stroke predictions were made on the imbalanced dataset using a DNN with automatic hyperparameter optimization (AutoHPO). The medical dataset contains 43,400 patient records, 783 of which are stroke cases. The false negative rate of this approach was 19.1%, about 51.5% lower than the average of more conventional techniques. A 33.1% false positive rate, a 71.6% accuracy, and a 66.4% sensitivity were also reported for the proposed technique.

Asadi et al.19 carried out a thorough review of patient files to determine whether endovascular therapy was beneficial for acute ischemic stroke. Conventional statistical analysis and ANN analysis were performed using SPSS, MATLAB, and RapidMiner. A supervised method based on support vector machine algorithms was developed for identifying good and bad predictors. The algorithms were trained, tested, and ranked using randomly divided data. Endovascular therapy was performed on 107 patients with acute anterior circulation ischemic stroke; 66 were male, and the average age was 65. The models took into account all available clinical, economic, and practical factors. In the resulting confusion matrix, the target and output classes of the neural network agreed in approximately 80% of cases, and the receiver operating characteristics were favorable. Optimization improved the performance of the support vector machine, which reached a root mean square error of 2.064. Heo et al.20 conducted a study of patients who had an acute ischemic stroke. An outcome was considered favorable if the modified Rankin Scale score after three months was 0, 1, or 2. Three prediction techniques were developed and compared: random forest, logistic regression, and DNN.

Letham et al.21 described Bayesian Rule Lists, a method that generates a posterior distribution over possible decision lists. The results showed that predictions made using Bayesian Rule Lists were generally accurate. In the context of recent advances in precision medicine, the approach could provide accurate and interpretable patient scoring systems as an alternative to the CHADS2 score, putting it on par with the most sophisticated machine learning prediction algorithms. Emon et al.8 employed a number of machine learning techniques to classify stroke patients, including stochastic gradient descent, logistic regression, KNN, decision trees, XGBoost, gradient boosting, and AdaBoost. The weighted voting classifier in that study had an accuracy of 97%. Amini et al.22 conducted a study to predict strokes. They identified 50 risk factors for stroke, including alcohol use, smoking, hyperlipidemia, and diabetes, among 807 healthy and ill individuals. They employed the k-nearest neighbor algorithm and a C4.5 decision tree, which reached accuracies of 94% and 95%, respectively.

Sirsat et al.23 reviewed the use of machine learning in relation to brain stroke. The authors examined the existing literature to identify trends, methodologies, and key findings in the use of machine learning for stroke prediction, detection, and diagnosis, and discussed the advantages and disadvantages of the various methods. The survey gives researchers and practitioners a consolidated overview of advances, challenges, and potential areas for future work.

Cheng et al.24 estimated the prognosis of ischemic stroke. Two ANN models were used in the study; data sets from 82 ischemic stroke patients were analyzed, and accuracies of 95% and 79% were obtained, respectively. Cheon et al.25 examined whether mortality in stroke patients could be predicted. Their study was based on 15,099 participants, and they used deep neural network methods.

The authors used a PCA model to extract information from stroke medical records and predict stroke incidence, obtaining an area under the curve of 83%. The study by Sung et al.26 led to the development of a stroke severity index based on information gathered on 3577 patients with acute ischemic stroke. In addition to linear regression, they used a number of data mining techniques to build their predictive models. Based on 95% confidence intervals, their models were more accurate than the k-nearest neighbor algorithm. Chin et al.27 examined the effectiveness of an automated technique for early ischemic stroke detection, using convolutional neural networks (CNNs) to automate the detection. The researchers gathered 256 images to build and test the CNN model and expanded this image set during preprocessing. Their CNN approach had an accuracy of 90%.

Monteiro et al.28 used machine learning to predict functional outcomes after an ischemic stroke. Their models predicted patient outcome three months after admission, and the AUC value in their study was higher than 90%.

Kansadub et al.29 aimed to determine the risk of stroke by developing a prediction model based on demographic data. The authors surveyed existing studies and methodologies related to stroke risk prediction in order to identify gaps, challenges, and opportunities in existing approaches, which provided a foundation for their own research. To analyze the data and predict strokes, they employed Naive Bayes, decision trees, and neural networks, and they evaluated the models using AUC and accuracy. Their results indicated that Naive Bayes was the most accurate algorithm.

Adam et al.30 studied the classification of ischemic stroke. To categorize ischemic strokes, the researchers used decision trees and the k-nearest neighbor method. According to the study, decision trees are more effective at classifying strokes.

Jafar et al.31 identified the 10 most important features by combining LASSO feature selection with HyperOpt optimization of a gradient boosting (GB) model, which improved the model’s performance and reduced overfitting. As a result, the model was easier to interpret, and heart disease risk assessment and early detection were improved. The work presented HypGB, an automated system for predicting heart disease. The system outperformed existing machine learning models, achieving 97.32% accuracy on the Cleveland heart disease dataset and 97.72% accuracy on the Kaggle heart failure dataset.

Mariano et al.32 introduced an efficient and rapid method for generating extensive datasets tailored for machine learning applications in the classification of brain strokes using microwave imaging equipment. Their suggested method relies on the linearization of the scattering operator and the distorted Born approximation, which speeds up the process of creating large training datasets that are necessary for machine learning algorithms. This approach aims to minimize the effort required in creating the dataset. Each classifier within the system possesses the capability to determine the occurrence of a stroke, classify its type (ischemic or hemorrhagic), and pinpoint its precise location in the brain. The effectiveness of the trained procedures was assessed using data sets that were produced by full-wave simulations of the entire system.

In a recent study by Srinivas et al.33, the proposed soft voting classifier uses three base classifiers: random forest, extremely randomized trees, and histogram-based gradient boosting. Histogram-based gradient boosting (HBGB) is a variant of the gradient boosting algorithm that bins continuous feature values into histograms, so that candidate split points can be evaluated more quickly than with the conventional exact search. According to the authors, it works especially well on datasets that have a large number of features. With the soft voting classifier, the overall F1 score is 97% and the accuracy achieved by the model is 96.88%.
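Soft voting, as used in the study above, averages the class probabilities produced by the base classifiers instead of counting their hard votes. The following is a minimal sketch of that rule only; the probability values are hypothetical stand-ins for the outputs of the three base models and are not taken from the cited work.

```python
def soft_vote(probabilities):
    """Average per-class probabilities across classifiers and return (label, averages)."""
    n_models = len(probabilities)
    n_classes = len(probabilities[0])
    averaged = [
        sum(model[c] for model in probabilities) / n_models
        for c in range(n_classes)
    ]
    best = max(range(n_classes), key=averaged.__getitem__)
    return best, averaged


def hard_vote(probabilities):
    """Majority vote over each classifier's most probable class, for comparison."""
    votes = [max(range(len(p)), key=p.__getitem__) for p in probabilities]
    return max(set(votes), key=votes.count)


# Hypothetical [P(no stroke), P(stroke)] outputs for one patient.
model_probs = [
    [0.55, 0.45],  # stand-in for random forest
    [0.60, 0.40],  # stand-in for extremely randomized trees
    [0.10, 0.90],  # stand-in for histogram-based gradient boosting
]

soft_label, mean_probs = soft_vote(model_probs)
hard_label = hard_vote(model_probs)
```

Here two models lean weakly towards "no stroke" while the third is confident of "stroke", so the averaged probabilities favour class 1 even though a hard majority vote would return class 0. In scikit-learn, the same rule is available through `VotingClassifier` with `voting="soft"`.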

Maheswara Rao et al.34 propose ML-IHDAS, which uses selected features to build classification models with support vector machine (SVM), random forest (RF), gradient boosted decision trees (XGBoost), and logistic regression (LR). According to the experimental study, XGBoost achieved high accuracy with a minimal set of features. For every deployed model, features selected with MRMR led to better training results than those selected with Kruskal, Chi2, or ANOVA, and MRMR also improved the effectiveness of the XGBoost approach.
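The idea behind MRMR (minimum redundancy, maximum relevance) feature selection can be sketched as a greedy loop that rewards correlation with the target and penalizes correlation with features already chosen. The sketch below is an assumption-laden illustration, not the cited implementation: it substitutes absolute Pearson correlation for the mutual information used in the standard formulation, and the feature names and values are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient; returns 0.0 for a constant input."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def mrmr_select(features, target, k):
    """Greedily pick k features maximizing relevance minus mean redundancy.

    features: dict mapping feature name -> list of values
    Relevance and redundancy both use |Pearson r| (a stand-in for mutual information).
    """
    relevance = {name: abs(pearson(col, target)) for name, col in features.items()}
    selected, remaining = [], list(features)

    def score(name):
        if not selected:
            return relevance[name]
        redundancy = sum(
            abs(pearson(features[name], features[s])) for s in selected
        ) / len(selected)
        return relevance[name] - redundancy

    while remaining and len(selected) < k:
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


# Hypothetical toy data: "age_group" nearly duplicates "age".
target = [0, 0, 0, 1, 1, 1]
features = {
    "age": [1, 2, 3, 4, 5, 6],
    "age_group": [1, 2, 4, 3, 5, 6],
    "hypertension": [1, 0, 0, 0, 1, 1],
}

chosen = mrmr_select(features, target, k=2)
top_by_relevance = sorted(
    features, key=lambda n: abs(pearson(features[n], target)), reverse=True
)[:2]
```

Ranking by relevance alone would keep both age-like features, whereas the redundancy penalty makes the greedy loop prefer the less correlated `hypertension` column as its second pick.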

A comparison of all the related work is provided in Table 1 above. In the vast majority of these studies, an accuracy of 90% was considered favorable. The distinctive aspect of our research is the use of several well-known machine learning techniques to achieve our desired results. Random forest (RF), decision tree (DT), logistic regression (LR), and voting classifier (VC) were the most effective methods, with F1-scores of 94%, 87%, 96%, and 91%, respectively. The models used in this study achieved a significantly higher accuracy than earlier studies, which demonstrates their reliability. They proved reliable in several model comparisons, and the results of the analysis may be used to build the proposed scheme.

It is clear from this section that a variety of machine learning techniques, text-mining tools, and biometric signals have been used to achieve high accuracy. However, the outcomes of these methods and approaches are inconsistent, which makes them difficult to interpret. For this reason, a simple machine learning method is employed here, which is capable of producing a high accuracy rate in comparison with the earlier work. To further improve the accuracy of our prediction model, we also used feature selection algorithms to extract the most essential features that contribute to the model’s accuracy.