Graph Neural Networks (GNNs) are machine learning models designed to process and analyze graph-structured data. They have proven effective in a variety of applications, including recommendation systems, question answering, and chemical modeling. A typical GNN task is transductive node classification: given a graph in which only some nodes are labeled, the goal is to predict the labels of the remaining nodes from the graph structure and the known labels. This task arises in areas such as social network analysis, e-commerce, and document classification.

Graph Convolutional Networks (GCNs) and Graph Attention Networks (GATs) are two GNN architectures that perform well on transductive node classification. However, the high computational cost of GNNs is a major obstacle to their adoption, especially on large-scale graphs such as social networks and the World Wide Web, which can contain billions of nodes.

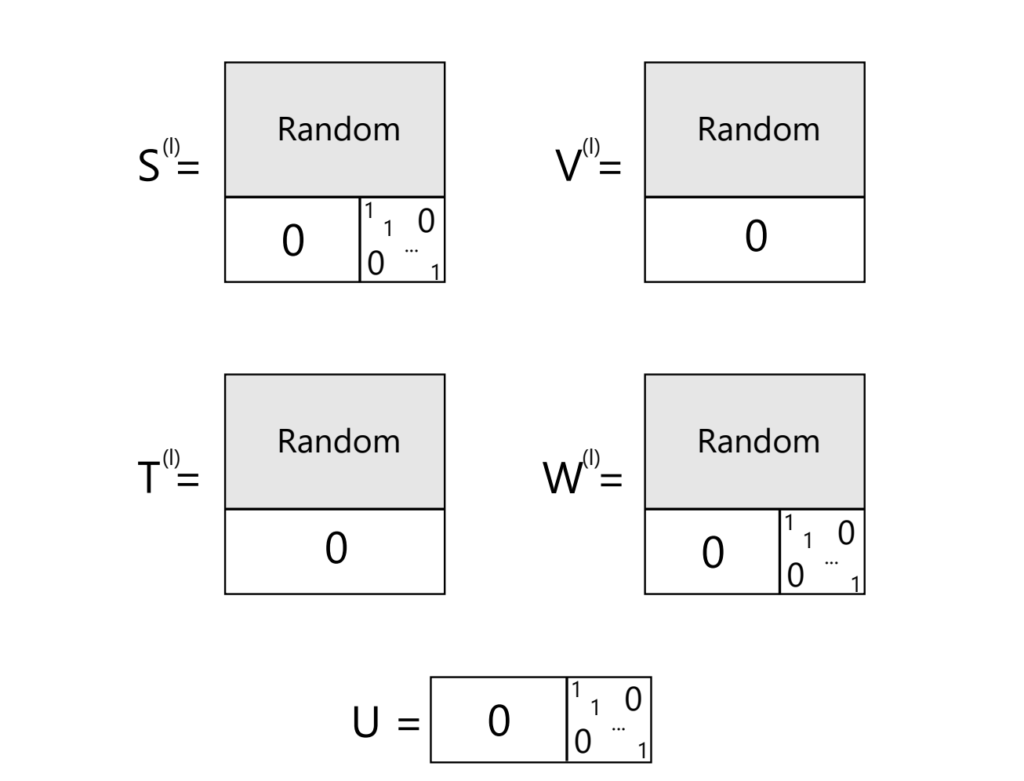

To overcome this, researchers have developed techniques to speed up GNN computation, but these approaches still have limitations, such as requiring many training iterations and substantial computational resources. Training-free graph neural networks (TFGNNs) were proposed to address these problems. For transductive node classification, TFGNNs rely on “labels as features” (LaF), which feeds the known node labels into the model as input features. Because each node can then draw on the label information of its neighbors, LaF lets a GNN produce more informative node embeddings than it could from node attributes alone.
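
To make the LaF idea concrete, here is a minimal sketch, assuming the common construction in which each node's feature vector is extended with a one-hot encoding of its label, while nodes whose label is unknown receive an all-zero block. The function name `labels_as_features` and the toy data are hypothetical, chosen for illustration; this is not the paper's code.

```python
from typing import List, Optional


def labels_as_features(
    features: List[List[float]],
    labels: List[Optional[int]],
    num_classes: int,
) -> List[List[float]]:
    """Append a one-hot label block to each node's features (hypothetical helper).

    Nodes with an unknown label (None), i.e. the nodes to be classified,
    get an all-zero label block, so no label information is invented for them.
    """
    augmented = []
    for x, y in zip(features, labels):
        one_hot = [0.0] * num_classes
        if y is not None:
            one_hot[y] = 1.0
        augmented.append(list(x) + one_hot)
    return augmented


# Toy graph data: 4 nodes, 2 input features each, 2 classes.
# Nodes 0 and 1 are labeled; nodes 2 and 3 are the ones to predict.
X = [[0.5, 1.0], [0.2, 0.1], [0.9, 0.3], [0.4, 0.4]]
y = [0, 1, None, None]
print(labels_as_features(X, y, num_classes=2))
# [[0.5, 1.0, 1.0, 0.0], [0.2, 0.1, 0.0, 1.0], [0.9, 0.3, 0.0, 0.0], [0.4, 0.4, 0.0, 0.0]]
```

If a LaF model is trained, the labels of the nodes used to compute the loss are usually hidden from the input so the model cannot simply copy its target; that detail is left out of this sketch.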

With TFGNNs, a model can perform well without a conventional training step. Whereas traditional GNNs usually need extensive training to reach their best performance, TFGNNs can make useful predictions immediately after initialization and can optionally be trained further when needed.
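
One way to see how a model can be useful straight after initialization is the sketch below. It assumes that the untrained network simply averages the LaF label channels over each node and its neighbors for a few layers, keeping known labels fixed, which amounts to classic label propagation. The function `training_free_predict` and its parameters are hypothetical, and this simplification is not the paper's exact TFGNN architecture or initialization.

```python
from typing import Dict, List, Optional


def training_free_predict(
    adjacency: Dict[int, List[int]],
    labels: List[Optional[int]],
    num_classes: int,
    num_layers: int = 3,
) -> List[int]:
    """Predict node classes by propagating LaF label channels (hypothetical sketch)."""
    n = len(labels)
    # Start from the LaF label block: one-hot for labeled nodes, zeros otherwise.
    h = [[0.0] * num_classes for _ in range(n)]
    for v, y in enumerate(labels):
        if y is not None:
            h[v][y] = 1.0

    for _ in range(num_layers):
        new_h = []
        for v in range(n):
            if labels[v] is not None:
                # Assumption: known labels stay clamped, as in label propagation.
                row = [0.0] * num_classes
                row[labels[v]] = 1.0
            else:
                # Fixed, untrained aggregation: mean over the node and its neighbors.
                group = [v] + adjacency.get(v, [])
                row = [sum(h[u][c] for u in group) / len(group) for c in range(num_classes)]
            new_h.append(row)
        h = new_h

    # Predict the class with the largest propagated score (ties go to the lowest index).
    return [max(range(num_classes), key=lambda c: scores[c]) for scores in h]


# Path graph 0 - 2 - 3 - 1; node 0 has class 0, node 1 has class 1.
adjacency = {0: [2], 1: [3], 2: [0, 3], 3: [2, 1]}
labels = [0, 1, None, None]
print(training_free_predict(adjacency, labels, num_classes=2))  # [0, 1, 0, 1]
```

On this path graph, each unlabeled node ends up with the label of the closer labeled node. In a trainable model, such fixed averaging weights would only be the starting point, and optional training would adjust them.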

Experimental results support the effectiveness of TFGNNs. In the training-free setting, TFGNNs consistently outperform existing GNNs, which need extensive training to reach comparable accuracy. When optional training is applied, TFGNNs converge significantly faster than traditional models, requiring far fewer iterations to reach their best performance. This efficiency and flexibility make TFGNNs attractive for a range of graph-based applications, especially where rapid deployment or limited computational resources are critical.

The team summarises their main contributions as follows:

- The study examines “labels as features” (LaF), a technique for transductive learning that offers substantial advantages but has not been thoroughly studied.

- It formally shows that LaF increases the expressive power of GNNs, enhancing their ability to represent complex relationships in graph data.

- It introduces training-free graph neural networks (TFGNNs), which perform well without extensive training.

- Experiments demonstrate the efficiency of TFGNNs and confirm that they outperform existing GNN models in the training-free setting.
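
The expressive-power claim can be illustrated, though not proved, with a tiny example. In the graph below, all nodes share the same feature and swapping nodes 0↔1 and 2↔3 is a symmetry of the unlabeled graph, so any message-passing GNN must give nodes 2 and 3 identical embeddings. Adding the labels of nodes 0 and 1 as features breaks that symmetry. The sketch assumes one round of mean aggregation with self-loops; `mean_aggregate` is a hypothetical helper, not code from the study.

```python
from typing import Dict, List


def mean_aggregate(adjacency: Dict[int, List[int]], h: List[List[float]]) -> List[List[float]]:
    """One message-passing step: average each node's vector with its neighbors' vectors."""
    out = []
    for v in range(len(h)):
        group = [v] + adjacency.get(v, [])
        out.append([sum(h[u][d] for u in group) / len(group) for d in range(len(h[v]))])
    return out


# Two disjoint edges: 2-0 and 3-1. Every node has the same input feature.
adjacency = {0: [2], 1: [3], 2: [0], 3: [1]}
features = [[1.0], [1.0], [1.0], [1.0]]
# Node 0 has class 0, node 1 has class 1; nodes 2 and 3 are unlabeled (zero block).
label_one_hot = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0], [0.0, 0.0]]

plain = mean_aggregate(adjacency, features)
laf = mean_aggregate(adjacency, [x + y for x, y in zip(features, label_one_hot)])

print(plain[2] == plain[3])  # True: without labels, nodes 2 and 3 look identical
print(laf[2], laf[3])  # [1.0, 0.5, 0.0] [1.0, 0.0, 0.5]
```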

Tanya Malhotra is a final-year undergraduate student at the University of Petroleum and Energy Studies, Dehradun, pursuing a BTech in Computer Science Engineering with a specialisation in Artificial Intelligence and Machine Learning.

She is an avid Data Science fan and has strong analytical and critical thinking skills with a keen interest in learning new skills, group leadership and managing organizational work.

🐝 Join the fastest growing AI research newsletter, read by researchers from Google + NVIDIA + Meta + Stanford + MIT + Microsoft & more…