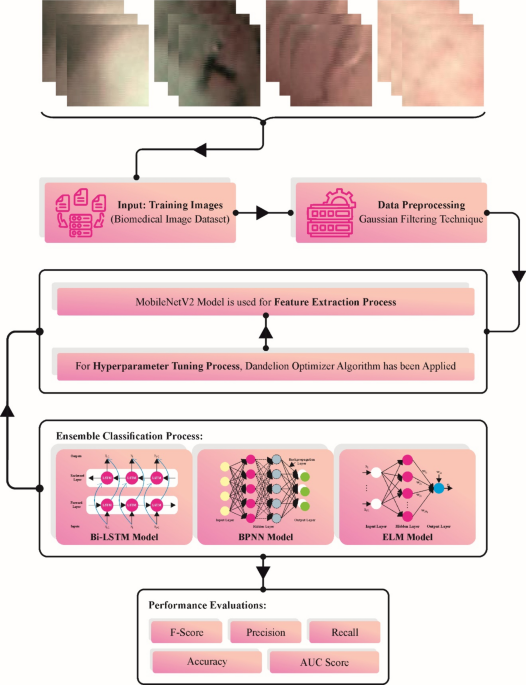

This section introduces the automated LCD-DOAEL method for biomedical throat region images, which analyses these images for the presence of laryngeal cancer. The LCD-DOAEL technique comprises four sub-processes: Gaussian filtering (GF)-based preprocessing, a MobileNetv2-based feature extractor, hyperparameter tuning with the dandelion optimization algorithm (DOA), and an ensemble learning process. Figure 1 depicts the entire procedure of the LCD-DOAEL technique.

Overall process of LCD-DOAEL technique.

Image preprocessing

First, the LCD-DOAEL technique applies the GF approach to remove noise from the biomedical images. GF is a popular image-processing algorithm for blurring or smoothing an image25. The underlying idea is to convolve the image with a Gaussian kernel, a 2D distribution characterized by a bell-shaped curve. The convolution slides the Gaussian kernel over every image pixel, and at each position the neighbouring pixels contribute to a weighted average whose weights follow the Gaussian distribution. GF is highly effective at reducing high-frequency noise while retaining the overall image structure, although, because it smooths uniformly, it also softens sharp edges and fine details. The extent of blurring is controlled by the variance of the Gaussian kernel: a larger variance yields a broader, flatter bell curve and therefore stronger blurring.
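
The smoothing operation described above can be sketched in Python with NumPy as follows. This is a minimal illustration, not the authors' implementation: the kernel size (5), the standard deviation `sigma = 1.0`, the reflective border padding, and the function names `gaussian_kernel` and `gaussian_filter` are assumptions chosen for the example.

```python
import numpy as np

def gaussian_kernel(size: int, sigma: float) -> np.ndarray:
    """Return a normalized 2D Gaussian kernel of shape (size, size)."""
    if size % 2 == 0:
        raise ValueError("kernel size must be odd")
    ax = np.arange(size) - size // 2              # symmetric offsets, e.g. [-2..2]
    xx, yy = np.meshgrid(ax, ax)
    kernel = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return kernel / kernel.sum()                  # weights sum to 1

def gaussian_filter(image: np.ndarray, size: int = 5, sigma: float = 1.0) -> np.ndarray:
    """Smooth a 2D grayscale image by sliding a Gaussian kernel over every pixel.
    The kernel is symmetric, so correlation and convolution coincide."""
    kernel = gaussian_kernel(size, sigma)
    pad = size // 2
    padded = np.pad(image.astype(float), pad, mode="reflect")  # handle borders
    out = np.empty(image.shape, dtype=float)
    h, w = image.shape
    for r in range(h):
        for c in range(w):
            window = padded[r:r + size, c:c + size]
            out[r, c] = np.sum(window * kernel)   # Gaussian-weighted neighbour average
    return out

rng = np.random.default_rng(0)
noisy = 100.0 + rng.normal(0, 10, (32, 32))       # flat image plus Gaussian noise
smoothed = gaussian_filter(noisy, size=5, sigma=1.0)
print(noisy.std(), smoothed.std())                # the noise spread shrinks after filtering
```

Because the kernel weights sum to 1, a constant image passes through unchanged while pixel-to-pixel noise is averaged out; increasing `sigma` widens the kernel and strengthens the blur. In practice, a library routine such as `scipy.ndimage.gaussian_filter` would replace the explicit loops.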

MobileNetv2 feature extractor

The MobileNetv2 model is employed for feature extraction. Convolutional neural networks (CNNs) are the foundation of modern image classification. A CNN is a type of neural network (NN) in which at least one layer uses convolution instead of general matrix multiplication26. Unlike a fully connected NN, which treats every component of the source image as an input to every neuron, a convolution considers only neighbouring pixels, which considerably improves network efficiency. MobileNet follows a streamlined design that builds lightweight deep models from depthwise separable convolutional layers. The core of the MobileNet architecture is the depthwise separable convolution, which factorizes a standard convolutional layer into a depthwise convolution (DWC) and a 1×1 convolution called a pointwise convolution (PWC). The DWC applies a single filter to each input channel, and the PWC then combines the DWC outputs across channels using 1×1 convolutions. A standard convolution filters the input and combines it into a new set of outputs in a single step, whereas the depthwise separable convolution splits this into two layers, one for filtering and another for combining. This split considerably reduces the model size and computational cost. Figure 2 demonstrates the framework of MobileNetv2.
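
The factorization into DWC and PWC can be checked numerically with the NumPy sketch below. It assumes stride 1, "valid" padding, and no biases or batch normalization, and the function names are illustrative only. It verifies that a depthwise convolution followed by a pointwise convolution equals a standard convolution whose kernel is the product of the two factors, and it compares parameter counts.

```python
import numpy as np

def depthwise_conv(x, dw):
    """Depthwise convolution (valid padding, stride 1).
    x: (H, W, C) input; dw: (k, k, C), one k x k filter per input channel."""
    k = dw.shape[0]
    H, W, C = x.shape
    oh, ow = H - k + 1, W - k + 1
    out = np.zeros((oh, ow, C))
    for i in range(k):
        for j in range(k):
            # each channel is filtered only by its own kernel slice
            out += x[i:i + oh, j:j + ow, :] * dw[i, j, :]
    return out

def pointwise_conv(x, pw):
    """1x1 (pointwise) convolution that mixes channels. pw: (C_in, C_out)."""
    return x @ pw

def standard_conv(x, w):
    """Standard convolution (valid padding, stride 1). w: (k, k, C_in, C_out)."""
    k = w.shape[0]
    H, W, _ = x.shape
    oh, ow = H - k + 1, W - k + 1
    out = np.zeros((oh, ow, w.shape[3]))
    for i in range(k):
        for j in range(k):
            out += x[i:i + oh, j:j + ow, :] @ w[i, j]   # filter and combine in one step
    return out

def param_counts(k, c_in, c_out):
    """Weights of a standard conv vs. a depthwise separable conv (biases ignored)."""
    standard = k * k * c_in * c_out
    separable = k * k * c_in + c_in * c_out
    return standard, separable

print(param_counts(3, 32, 64))   # (18432, 2336): roughly 7.9x fewer weights
```

The separable version needs `k*k*C_in + C_in*C_out` weights instead of `k*k*C_in*C_out`, which is the source of the size and computation savings mentioned above.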

Architecture of MobileNetv2.

As the second version of MobileNet, MobileNetV2 is a highly efficient, lightweight DL method that meets the requirements of edge computing systems and mobile devices and performs well in resource-constrained environments. MobileNetV2 is designed to balance model size and computational efficiency. The model is built mainly from depthwise separable convolutions organized into "bottleneck" blocks, which substantially reduce computational cost and the number of parameters while retaining model capacity and accuracy. MobileNetV2 is also characterized by its "inverted residual" structure: each block expands a narrow input with a lightweight 1×1 convolution, filters it with a depthwise convolution, and projects it back to a narrow output through a linear bottleneck layer without a nonlinearity, with a shortcut connecting the narrow ends when the input and output shapes match. This combination of lightweight expansion and a linear bottleneck improves the adaptability and efficiency of the model. Furthermore, a "squeeze-and-excitation" (SE) module, a component introduced into the MobileNet family with MobileNetV3 rather than part of the original MobileNetV2 design, is integrated to enhance the model's ability to capture crucial features by rescaling channel-wise feature responses. The proposed model concludes with an FC layer whose output size equals the number of classes, followed by a 'LogSoftmax' activation for class prediction. Figure 2 gives a visual representation of this structure. The MobileNetV2 architecture can accommodate various constraints and applications, which makes it an essential component in DL and computer vision (CV).
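
A stride-1 inverted residual block and the final LogSoftmax can be sketched in NumPy as below. This is a simplified illustration under stated assumptions: batch normalization and the SE module are omitted, the expansion factor (6 in the example shapes) and the function names are illustrative choices, and the 1×1 convolutions are written as matrix products over the channel axis.

```python
import numpy as np

def relu6(x):
    """ReLU6 activation used inside MobileNetV2 blocks: clip to [0, 6]."""
    return np.clip(x, 0.0, 6.0)

def depthwise3x3_same(x, dw):
    """3x3 depthwise convolution, stride 1, zero 'same' padding.
    x: (H, W, C); dw: (3, 3, C), one 3x3 filter per channel."""
    H, W, _ = x.shape
    xp = np.pad(x, ((1, 1), (1, 1), (0, 0)))   # zero-pad the spatial dims only
    out = np.zeros(x.shape)
    for i in range(3):
        for j in range(3):
            out += xp[i:i + H, j:j + W, :] * dw[i, j, :]
    return out

def inverted_residual(x, w_expand, dw, w_project):
    """Stride-1 inverted residual block with a linear bottleneck (no batch norm).
    x: (H, W, C); w_expand: (C, t*C); dw: (3, 3, t*C); w_project: (t*C, C)."""
    h = relu6(x @ w_expand)               # 1x1 expansion to a wide tensor
    h = relu6(depthwise3x3_same(h, dw))   # cheap per-channel spatial filtering
    h = h @ w_project                     # 1x1 linear projection: no activation
    return x + h                          # shortcut between the narrow ends

def log_softmax(z):
    """LogSoftmax over the last axis, as applied after the final FC layer."""
    z = z - z.max(axis=-1, keepdims=True)  # subtract max for numerical stability
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))
```

Keeping the projection linear avoids discarding information in the narrow bottleneck, while the wide intermediate tensor is processed only by the inexpensive depthwise filter.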

Hyperparameter tuning using DOA model

In this phase, the hyperparameters of MobileNetv2 are selected using the DOA. Metaheuristic techniques typically imitate behaviours observed in natural processes. Dandelion optimization (DO) is a bio-inspired method that uses swarm intelligence (SI) to solve continuous optimization problems27. DO was inspired by the wind-borne flight of dandelion seeds, which move through three stages: ascending, descending, and settling (landing) at a random position. The DO method models these three stages mathematically and searches for optimal solutions by imitating these behaviours. The mathematical steps of the DO technique are detailed below.

1. Initial population: The population is initialized randomly:

$$Population=\left[\begin{array}{lll}{D}_{1}^{1}& \dots & {D}_{1}^{Dim}\\ \vdots & \ddots & \vdots \\ {D}_{pop}^{1}& \dots & {D}_{pop}^{Dim}\end{array}\right]$$

(1)

Here, \(pop\) is the population size and \(Dim\) is the number of decision variables. Every candidate solution is generated randomly between the lower bound (\({L}_{B}\)) and upper bound (\({U}_{B}\)) of the given problem, and \(rand\) denotes a random number drawn uniformly from \([0,1]\). The \({i}^{th}\) individual \({D}_{i}\) is given by:

$${D}_{i}=rand\times \left({U}_{B}-{L}_{B}\right)+{L}_{B}$$

(2)

2. Fitness evaluation: The fitness value of every individual is computed, and the individual with the best fitness value is taken as the initial best (elite) candidate solution:

$${D}_{elite}=D\left(find\left({f}_{best}=f\left({D}_{i}\right)\right)\right)$$

(3)

3. Ascension stage: The individuals' positions are updated as the seeds rise, and the new positions are evaluated with the fitness function (FF). Under the influence of factors such as air humidity and wind speed, dandelion seeds rise to different heights. Two weather conditions are therefore distinguished.

Case 1: On a clear day, wind speed is assumed to follow a lognormal distribution, \(\text{ln }Y\sim N\left(\mu , {\sigma }^{2}\right)\). The new location of the seeds is computed by Eq. (4).

$${D}_{(t+1)}={D}_{t}+\delta \times {v}_{x}\times {v}_{y}\times \text{ ln }Y\times \left({D}_{s}-{D}_{t}\right)$$

(4)

Here, \({D}_{t}\) denotes the location of a dandelion seed at the \({t}^{th}\) iteration, \({D}_{s}\) is a randomly selected location within the search range at the \({t}^{th}\) iteration, and \({v}_{x}\) and \({v}_{y}\) are the lift component coefficients of the dandelion caused by the separated eddy action. \(\delta\) is an adaptive coefficient in \([0,1]\) that decreases nonlinearly and approaches zero.

The lognormal distribution assumed in Eq. (4) uses \(\mu =0\) and \({\sigma }^{2}=1\), and is given by Eq. (5):

$$\text{ln }Y=\left\{\begin{array}{ll}\frac{1}{y\sqrt{2\pi }}\text{exp}\left[-\frac{1}{2{\sigma }^{2}}{\left(\text{ln }y\right)}^{2}\right], & y>0\\ 0, & y\le 0\end{array}\right.$$

(5)

In each iteration, the adaptive coefficient \(\delta\) of Eq. (4) controls the search step length over the total iteration count \(T\). It is computed from a random number \(rand\) drawn uniformly from \([0,1]\):

$$\delta =rand\times \left(\frac{1}{{T}^{2}}{t}^{2}-\frac{2}{T}t+1\right)$$

(6)

Case 2: On a rainy day, air resistance, humidity, and other factors prevent the dandelion seeds from rising with the wind. The seeds therefore remain in their local neighbourhood, and their movement is described by Eq. (7):

$${D}_{(t+1)}={D}_{t}\times \left( 1-rand \times p\right)$$

(7)

Here, \(p\) is a parameter that regulates the local search domain of a dandelion. It is computed by Eq. (8) and updated at every iteration according to the current and maximum iteration numbers.

$$p=\left(\frac{{t}^{2}-2t+1}{{T}^{2}-2T+1}+1\right)$$

(8)

Here, \(T\) denotes the maximum number of iterations, and \(t\) is the current iteration number.

4. Descent stage: After reaching the height computed in the ascension stage, the seeds descend, and their locations are updated as follows:

$${D}_{t+1}={D}_{t}-\alpha \times {\beta }_{t}\times \left({D}_{mean\_t}-\alpha \times {\beta }_{t}\times {D}_{t}\right)$$

(9)

Here, \({D}_{mean\_t}\) is the mean position of the population at the \({t}^{th}\) iteration, \(\alpha\) is the adaptive step-length coefficient computed as in Eq. (6), and \({\beta }_{t}\) denotes Brownian motion, a random number drawn from the standard normal distribution.

5. Landing stage: The seeds land at random positions determined by the wind and weather. Using the information gathered as the population evolves, the seeds approach the global best solution according to Eq. (10):

$${D}_{t+1}={D}_{elite}+levy\left(\lambda \right)\times \alpha \times \left({D}_{elite}-{D}_{t}\times \sigma \right)$$

(10)

Here, \({D}_{elite}\) is the best position of the dandelion seeds at the \({t}^{th}\) iteration, and \(levy\left(\lambda \right)\) denotes the Lévy flight function, computed by Eq. (11):

$$levy\left(\lambda \right)=s\times \frac{w\times \sigma }{{\left|t\right|}^{1/B}}$$

(11)

where \(B\) is a random number in \([0,2]\), \(s\) is a constant equal to 0.01, \(w\) and \(t\) are random numbers in \([0,1]\), and \(\sigma\) is computed as:

$$\sigma =\left(\frac{\Gamma \left(1+B\right)\times \sin\left(\frac{\pi B}{2}\right)}{\Gamma \left(\frac{1+B}{2}\right)\times B\times {2}^{\left(\frac{B+1}{2}\right)}}\right)$$

(12)

6. Repopulation: A new population is formed from the updated positions.

7. Stopping condition: Steps 2–6 are repeated until the stopping criterion is met.

8. Best value: The individual with the best fitness value is taken as the optimal solution.
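
The eight steps above can be put together in a compact NumPy sketch of a DO-style minimizer. It is an illustration under several assumptions that the section does not specify: the weather case is chosen with the test `randn < 1.5`; \(v_x = r\cos\theta\) and \(v_y = r\sin\theta\) with \(r = e^{-\theta}\) and \(\theta\) uniform in \([-\pi,\pi]\); \(\ln Y\) in Eq. (4) is the density of Eq. (5) evaluated at standard-normal draws; \(B\) is fixed at 1.5; the undefined coefficient on \(D_t\) in Eq. (10) is taken as \(2t/T\); positions are clipped to the bounds; and the elite is the best solution found so far. A sphere function stands in for the real objective.

```python
import math
import numpy as np

def levy(shape, rng, beta=1.5, s=0.01):
    """Levy-flight step per Eqs. (11)-(12); beta (B) fixed at 1.5 (assumption)."""
    sigma = (math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
             / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta + 1) / 2)))
    w = rng.random(shape)
    t = 1.0 - rng.random(shape)           # in (0, 1], avoids division by zero
    return s * w * sigma / np.abs(t) ** (1 / beta)

def ln_y(shape, rng):
    """Eq. (5) with mu = 0, sigma^2 = 1, evaluated at standard-normal draws (assumption)."""
    y = rng.standard_normal(shape)
    out = np.zeros(shape)
    pos = y > 0                           # density is zero for y <= 0
    out[pos] = np.exp(-0.5 * np.log(y[pos]) ** 2) / (y[pos] * np.sqrt(2 * np.pi))
    return out

def dandelion_optimizer(f, lb, ub, pop=20, iters=100, seed=0):
    """Minimize f over the box [lb, ub]; returns (best position, best fitness)."""
    if iters < 2:
        raise ValueError("iters must be >= 2 (Eq. (8) divides by (T - 1)^2)")
    rng = np.random.default_rng(seed)
    lb, ub = np.asarray(lb, float), np.asarray(ub, float)
    dim, T = lb.size, iters
    D = rng.random((pop, dim)) * (ub - lb) + lb                   # Eqs. (1)-(2)
    fit = np.array([f(d) for d in D])
    elite, elite_fit = D[fit.argmin()].copy(), fit.min()          # Eq. (3)
    for t in range(1, T + 1):
        alpha = rng.random() * ((t / T) ** 2 - 2 * t / T + 1)     # Eq. (6)
        # Ascension stage; the weather-case threshold is an assumption.
        if rng.standard_normal() < 1.5:                            # Case 1: clear day
            theta = rng.uniform(-np.pi, np.pi)
            vx, vy = np.exp(-theta) * np.cos(theta), np.exp(-theta) * np.sin(theta)
            Ds = rng.random((pop, dim)) * (ub - lb) + lb
            D = D + alpha * vx * vy * ln_y((pop, dim), rng) * (Ds - D)    # Eq. (4)
        else:                                                      # Case 2: rainy day
            p = (t**2 - 2 * t + 1) / (T**2 - 2 * T + 1) + 1       # Eq. (8)
            D = D * (1 - rng.random((pop, dim)) * p)               # Eq. (7)
        # Descent stage, Eq. (9): beta_t drawn from the standard normal.
        beta_t = rng.standard_normal((pop, dim))
        D = D - alpha * beta_t * (D.mean(axis=0) - alpha * beta_t * D)
        # Landing stage, Eq. (10); coefficient on D_t assumed to be 2t/T.
        D = elite + levy((pop, dim), rng) * alpha * (elite - D * (2 * t / T))
        D = np.clip(D, lb, ub)                                     # keep within bounds
        fit = np.array([f(d) for d in D])                          # repopulation + fitness
        if fit.min() < elite_fit:                                  # keep best-so-far elite
            elite, elite_fit = D[fit.argmin()].copy(), fit.min()
    return elite, float(elite_fit)

def sphere(x):
    return float(np.sum(x ** 2))

best, best_fit = dandelion_optimizer(sphere, [-5, -5, -5], [5, 5, 5], pop=20, iters=50, seed=1)
```

In LCD-DOAEL, `f` would instead decode a candidate vector into MobileNetv2 hyperparameters, train the model, and return the validation error rate of Eq. (13).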

The DOA uses a fitness function (FF) designed to achieve better classification accuracy. The FF assigns a positive value to each candidate solution, with smaller values indicating better candidates. Here, the classifier error rate, which is to be minimized, is used as the FF:

$$\begin{aligned}fitness\left({x}_{i}\right)&=Classifier \; Error\;Rate\left({x}_{i}\right) \\ &=\frac{No. \; of \; misclassified \; samples}{Total \; No. \;of \;samples}\times 100 \end{aligned}$$

(13)
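
Eq. (13) is straightforward to express in code. The sketch below computes the error rate as a percentage and wraps it as a fitness callable of the kind a DO loop could minimize; `train_and_predict` is a hypothetical routine standing in for "train MobileNetv2 with the candidate hyperparameters and predict on validation data".

```python
import numpy as np

def classifier_error_rate(y_true, y_pred):
    """Eq. (13): percentage of misclassified samples (lower is better)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    if y_true.shape != y_pred.shape or y_true.size == 0:
        raise ValueError("labels must be non-empty and of equal shape")
    return float(np.mean(y_true != y_pred) * 100.0)

def make_fitness(train_and_predict, y_val):
    """Wrap a hypothetical train-and-predict routine as a DOA fitness function."""
    def fitness(candidate):
        return classifier_error_rate(y_val, train_and_predict(candidate))
    return fitness

print(classifier_error_rate([0, 1, 1, 0], [0, 1, 0, 0]))   # 25.0
```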

Ensemble learning

Finally, classification is performed by an ensemble of three classifiers: a backpropagation neural network (BPNN), a regularized extreme learning machine (RELM), and a bidirectional long short-term memory (BiLSTM) model.
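
This section does not state how the outputs of the three classifiers are combined. Purely as an illustrative assumption, the sketch below uses soft voting: each model's class-probability matrix is averaged, optionally with weights, and the class with the highest averaged probability is chosen. `soft_vote` is a hypothetical helper, not part of the described method.

```python
import numpy as np

def soft_vote(prob_list, weights=None):
    """Combine per-model class probabilities, each of shape (n_samples, n_classes),
    by weighted averaging; returns (predicted labels, averaged probabilities)."""
    probs = np.stack([np.asarray(p, float) for p in prob_list])   # (n_models, n, k)
    if weights is None:
        weights = np.ones(len(prob_list))
    weights = np.asarray(weights, float) / np.sum(weights)       # normalize weights
    avg = np.tensordot(weights, probs, axes=1)                   # (n, k)
    return avg.argmax(axis=1), avg

p_bpnn = [[0.9, 0.1], [0.4, 0.6]]
p_relm = [[0.6, 0.4], [0.3, 0.7]]
p_bilstm = [[0.2, 0.8], [0.7, 0.3]]
labels, avg = soft_vote([p_bpnn, p_relm, p_bilstm])
print(labels)   # [0 1]
```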

BPNN model

BPNN is a computational model that simulates the way biological neurons function28. It generally consists of input, hidden, and output layers, and its operation comprises forward propagation and backpropagation (BP). During forward propagation, each input feature is first fed into the neurons of the input layer; the signal is then passed to the neurons of the next layer through the weighted connection of Eq. (14), and finally reaches the neurons of the output layer.

$${y}_{j}=f\left({v}_{j}\right)=f\left({\sum }_{i=1}^{n}{w}_{ij}{x}_{i}+{b}_{j}\right)$$

(14)

This relation involves the weight \({w}_{ij}\) connecting the \({i}^{th}\) neuron of layer \(k\) to the \({j}^{th}\) neuron of layer \(k+1\) and the bias \({b}_{j}\) of that \({j}^{th}\) neuron; \({x}_{i}\) is the output of the \({i}^{th}\) neuron of layer \(k\), and \(n\) is the number of neurons in layer \(k\). The weighted sum at layer \(k+1\) is passed through the activation function \(f\), and the result is forwarded to layer \(k+2\). During BP, the predicted value \(\hat{y}\) obtained from this forward pass is compared with the observed value \(y\) to compute the loss function, from which the gradients of the loss with respect to the weights and biases are derived. Finally, the weights and biases are updated iteratively by gradient descent until the loss converges, yielding the trained weights and biases of every layer.
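
The forward and backward passes described above can be sketched for a one-hidden-layer network in NumPy. The sigmoid activation, the mean-squared-error loss, the hidden size, the learning rate, and the XOR-style toy data are assumptions made for this illustration; the gradient formulas follow from the chain rule applied to Eq. (14).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(X, params):
    """Forward propagation: Eq. (14), y = f(sum_i w_ij x_i + b_j), layer by layer."""
    W1, b1, W2, b2 = params
    h = sigmoid(X @ W1 + b1)       # hidden layer
    y_hat = sigmoid(h @ W2 + b2)   # output layer
    return h, y_hat

def loss(X, Y, params):
    """Mean-squared-error loss averaged over samples."""
    _, y_hat = forward(X, params)
    return 0.5 * np.mean(np.sum((y_hat - Y) ** 2, axis=1))

def gradients(X, Y, params):
    """Backpropagation of the loss to every weight matrix and bias vector."""
    W1, b1, W2, b2 = params
    h, y_hat = forward(X, params)
    n = X.shape[0]
    delta2 = (y_hat - Y) * y_hat * (1 - y_hat) / n   # error at output pre-activation
    delta1 = (delta2 @ W2.T) * h * (1 - h)           # error propagated to hidden layer
    return [X.T @ delta1, delta1.sum(axis=0), h.T @ delta2, delta2.sum(axis=0)]

def train(X, Y, hidden=8, lr=1.0, epochs=2000, seed=0):
    rng = np.random.default_rng(seed)
    params = [rng.normal(0, 0.5, (X.shape[1], hidden)), np.zeros(hidden),
              rng.normal(0, 0.5, (hidden, Y.shape[1])), np.zeros(Y.shape[1])]
    for _ in range(epochs):
        grads = gradients(X, Y, params)
        params = [p - lr * g for p, g in zip(params, grads)]   # gradient-descent step
    return params

X = np.array([[0., 0.], [0., 1.], [1., 0.], [1., 1.]])
Y = np.array([[0.], [1.], [1.], [0.]])
trained = train(X, Y)
```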

Regularized ELM model

ELM is a single-hidden-layer feedforward network (SLFN)29. Consider \(M\) training samples \(\left\{\left({x}_{j}, {t}_{j}\right),j=1, \cdots , M\right\}\), where \({x}_{j}={[{x}_{j1}, {x}_{j2}, \cdots , {x}_{jm}]}^{T}\) and \({t}_{j}={[{t}_{j1}, {t}_{j2}, \cdots , {t}_{jn}]}^{T}\) are the input and output vectors of the \({j}^{th}\) sample, respectively. Let \(g\left(w, b, x\right)\) denote the activation function and \(L\) the number of hidden-layer (HL) nodes. The ELM architecture then comprises \(m\) input neurons, \(L\) hidden neurons, and \(n\) output neurons, and its output for the \({j}^{th}\) sample is:

$${t}_{j}={\sum }_{i=1}^{L}{\beta }_{i}{g}_{i}\left({w}_{i}\cdot {x}_{j}+{b}_{i}\right),\quad j=1, \cdots , M$$

(15)

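In Eq. (15), \({\beta }_{i}={[{\beta }_{i1}, {\beta }_{i2}, \cdots , {\beta }_{in}]}^{T}\) is the weight vector connecting the \({i}^{th}\) hidden neuron to the output layer, \({w}_{i}={[{w}_{i1}, {w}_{i2}, \cdots , {w}_{im}]}^{T}\) is the weight vector connecting the input layer to the \({i}^{th}\) hidden neuron, and \({b}_{i}\) is the bias of the \({i}^{th}\) hidden node. The parameters \({w}_{i}\) and \({b}_{i}\) are generated at random, whereas \({\beta }_{i}\) is determined analytically. Stacking Eq. (15) over all \(M\) samples gives the compact form

$$H\beta =T$$

(16)

where \(\beta ={[{\beta }_{1}, \cdots , {\beta }_{L}]}^{T}\) is the \(L\times n\) output weight matrix, \(T={[{t}_{1}, \cdots , {t}_{M}]}^{T}\) is the \(M\times n\) target matrix, and \(H\) is the \(M\times L\) hidden-layer output matrix: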

$$H=\left(\begin{array}{cccc}g({w}_{1},{b}_{1},{x}_{1})& g({w}_{2},{b}_{2},{x}_{1})& \cdots & g({w}_{L},{b}_{L},{x}_{1})\\ g({w}_{1},{b}_{1},{x}_{2})& g({w}_{2},{b}_{2},{x}_{2})& \cdots & g({w}_{L},{b}_{L},{x}_{2})\\ \vdots & \vdots & \ddots & \vdots \\ g({w}_{1},{b}_{1},{x}_{M})& g({w}_{2},{b}_{2},{x}_{M})& \cdots & g({w}_{L},{b}_{L},{x}_{M})\end{array}\right)$$

(17)

Substituting Eq. (17) into Eq. (16) and solving in the least-squares sense (e.g., via singular value decomposition) gives the output weights:

$$\beta =({H}^{T}H{)}^{-1}{H}^{T}T$$

(18)

Adding a regularization term improves the stability of ELM and yields the regularized ELM (RELM):

$$\beta =({H}^{T}H+CI{)}^{-1}{H}^{T}T$$

(19)

In Eq. (19), \(I\) is the identity matrix and \(C\) is the regularization factor.
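
A minimal NumPy sketch of RELM training and prediction follows. The tanh activation, the Gaussian initialization of the random hidden weights, the hidden-layer size, and the toy regression target are assumptions for illustration. The output weights are obtained from Eq. (19) with `np.linalg.solve`, which is numerically preferable to forming the matrix inverse explicitly.

```python
import numpy as np

def relm_fit(X, T, n_hidden=50, C=1e-2, seed=0):
    """Regularized ELM: random, fixed hidden layer; output weights from Eq. (19)."""
    rng = np.random.default_rng(seed)
    W = rng.normal(size=(X.shape[1], n_hidden))   # input-to-hidden weights w_i (random)
    b = rng.normal(size=n_hidden)                 # hidden biases b_i (random)
    H = np.tanh(X @ W + b)                        # hidden-layer output matrix, Eq. (17)
    A = H.T @ H + C * np.eye(n_hidden)
    beta = np.linalg.solve(A, H.T @ T)            # beta = (H^T H + C I)^{-1} H^T T
    return W, b, beta

def relm_predict(X, model):
    """Network output H beta, i.e. Eq. (16) applied to new inputs."""
    W, b, beta = model
    return np.tanh(X @ W + b) @ beta

X = np.linspace(-1, 1, 200)[:, None]
T = np.sin(np.pi * X)
m_small = relm_fit(X, T, C=1e-3)   # weak regularization
m_large = relm_fit(X, T, C=10.0)   # strong regularization
```

Because both fits use the same seed, they share the same random hidden layer; a larger `C` shrinks the output weights at the cost of a slightly larger training error, which is the stability/fit trade-off the regularization term introduces.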

BiLSTM model

BiLSTM is valuable when each element of the input sequence should combine information from both past and future context30; in such cases, it produces better outputs than a unidirectional model. A linear DL model can be written as \(fd\left(si, vx\right)={\sum }_{bc=1}^{hd}s{i}_{bc}v{x}_{bc}\), where \(si\) is the input of length \(hd\), \(vx\) denotes the network weights, and \(fd(\cdot )\) is the network output. The BiLSTM model has two LSTM layers: one is trained on the input sequence in the forward direction, and the other is trained on the same sequence in reverse order (the backward direction). The input sequence contains the real and imaginary parts of the training data. LSTM units are used because they mitigate the vanishing- and exploding-gradient problems that standard RNNs suffer on long sequences.
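
The forward/backward arrangement, together with the LSTM gate equations (20)–(25) given next, can be sketched in NumPy as follows. The sigmoid gate activation, the small random initialization, and the helper names (`lstm_step`, `run_lstm`, `bilstm`) are assumptions for illustration; the backward outputs are re-reversed so that both directions are aligned per time step before concatenation.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(da, gd_prev, cd_prev, P):
    """One LSTM step following Eqs. (20)-(25); P holds R_*, V_*, ha_* per gate."""
    rv = sigmoid(P["R_rv"] @ da + P["V_rv"] @ gd_prev + P["ha_rv"])   # Eq. (20): forget gate
    vr = np.tanh(P["R_vr"] @ da + P["V_vr"] @ gd_prev + P["ha_vr"])   # Eq. (21): candidate
    ti = sigmoid(P["R_ti"] @ da + P["V_ti"] @ gd_prev + P["ha_ti"])   # Eq. (22): input gate
    ho = sigmoid(P["R_ho"] @ da + P["V_ho"] @ gd_prev + P["ha_ho"])   # Eq. (23): output gate
    cd = rv * cd_prev + ti * vr                                      # Eq. (24): long-term state
    gd = ho * np.tanh(cd)                                            # Eq. (25): output
    return gd, cd

def init_params(n_in, n_hid, rng):
    """Small random weights and zero biases for the four gates (assumption)."""
    P = {}
    for g in ("rv", "vr", "ti", "ho"):
        P["R_" + g] = rng.normal(0, 0.1, (n_hid, n_in))
        P["V_" + g] = rng.normal(0, 0.1, (n_hid, n_hid))
        P["ha_" + g] = np.zeros(n_hid)
    return P

def run_lstm(seq, P, n_hid):
    """Run one LSTM layer over seq (T, n_in) from a zero state; returns (T, n_hid)."""
    gd, cd = np.zeros(n_hid), np.zeros(n_hid)
    outs = []
    for da in seq:
        gd, cd = lstm_step(da, gd, cd, P)
        outs.append(gd)
    return np.array(outs)

def bilstm(seq, P_fwd, P_bwd, n_hid):
    """Forward LSTM on seq, backward LSTM on reversed seq, concatenated per step."""
    fwd = run_lstm(seq, P_fwd, n_hid)
    bwd = run_lstm(seq[::-1], P_bwd, n_hid)[::-1]   # re-align backward outputs in time
    return np.concatenate([fwd, bwd], axis=1)

rng = np.random.default_rng(0)
seq = rng.normal(size=(5, 3))
Pf, Pb = init_params(3, 4, rng), init_params(3, 4, rng)
out = bilstm(seq, Pf, Pb, 4)   # shape (5, 8): 4 forward + 4 backward features per step
```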

$$r{v}_{mn}=\phi \left({R}_{rv}d{a}_{mn}+{V}_{rv}g{d}_{mn-1}+h{a}_{rv}\right)$$

(20)

$$v{r}_{mn}=tanh\left({R}_{vr}d{a}_{mn}+{V}_{vr}g{d}_{mn-1}+h{a}_{vr}\right)$$

(21)

$$t{i}_{mn}=\nu \left({R}_{ti}d{a}_{mn}+{V}_{ti}g{d}_{mn-1}+h{a}_{ti}\right)$$

(22)

$$h{o}_{mn}=\nu \left({R}_{ho}d{a}_{mn}+{V}_{ho}g{d}_{mn-1}+h{a}_{ho}\right)$$

(23)

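Here, \({R}_{rv}, {R}_{vr}, {R}_{ti}\), and \({R}_{ho}\) are the weight matrices applied to the input \(d{a}_{mn}\); \({V}_{rv}, {V}_{vr}, {V}_{ti}\), and \({V}_{ho}\) are the weight matrices applied to the previous short-term state \(g{d}_{mn-1}\); and \(h{a}_{rv}, h{a}_{vr}, h{a}_{ti}\), and \(h{a}_{ho}\) are the biases. \(\phi\) and \(\nu\) denote the sigmoid activation of the gates. From Eqs. (24) and (25), \(r{v}_{mn}\) acts as the forget gate, \(t{i}_{mn}\) as the input gate, \(v{r}_{mn}\) as the candidate state, and \(h{o}_{mn}\) as the output gate. The long-term state \(c{d}_{mn}\) is updated as follows: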

$$c{d}_{mn}=r{v}_{mn}\otimes c{d}_{mn-1}+t{i}_{mn}\otimes v{r}_{mn}$$

(24)

Finally, the output \(y{f}_{mn}\) is given by:

$$y{f}_{mn}=g{d}_{mn}=h{o}_{mn}\otimes \tanh\left(c{d}_{mn}\right)$$

(25)

where \(c{d}_{mn-1}\) in Eq. (24) is the previous long-term state,