
Rust Burn is a new deep learning framework written entirely in the Rust programming language. The motivation for creating a new framework, rather than relying on existing ones such as PyTorch or TensorFlow, is to build a versatile, high-quality framework suited to a variety of users, including researchers, machine learning engineers, and low-level software engineers.

The key design principles behind Rust Burn are flexibility, performance, and ease of use.

Flexibility comes from the ability to quickly implement cutting-edge research ideas and run experiments.

Performance is achieved through optimizations such as leveraging hardware-specific features like Tensor Cores on Nvidia GPUs.

Ease of use means simplifying the workflow of training, deploying, and running models in production.

Major features:

- Flexible and dynamic computational graphs

- Thread-safe data structures

- Intuitive abstractions that simplify the development process

- Blazingly fast performance during training and inference

- Supports multiple backend implementations on both CPU and GPU

- Full support for logging, metrics and checkpoints during training

- Small but active developer community
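Supporting multiple backends means model code can be written once and then run on whichever CPU or GPU backend is selected. The sketch below illustrates that general idea in plain Rust, with no Burn dependency. The `SimpleBackend` trait, the two toy backends `VecBackend` and `BoxedBackend`, and the generic function `add_one` are all hypothetical names invented for illustration. They are not Burn's API, and Burn's real `Backend` trait is far richer.

```rust
// Hypothetical sketch of backend-generic code. None of these names come from Burn.

/// Hypothetical backend trait: each backend chooses its own storage and kernels.
trait SimpleBackend {
    /// Backend-specific storage for a flat tensor.
    type Storage;
    fn name() -> &'static str;
    fn from_vec(data: Vec<f32>) -> Self::Storage;
    fn add(a: &Self::Storage, b: &Self::Storage) -> Self::Storage;
    fn to_vec(s: &Self::Storage) -> Vec<f32>;
}

/// Toy backend that stores data in a Vec and adds element by element.
struct VecBackend;

impl SimpleBackend for VecBackend {
    type Storage = Vec<f32>;
    fn name() -> &'static str {
        "vec"
    }
    fn from_vec(data: Vec<f32>) -> Self::Storage {
        data
    }
    fn add(a: &Self::Storage, b: &Self::Storage) -> Self::Storage {
        a.iter().zip(b).map(|(x, y)| x + y).collect()
    }
    fn to_vec(s: &Self::Storage) -> Vec<f32> {
        s.clone()
    }
}

/// Second toy backend using a boxed slice, standing in for another device or layout.
struct BoxedBackend;

impl SimpleBackend for BoxedBackend {
    type Storage = Box<[f32]>;
    fn name() -> &'static str {
        "boxed"
    }
    fn from_vec(data: Vec<f32>) -> Self::Storage {
        data.into_boxed_slice()
    }
    fn add(a: &Self::Storage, b: &Self::Storage) -> Self::Storage {
        // Copy `a`, then add `b` in place.
        let mut out = a.clone();
        for (o, y) in out.iter_mut().zip(b.iter()) {
            *o += *y;
        }
        out
    }
    fn to_vec(s: &Self::Storage) -> Vec<f32> {
        s.to_vec()
    }
}

/// "Model" code written once and generic over the backend `B`.
fn add_one<B: SimpleBackend>(values: Vec<f32>) -> Vec<f32> {
    let n = values.len();
    let x = B::from_vec(values);
    let ones = B::from_vec(vec![1.0; n]);
    B::to_vec(&B::add(&x, &ones))
}

fn main() {
    let input = vec![2.0, 3.0, 4.0, 5.0];
    // The same generic function runs unchanged on both backends.
    println!("{}: {:?}", VecBackend::name(), add_one::<VecBackend>(input.clone()));
    println!("{}: {:?}", BoxedBackend::name(), add_one::<BoxedBackend>(input));
}
```

Switching backends only changes the type parameter, which is the same pattern used by the `B: Backend` parameters in Burn's own module definitions.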

Installing Rust

Burn is a powerful deep learning framework based on the Rust programming language. A basic understanding of Rust is required, but once you have it, you can take advantage of all the features Burn has to offer.

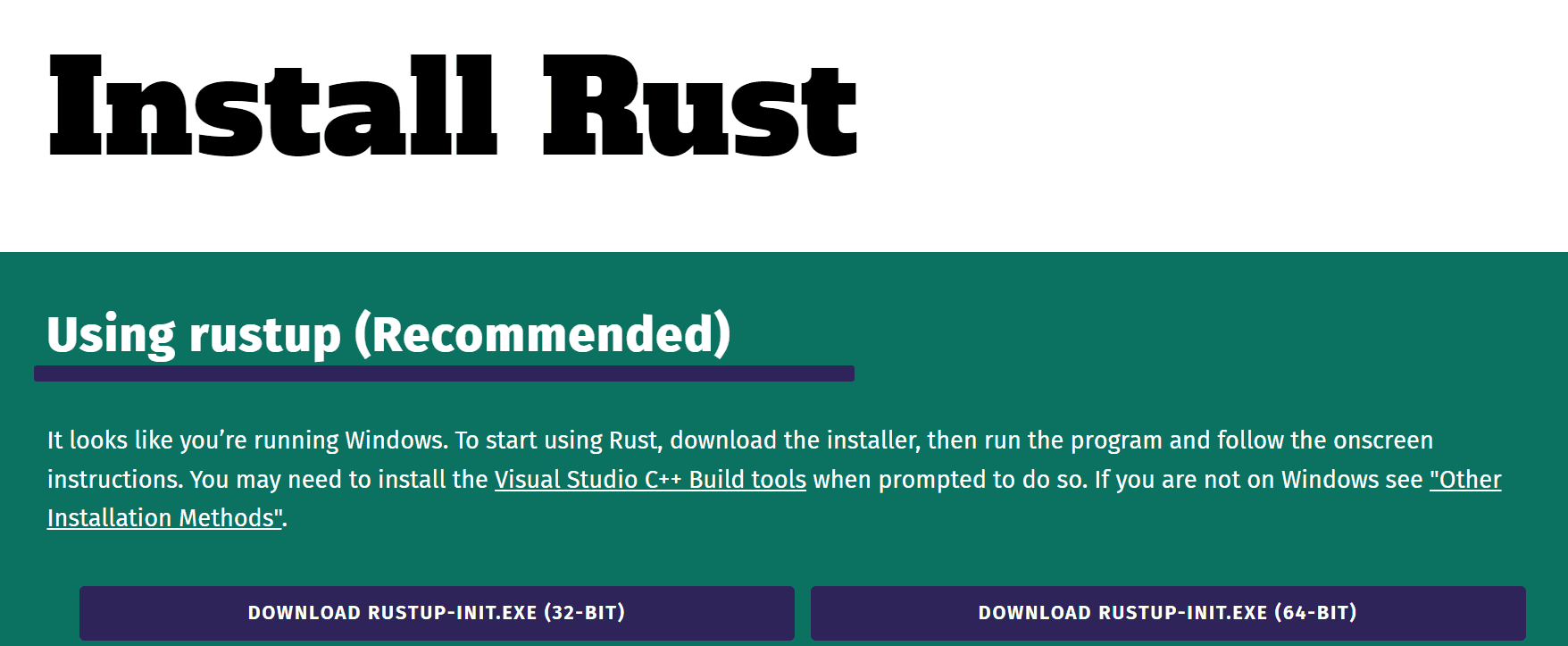

To install Rust, follow the official installation guide. You can also check out the GeeksforGeeks guide to installing Rust on Windows and Linux (with screenshots).

Image from Rust installation

Installing Burn

To use Rust Burn, you must first install Rust on your system. Once Rust is properly configured, you can create a new Rust application using cargo, Rust's package manager.

Run `cargo new <project-name>` in your current directory, replacing `<project-name>` with a name of your choice.

Change into the new directory with `cd <project-name>`.

Next, add Burn as a dependency, along with the WGPU backend feature to enable GPU operations:

`cargo add burn --features wgpu`

Finally, compile the project with `cargo build`, which downloads and builds Burn.

This installs the Burn framework along with the WGPU backend. WGPU allows Burn to perform low-level GPU operations.

Element-wise addition

To run the following code, open `src/main.rs` and replace its contents with:

```rust
use burn::tensor::Tensor;
use burn::backend::WgpuBackend;

// Type alias for the backend to use.
type Backend = WgpuBackend;

fn main() {
    // Creation of two tensors, the first with explicit values and the second one with ones, with the same shape as the first
    let tensor_1 = Tensor::<Backend, 2>::from_data([[2., 3.], [4., 5.]]);
    let tensor_2 = Tensor::<Backend, 2>::ones_like(&tensor_1);

    // Print the element-wise addition (done with the WGPU backend) of the two tensors.
    println!("{}", tensor_1 + tensor_2);
}
```

In the `main` function, we create two 2-dimensional tensors on the WGPU backend and add them element-wise.

To run the code, execute `cargo run` in the terminal.

You should now see the following output:

```
Tensor {
  data: [[3.0, 4.0], [5.0, 6.0]],
  shape: [2, 2],
  device: BestAvailable,
  backend: "wgpu",
  kind: "Float",
  dtype: "f32",
}
```

Note: The code above is an example from the "Burn Book: Getting Started" guide.
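To make the semantics of this example concrete, here is a dependency-free sketch of what it computes: `ones_like` produces a tensor of ones with the same shape as its argument, and `+` adds matching elements. The functions `ones_like` and `add` below are hypothetical stand-ins that operate on fixed-size Rust arrays rather than Burn tensors, so they show the math but not Burn's API or GPU execution.

```rust
// Plain-Rust sketch of the example's math; fixed-size arrays stand in for Burn tensors.

/// Hypothetical stand-in for `ones_like`: an array of ones with the same R x C shape.
fn ones_like<const R: usize, const C: usize>(_like: &[[f32; C]; R]) -> [[f32; C]; R] {
    [[1.0; C]; R]
}

/// Hypothetical stand-in for tensor `+`: adds elements at matching positions.
fn add<const R: usize, const C: usize>(a: &[[f32; C]; R], b: &[[f32; C]; R]) -> [[f32; C]; R] {
    let mut out = [[0.0; C]; R];
    for i in 0..R {
        for j in 0..C {
            out[i][j] = a[i][j] + b[i][j];
        }
    }
    out
}

fn main() {
    let tensor_1 = [[2.0_f32, 3.0], [4.0, 5.0]];
    let tensor_2 = ones_like(&tensor_1);
    // prints [[3.0, 4.0], [5.0, 6.0]]
    println!("{:?}", add(&tensor_1, &tensor_2));
}
```

The const generics require both operands to have the same shape at compile time. This is stricter than Burn, which tracks only the number of dimensions in the type and checks the actual shape at runtime.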

Position-wise feedforward module

Here's an example that shows how easy the framework is to use. The following snippet declares a position-wise feedforward module and its forward pass.

```rust
use burn::module::Module;
use burn::nn;
use burn::tensor::backend::Backend;
use burn::tensor::Tensor;

#[derive(Module, Debug)]
pub struct PositionWiseFeedForward<B: Backend> {
    linear_inner: nn::Linear<B>,
    linear_outer: nn::Linear<B>,
    dropout: nn::Dropout,
    gelu: nn::GELU,
}

impl<B: Backend> PositionWiseFeedForward<B> {
    // Forward pass: inner linear layer -> GELU -> dropout -> outer linear layer.
    pub fn forward<const D: usize>(&self, input: Tensor<B, D>) -> Tensor<B, D> {
        let x = self.linear_inner.forward(input);
        let x = self.gelu.forward(x);
        let x = self.dropout.forward(x);

        self.linear_outer.forward(x)
    }
}
```

The code above is from the Burn GitHub repository.
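This forward pass applies the same two linear layers to every position in a sequence independently, which is what "position-wise" means. The dependency-free sketch below illustrates that idea with plain vectors. `Linear`, `gelu`, and the weights are hypothetical and do not reflect Burn's implementation. The GELU uses the common tanh approximation, which may differ slightly from Burn's version, and dropout is omitted because it acts as the identity at inference time.

```rust
// Hypothetical plain-Rust sketch of a position-wise feedforward block (not Burn's code).

/// Dense layer y = W x + b, with `weight` stored as [out_features][in_features].
struct Linear {
    weight: Vec<Vec<f32>>,
    bias: Vec<f32>,
}

impl Linear {
    fn forward(&self, x: &[f32]) -> Vec<f32> {
        self.weight
            .iter()
            .zip(&self.bias)
            .map(|(row, b)| row.iter().zip(x).map(|(w, xi)| w * xi).sum::<f32>() + b)
            .collect()
    }
}

/// GELU via the tanh approximation.
fn gelu(x: f32) -> f32 {
    let c = (2.0 / std::f32::consts::PI).sqrt();
    0.5 * x * (1.0 + (c * (x + 0.044715 * x.powi(3))).tanh())
}

struct PositionWiseFeedForward {
    linear_inner: Linear,
    linear_outer: Linear,
}

impl PositionWiseFeedForward {
    /// `input` is [sequence_length][d_model]. Each position goes through the same
    /// inner -> GELU -> outer pipeline, independently of the other positions.
    fn forward(&self, input: &[Vec<f32>]) -> Vec<Vec<f32>> {
        input
            .iter()
            .map(|position| {
                let hidden: Vec<f32> = self
                    .linear_inner
                    .forward(position)
                    .into_iter()
                    .map(gelu)
                    .collect();
                self.linear_outer.forward(&hidden)
            })
            .collect()
    }
}

fn main() {
    // d_model = 2, hidden size = 3; the weights are arbitrary illustrative values.
    let ffn = PositionWiseFeedForward {
        linear_inner: Linear {
            weight: vec![vec![1.0, 0.0], vec![0.0, 1.0], vec![1.0, 1.0]],
            bias: vec![0.0, 0.0, 0.0],
        },
        linear_outer: Linear {
            weight: vec![vec![1.0, 0.0, 0.0], vec![0.0, 1.0, 1.0]],
            bias: vec![0.0, 0.0],
        },
    };
    let sequence = vec![vec![0.0, 0.0], vec![1.0, -1.0]];
    for (i, out) in ffn.forward(&sequence).iter().enumerate() {
        println!("position {i}: {out:?}");
    }
}
```

Because each position is processed separately, the output for one position never depends on the others. In a Transformer, mixing information across positions is left to the attention layers.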

Project examples

To see and run more examples, clone the https://github.com/burn-rs/burn repository and run the projects below.

Pre-trained models

To build AI applications, you can use the following pre-trained models and fine-tune them on your dataset.

Rust Burn represents an exciting new option in the deep learning framework landscape. If you're already a Rust developer, you can take advantage of Rust's speed, safety, and concurrency to push the boundaries of what's possible in deep learning research and production. Burn aims to strike the right balance between flexibility, performance, and ease of use, creating a unique and versatile framework suited to a variety of use cases.

Although Burn is still in its early stages, it shows promise in addressing pain points of existing frameworks and serving the needs of a variety of practitioners in the field. As the framework matures and its community grows, it may become a production-ready option on par with established frameworks. Its innovative design and choice of language open new possibilities for the deep learning community.

Resources

Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a master's degree in technology management and a bachelor's degree in telecommunications engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.