Skill of the Tmax predictions

The model experiments were evaluated for their skill in predicting the Tmax anomalies over India based on the ACC skill score, the RMSE, and their ability to predict extreme Tmax anomalies (exceeding 4 °C). As the skill of the ensemble mean of several models is often higher than that of a single model, we generated ensembles of various combinations of model predictions and evaluated their skill in predicting the Tmax anomalies over India in the months March–June. Based on this analysis, we identified the model configurations with higher ACC and lower RMSE. The model configurations for each month are given in Table 1.
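As an illustration of the evaluation described above, the following is a minimal sketch of an ensemble mean and the two skill measures. It assumes that ACC is computed as the centered Pearson correlation between predicted and observed anomalies, which is one common definition and may differ from the one used in the study. The function names and the sample values are hypothetical.

```python
import math

def ensemble_mean(member_predictions):
    """Average several equal-length prediction series element by element."""
    n_members = len(member_predictions)
    return [sum(values) / n_members for values in zip(*member_predictions)]

def acc(predicted, observed):
    """Anomaly correlation, assumed here to be the centered Pearson correlation."""
    n = len(observed)
    p_mean = sum(predicted) / n
    o_mean = sum(observed) / n
    cov = sum((p - p_mean) * (o - o_mean) for p, o in zip(predicted, observed))
    p_var = sum((p - p_mean) ** 2 for p in predicted)
    o_var = sum((o - o_mean) ** 2 for o in observed)
    return cov / math.sqrt(p_var * o_var)

def rmse(predicted, observed):
    """Root-mean-square error between two equal-length series."""
    n = len(observed)
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / n)

# Hypothetical daily Tmax anomalies (°C), for illustration only.
observed = [1.0, -2.0, 0.5, 3.0, -1.5]
members = [
    [0.8, -1.0, 0.0, 2.0, -1.0],
    [1.2, -2.0, 1.0, 2.5, -0.5],
]
mean_prediction = ensemble_mean(members)
print(acc(mean_prediction, observed), rmse(mean_prediction, observed))
```

A configuration would be preferred when its ensemble mean gives a higher ACC and a lower RMSE than the alternatives.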

It is interesting to note that, of all the models evaluated in the study, AdaBoost with MLP as the base estimator (hereafter AdaBoost(MLP)) performs better than the other ML models (Table 2) in predicting Tmax anomalies over India in all the months (Table 1). Also, as expected, we found the ensemble mean of the predictions to be skillful in all the months (Table 1). In March, the average of the AdaBoost(MLP) predictions with the number of neurons varying from 2 to 20 was found to give optimal results. In April, the ensemble average of the predictions from AdaBoost(MLP), with the input processed using Min–Max normalization and PCA, configured with the ReLU activation function and the Adam solver, and with the number of neurons varying from 15 to 16, was found to give optimal skill in predicting the Tmax anomalies over India (Table 1). The model configurations for May and June are given in Table 1.

In March and June, the persistence predictions have an ACC skill score of about 0.38 (Table 1). In both these months, the Tmax anomalies predicted by the CFS reforecast have high ACC skill scores of 0.72 and 0.62, whereas the machine learning model does worse than persistence in March, with an ACC skill score of 0.27, and performs only slightly better than persistence in June (Table 1). These findings indicate that the machine learning models used in the study are not very useful for predicting Tmax anomalies over India in March and June.

The ACC skill score of the persistence predictions is low in both April and May (Table 1). The machine learning model AdaBoost(MLP) does better than persistence in predicting the Tmax anomalies over India in both of these months, and its ACC skill score is lower than, but comparable to, that of the CFS reforecast 10-day predictions (Table 1). The modest ACC value of the CFS reforecast indicates that the 10-day prediction of Tmax anomalies over India in April and May is challenging. The low skill scores of the persistence and CFS predictions in April and May may indicate a prediction barrier in these months, which needs further investigation. We further evaluated the predictions of the machine learning models for April and May, and the results are discussed in the following sections.

Frequency distribution of Tmax predictions

The machine learning model for predicting daily Tmax anomalies should realistically predict both the negative and positive anomalies to be useful for real-time forecasting. So, we compared the first four statistical moments (mean, standard deviation, skewness, and kurtosis) along with the 95% cutoff low and high of the time series of predicted Tmax anomalies with the observed Tmax anomalies of IMD. Models with similar statistical properties to those of IMD Tmax anomalies are considered to be adequate for predictions.
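The comparison of statistical moments described above can be sketched as follows. This is an illustrative implementation under stated assumptions: it uses population (biased) moment estimators, reports kurtosis as excess kurtosis (zero for a normal distribution), and reads the "95% cutoff low and high" as the 2.5th and 97.5th percentiles, which is only one possible interpretation. The function names are hypothetical.

```python
import math

def moments(series):
    """Return mean, standard deviation, skewness and excess kurtosis."""
    n = len(series)
    mean = sum(series) / n
    m2 = sum((x - mean) ** 2 for x in series) / n  # second central moment
    m3 = sum((x - mean) ** 3 for x in series) / n  # third central moment
    m4 = sum((x - mean) ** 4 for x in series) / n  # fourth central moment
    std = math.sqrt(m2)
    skewness = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0  # 0 for a normal distribution
    return mean, std, skewness, excess_kurtosis

def percentile(series, q):
    """Percentile with linear interpolation between ranks, q in [0, 100]."""
    ordered = sorted(series)
    position = (len(ordered) - 1) * q / 100.0
    lower = math.floor(position)
    upper = math.ceil(position)
    weight = position - lower
    return ordered[lower] * (1 - weight) + ordered[upper] * weight

def cutoffs_95(series):
    """Assumed reading of the 95% cutoffs: 2.5th and 97.5th percentiles."""
    return percentile(series, 2.5), percentile(series, 97.5)

# Hypothetical anomaly series (°C), for illustration only.
sample = [-2.0, -1.0, 0.0, 1.0, 2.0]
print(moments(sample), cutoffs_95(sample))
```

Applying the same functions to the observed and predicted series gives directly comparable summaries of each distribution.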

The time series of the IMD area-averaged Tmax anomalies over the region of large standard deviation in the northern parts of India (Fig. 1b) in April over the period 1999–2020 has a standard deviation of 2.8 °C and is slightly negatively skewed (−0.30), with a kurtosis of −0.14 (Fig. 2a). The predicted Tmax anomalies in April of both CFS (Fig. 2b) and AdaBoost(MLP) (Fig. 2c) have biases in the first four statistical moments compared to the IMD Tmax anomalies. The Tmax anomalies of the CFS predictions are slightly positively skewed (0.19), with a kurtosis of −0.70 relative to the normal distribution (Fig. 2b). Also, the 95% cutoff low and high values of the CFS predicted time series (−4.2 and 4.2) are smaller in magnitude than the IMD values (−4.8 and 6.2). The AdaBoost(MLP) predicted Tmax anomalies are positively skewed, and the model has difficulty in predicting the negative Tmax anomalies in April (Fig. 2c). Its 95% cutoff low and high values (−2.9 and 3.5) are also smaller in magnitude than the IMD values.

Frequency distribution (Number of days versus Tmax anomaly ranges) of time series of (a) IMD, (b) CFS, (c) AdaBoost(MLP) in April, (d) IMD, (e) CFS, (f) AdaBoost(MLP) in May over Reg1, (g) IMD, (h) CFS, and (i) AdaBoost(MLP) in May over Reg2.

In May, there are two regions of large standard deviation in Tmax, one located over the northern parts of India (Reg1) and the other over the southern parts of India (Reg2) (Fig. 1c). The area-averaged standard deviations of the IMD observed Tmax anomalies over Reg1 and Reg2 over the period 1999–2020 are 2.5 °C (Fig. 2d) and 2.2 °C (Fig. 2g), respectively. The time series over both regions are negatively skewed, with values of −0.54 and −0.84. Also, the time series are leptokurtic, with Reg2 having a higher value (1.81) than Reg1 (0.29), indicating that the time series of Reg2 has fatter tails and a narrower peak in the frequency distribution. The 95% cutoff range of the Tmax anomaly is also higher over Reg1 (5.5 °C) than over Reg2 (4.4 °C), indicating that the extreme temperatures over Reg1 are higher than those over Reg2. The Tmax anomalies of the CFS 10-day predictions over both Reg1 (Fig. 2e) and Reg2 (Fig. 2h) have a 95% high cutoff that is smaller than the IMD value, indicating that the CFS model fails to predict the extreme temperatures in May. The 95% high cutoff of AdaBoost(MLP) over Reg1 (Fig. 2f) and Reg2 (Fig. 2i) is higher than that of the CFS predicted values, though lower than that of the IMD values over Reg1. For Reg2, AdaBoost(MLP) predicted (Fig. 2i) more days with Tmax anomalies exceeding 5 °C than are found in the IMD Tmax anomalies (Fig. 2g).

The above analysis indicates the predicted Tmax anomalies have biases in the frequency distribution for various ranges of temperature. However, it is evident from Fig. 2 that the models could generate extreme Tmax anomalies, exceeding 4 °C, in both April and May over India. We analyze those in the following section.

Hit rate versus False alarm rate

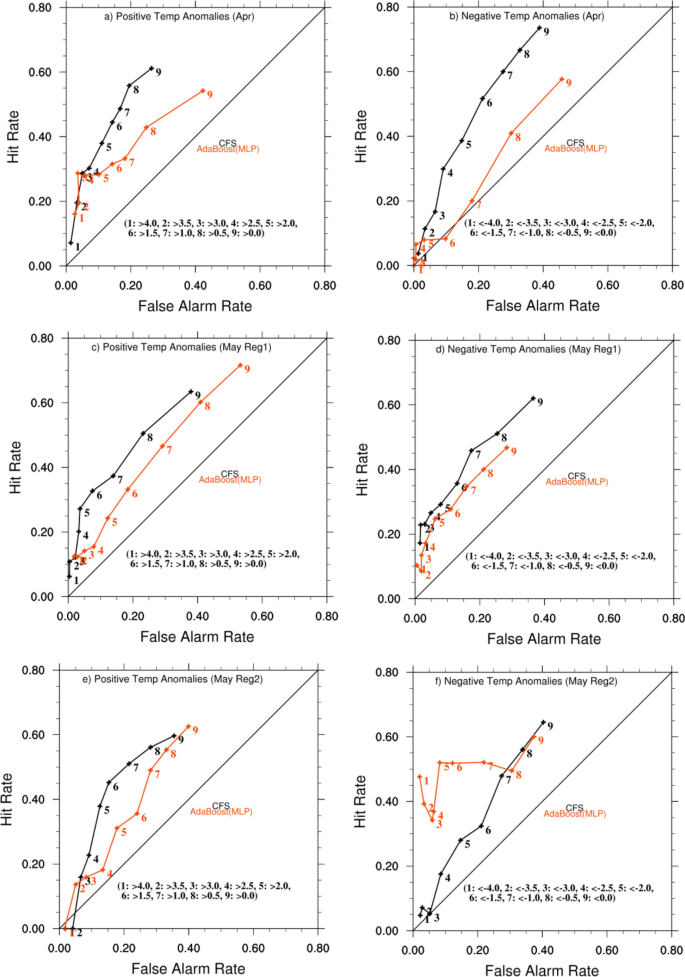

The prediction of extreme Tmax anomalies by the models does not guarantee that the model predictions are accurate, as the predicted daily Tmax anomalies may not match the observations on the same days and may contain many false alarms. We examined the hit rate (HR) vs. false alarm rate (FAR)25 of the predicted time series to see if the sign and magnitude of the predicted Tmax anomaly on a given day matched those of the IMD observation on that day. HR is defined as HR = hit/(hit + miss), where a hit is when an event (a Tmax anomaly of a particular magnitude and sign on a particular day) occurred and was successfully predicted, and a miss is when an event occurred but was not predicted. FAR is defined as FAR = (false alarm)/(false alarm + correct rejection), where a false alarm is when an event was predicted but did not occur, and a correct rejection is when an event did not occur and was not predicted. A prediction is considered to be skillful if HR is greater than FAR. The HR and FAR were calculated for both positive and negative predicted Tmax anomalies at various threshold values, and the results of the analysis are shown in Fig. 3.
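The HR and FAR definitions above can be written out as a short sketch. The function names, the `below` flag for negative thresholds, and the use of strict inequalities to define an event are assumptions made for illustration. The worked values use the CFS counts quoted in this section for the > 4.0 °C threshold in April.

```python
def contingency(predicted, observed, threshold, below=False):
    """Count hits, misses, false alarms and correct rejections.

    An event is an anomaly above `threshold`, or below it when `below`
    is True (for negative anomalies). Strict inequalities are assumed.
    """
    hits = misses = false_alarms = correct_rejections = 0
    for p, o in zip(predicted, observed):
        event_predicted = p < threshold if below else p > threshold
        event_occurred = o < threshold if below else o > threshold
        if event_occurred and event_predicted:
            hits += 1
        elif event_occurred:
            misses += 1
        elif event_predicted:
            false_alarms += 1
        else:
            correct_rejections += 1
    return hits, misses, false_alarms, correct_rejections

def hit_rate(hits, misses):
    """HR = hit / (hit + miss)."""
    return hits / (hits + misses)

def false_alarm_rate(false_alarms, correct_rejections):
    """FAR = false alarm / (false alarm + correct rejection)."""
    return false_alarms / (false_alarms + correct_rejections)

# CFS counts quoted in the text for the > 4.0 °C threshold in April.
hr = hit_rate(4, 52)
far = false_alarm_rate(7, 477)
print(round(hr, 2), round(far, 2), hr > far)
```

Under the skill criterion stated above, a prediction at a given threshold counts as skillful when `hr > far`. Neither rate is defined when its denominator is zero, a case this sketch does not handle.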

The left and right panels show hit rate vs. false alarm rate for positive and negative temperature anomalies, respectively, for predictions made by CFS (black) and AdaBoost(MLP) (red) models in April (a, b), May-Reg1 (c, d), May-Reg2 (e, f) from top to bottom. The positive temperature anomalies are defined as temperatures above 0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, and 4.0 °C, and are shown with numbers 9, 8, 7….1 on the plot as indicated in each panel. Similarly, the negative temperature anomalies are defined as temperatures below 0.0, − 0.5, − 1.0, − 1.5, − 2.0, − 2.5, − 3.0, − 3.5, and − 4.0 °C, and are also shown with numbers 9, 8, 7….1 on the plot.

AdaBoost(MLP) has a lower HR than CFS for the smaller positive temperature thresholds, from > 0.0 °C to > 2.5 °C, but performs better than the CFS model for thresholds above 3.0 °C (Fig. 3a). For a threshold of > 3.0 °C, the AdaBoost(MLP) model has 35 hits, 87 misses, 18 false alarms and 403 correct rejections, with an HR of 0.286 and an FAR of 0.03, whereas the CFS model has 35 hits, 87 misses, 21 false alarms and 397 correct rejections, with an HR of 0.286 and an FAR of 0.05. For a threshold of > 4.0 °C, AdaBoost(MLP) has an HR of 0.16 (9 hits and 47 misses) and an FAR of 0.02 (13 false alarms and 471 correct rejections), whereas CFS has an HR of 0.07 (4 hits and 52 misses) and an FAR of 0.01 (7 false alarms and 477 correct rejections) (Fig. 3a). These results indicate that AdaBoost(MLP) does slightly better than CFS in predicting the extreme positive Tmax anomalies over India in April. AdaBoost(MLP) has a large bias in predicting extreme negative Tmax anomalies over India (Fig. 2c), which is also reflected in the plot of HR vs. FAR for the negative Tmax anomalies (Fig. 3b). For AdaBoost(MLP), the HR is comparable to the FAR at all thresholds for the negative Tmax anomalies, indicating that the model has no skill in predicting the negative Tmax anomalies in April. The CFS model has a higher HR than FAR at all thresholds for the negative temperature anomalies (Fig. 3b).

In May, over Reg1, CFS has a higher HR than AdaBoost(MLP) for the positive Tmax anomalies at thresholds from > 0.0 °C to > 3.0 °C (Fig. 3c). However, for the thresholds > 3.5 °C and > 4.0 °C, AdaBoost(MLP) has a higher HR than the CFS reforecast predictions, along with a higher FAR (Fig. 3c). For the threshold > 3.5 °C, AdaBoost(MLP) has an HR of 0.12 (8 hits and 57 misses) and an FAR of 0.02 (14 false alarms and 479 correct rejections), whereas CFS has an HR of 0.11 (7 hits and 58 misses) and an FAR of 0.004 (2 false alarms and 491 correct rejections). For the threshold > 4.0 °C, AdaBoost(MLP) has an HR of 0.12 (4 hits and 28 misses) and an FAR of 0.02 (10 false alarms and 516 correct rejections), and CFS has an HR of 0.06 (2 hits and 30 misses) and an FAR of 0.004 (2 false alarms and 524 correct rejections) (Fig. 3c). The results indicate that the performance of CFS and AdaBoost(MLP) is comparable in predicting the extreme positive temperature anomalies, though AdaBoost(MLP) has a slightly higher number of false alarms than CFS. For the predicted negative Tmax anomalies, both CFS and AdaBoost(MLP) have a higher HR than FAR at all thresholds, with the CFS reforecast performing better, with a higher HR and a lower FAR than the AdaBoost(MLP) predictions (Fig. 3d).

Both CFS and AdaBoost(MLP) failed to predict the extreme temperatures > 4.0 °C in May over Reg2 (Fig. 3e). For the temperature threshold > 4.0 °C, AdaBoost(MLP) has an HR of 0.00 (0 hits and 8 misses) and an FAR of 0.01 (9 false alarms and 541 correct rejections), whereas CFS has an HR of 0.00 (0 hits and 8 misses) and an FAR of 0.02 (10 false alarms and 540 correct rejections). For the temperature threshold > 3.5 °C, AdaBoost(MLP) has a slightly higher HR than CFS: AdaBoost(MLP) has an HR of 0.13 (3 hits and 19 misses) and an FAR of 0.05 (27 false alarms and 509 correct rejections), whereas CFS has an HR of 0.00 (0 hits and 22 misses) and an FAR of 0.04 (22 false alarms and 514 correct rejections). For the other positive temperature thresholds, from > 0.0 °C to > 3.0 °C, CFS has a higher HR and a lower FAR than the AdaBoost(MLP) predictions. AdaBoost(MLP) has a higher HR and a lower FAR in predicting the negative Tmax anomalies in May over Reg2 (Fig. 3f).

The above analysis shows that the machine learning model AdaBoost(MLP) is suitable for predicting the extreme positive temperatures over India in April and May. AdaBoost(MLP) has performance similar to that of CFS in predicting extreme positive temperatures > 3.5 °C and > 4.0 °C in April and May over India.

Feature importance of the input attributes in the Tmax anomaly prediction

As discussed in the previous section, the AdaBoost(MLP) model shows good skill in predicting extreme Tmax anomalies over India in April and May, comparable to that of the CFS reforecast predictions. In this section, we attempt to better understand which features contributed to the prediction of the Tmax anomalies in the AdaBoost(MLP) models, and thereby get an idea of the variables that are important for predicting the Tmax anomalies. For this, we used the permutation feature importance26 technique, a tool that is part of the scikit-learn software. The permutation importance of an input attribute is calculated by randomly shuffling the attribute and measuring the decrease in the model score. A large drop in the score indicates that the input attribute is relatively important for the model prediction. After calculating the feature importance of the attributes in predicting Tmax anomalies for all the years 1982–2020, the input attributes were ranked. The feature with the higher rank is considered to have contributed relatively more to the model predictions. The ranking of the input attributes for the identified models and their variation over the years is shown in Fig. 4a–e. As discussed before, the input attributes were derived based on correlation analysis. Correlation does not imply causation: the input attributes may be mere statistical artifacts and may not really be responsible for causing the variation of Tmax anomalies over India. To identify the input attributes that could have caused the variations of Tmax anomalies over India, we applied the Granger causality test to the input attributes with higher ranks (rank < 5), as these input attributes would have a relatively larger effect on the predictions. Granger causality is a statistical technique that helps to determine whether one time series is likely to influence the change in another, i.e., whether one time series can be used to predict the other. In our study, we used the “grangertest” function of the “lmtest” package in R to implement the Granger causality test. The physical processes through which the input attributes identified through Granger causality contribute to the variations of Tmax over India can be investigated through numerical model experiments, which we intend to carry out in future studies.
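The shuffle-and-rescore idea behind permutation feature importance can be sketched in plain Python as below. This is a hand-written illustration of the technique, not the scikit-learn implementation used in the study (which is available as `sklearn.inspection.permutation_importance`). The choice of R² as the score, the toy model, and all names and values are assumptions made for the example.

```python
import random

def r2_score(predicted, observed):
    """Coefficient of determination, used here as the model score."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for p, o in zip(predicted, observed))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def permutation_importance(predict, rows, targets, n_repeats=10, seed=0):
    """Mean drop in score when each input column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = r2_score([predict(row) for row in rows], targets)
    importances = []
    for col in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [row[col] for row in rows]
            rng.shuffle(shuffled)  # break the link between this column and the target
            permuted = [row[:col] + [value] + row[col + 1:]
                        for row, value in zip(rows, shuffled)]
            score = r2_score([predict(row) for row in permuted], targets)
            drops.append(baseline - score)
        importances.append(sum(drops) / n_repeats)
    return importances

# Hypothetical fitted model: strong dependence on attribute 0,
# weak dependence on attribute 1, none on attribute 2.
def toy_model(row):
    return 2.0 * row[0] + 0.2 * row[1]

data_rng = random.Random(42)
rows = [[data_rng.gauss(0, 1) for _ in range(3)] for _ in range(200)]
targets = [toy_model(row) for row in rows]

importances = permutation_importance(toy_model, rows, targets)
# Rank attributes from most to least important (largest score drop first).
ranking = sorted(range(3), key=lambda col: importances[col], reverse=True)
print(ranking)
```

Repeating such a ranking for every cross-validation year would give the spread of ranks that the whiskers in Fig. 4 summarize.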

(a) Ranking of input attributes using the permutation feature importance technique for April, (b) input variables contributing most to the principal components PC0, PC1, PC2 and PC3 over the period 1982–2020, (c) same as (a) but for May Reg1, (d) same as (b) but for May Reg1, and (e) same as (a) but for May Reg2. The whiskers show the variation of rank in predicting Tmax anomalies from 1982 to 2020.

In April, PCA was applied to the input after Min–Max normalization before feeding the data to the AdaBoost(MLP) model (Table 1), retaining 95% of the variance in the data as mentioned in the Methods section. The number of components selected by the algorithm varied from 15 to 17 for the predictions of April Tmax anomalies for the period 1982 to 2020 using leave-one-year-out cross-validation. As an example, the explained variance ratios, i.e. the fraction of variance explained by each of the selected components, for one of the years are 0.2817, 0.1661, 0.0849, 0.0677, 0.0560, 0.0473, 0.0454, 0.0355, 0.0316, 0.0265, 0.0240, 0.0218, 0.0179, 0.0168, 0.0157 and 0.0124; that is, PC0 explains about 28.2%, PC1 about 16.6%, PC2 about 8.5%, and so on. We obtained the relative importance of each of the principal components using the permutation feature importance technique, and the ranks of the principal components contributing most to the April predictions are shown in Fig. 4a. Only the ranks for PC0-PC14, which are common to all the predictions, are shown in Fig. 4a. As expected, PC0, which explains a large part of the variance (about 28%), is relatively more important than the other principal components, followed by PC1, PC3 and PC2. The contributions of PC4-PC15 show large variations in ranking across the predictions (Fig. 4a). After identifying the relatively important principal components, we identified the input variables that are most important to those principal components using the “components_” attribute of the scikit-learn PCA implementation. The input variable with the largest weight in a principal component, as given by this attribute, is considered the most important input variable contributing to that principal component. We obtained such values for all the predictions, and the results are shown in Fig. 4b.
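As a small consistency check on the numbers above, the sketch below accumulates the quoted explained variance ratios until the 95% target is reached, and shows one way to pick the dominant input of a component from its weights (in scikit-learn, one row of the PCA `components_` array). The function names and the example weights are hypothetical, and taking the largest absolute weight as the selection rule is an assumption.

```python
# Explained variance ratios quoted in the text for one cross-validation year.
explained_variance_ratio = [
    0.2817, 0.1661, 0.0849, 0.0677, 0.0560, 0.0473, 0.0454, 0.0355,
    0.0316, 0.0265, 0.0240, 0.0218, 0.0179, 0.0168, 0.0157, 0.0124,
]

def n_components_for(ratios, target=0.95):
    """Smallest number of leading components whose cumulative ratio reaches target."""
    cumulative = 0.0
    for count, ratio in enumerate(ratios, start=1):
        cumulative += ratio
        if cumulative >= target:
            return count
    return len(ratios)  # target not reached: keep every component

def dominant_input(weights, names):
    """Name of the input with the largest absolute weight in one component."""
    index = max(range(len(weights)), key=lambda i: abs(weights[i]))
    return names[index]

print(n_components_for(explained_variance_ratio))

# Hypothetical weights of three inputs in a single component.
print(dominant_input([0.1, -0.7, 0.3], ["region 1", "region 6", "region 8"]))
```

For the quoted ratios, the first 15 components explain about 93.9% of the variance and the 16th brings the total to about 95.1%, which is consistent with 16 components being retained for that year.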

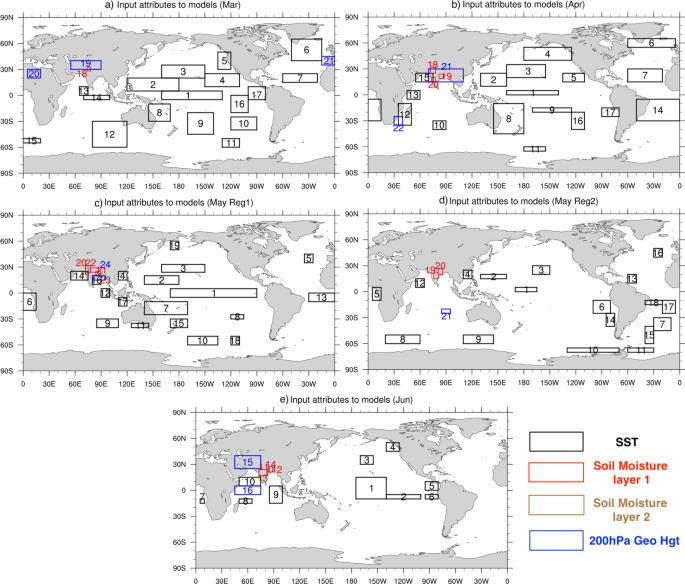

The input attributes over region 6 and region 8 of Fig. 5b contribute most to the principal component PC0, region 1 contributes most to PC1, region 12 and region 17 contribute most to PC2, though regions 6 and 11 contribute in two of the years, and region 17 contributes most to PC3 (Fig. 4b). Using the Granger causality test, we checked whether the input from these identified regions can Granger cause Tmax anomalies over India. Of the identified regions, we find that regions 6, 8, and 17 can Granger cause Tmax anomalies over India. For the other regions (1 and 12), the causality test is not statistically significant, so it is difficult to explain physically how the input from these regions would have contributed to the prediction of Tmax anomalies over India. The physical mechanism through which SST over regions 8 and 17 can cause variation in Tmax anomalies over the northern parts of India is not clear, and model experiments are needed to clarify their influence. The SST variation over region 6 is mostly a response to variations in atmospheric processes, and those atmospheric processes can propagate to the northern parts of India and cause variations in the temperature over India27.

Regions of significant correlation between the Tmax anomalies over the regions of large standard deviation over India (marked in Fig. 1) and the spatial distribution of 10-day lead SST, soil moisture, and 200 hPa geopotential height anomalies in (a) March, (b) April, (c) May (Reg1), (d) May (Reg2) and (e) June. The area averages of the variables over the regions indicated by the boxes are the input attributes to the models.

PCA was applied to the input after standardization before providing the data to the AdaBoost(MLP) model (Table 1) in May for predicting Tmax over Reg1. The number of components selected by the algorithm varied from 15 to 17 for the predictions of May Tmax anomalies over Reg1 for the period 1982 to 2020 using leave-one-year-out cross-validation.

The relative importance of each of the principal components using the permutation feature importance technique and the ranks of the principal components contributing most to the May predictions are shown in Fig. 4c. Only the ranks for PC0-PC14, which are common in all the predictions, are shown in Fig. 4c. The PC0 which explains a large variance (about 25%) is relatively more important compared to other principal components, followed by PC1, PC3, and PC2.

The regions 7, 1, 21, 23, 19, 8, and 10 shown in Fig. 5c contribute most to PC0, PC1, PC2, and PC3 (Fig. 4d). The Granger causality test shows that regions 1, 7, 19 and 10 can Granger cause Tmax anomalies over India. Region 1, located over the equatorial Pacific (Fig. 5c), can affect the Tmax anomalies over India through an atmospheric teleconnection: the atmospheric response to the heating associated with the SST anomalies over region 1 can extend to the northern parts of India and affect the Tmax variations over Reg1 in May. However, the physical processes through which the other regions can cause variations of Tmax over India need to be understood through numerical model experiments.

Of the regions shown in Fig. 5d, SST anomalies over regions 8, 18, 17, 6, 19, and 13 are relatively more important than the input from other regions in the prediction of Tmax anomalies over India in May over Reg2 (Fig. 4e). The Granger causality test showed that, of these six regions, only region 6 can Granger cause Tmax anomalies over the coastal regions of India. The physical processes through which the SST anomalies over region 6 can affect the Tmax anomalies over India are not clear, and careful model experiments are needed to understand them.