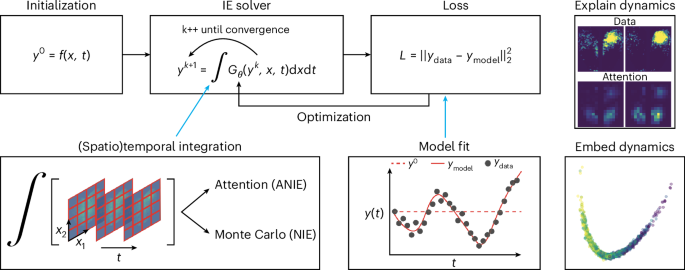

We give here a detailed account of the implementation of the NIE and ANIE models (one-dimensional (1D) and (n + 1)-dimensional IEs, respectively). More specifically, we provide a more thorough description of Algorithms 1 and 2 for solving the IEs associated with neural networks G (feed-forward) and \({\mathfrak{Att}}\) (transformer), and contextualize these algorithms in the optimization procedure that learns the neural networks.

Implementation of NIE

We only consider the case of equation (1), as the case where the function G splits in the product of a kernel K and the (possibly) nonlinear function F is substantially identical. We observe that the main components of the training of NIEs are two. An optimization step that targets G, and a solver procedure to obtain a solution associated with the IE individuated by G, or more precisely, the integral operator that G defines. Therefore, we want to solve equation (1) for a fixed neural network G, determine how far this solution is from fitting the data and optimize G in such a way that at the next step, we obtain a solution that more accurately fits the data. At the end of the training, we have a neural network G that defines an integral operator and, in turn, an IE, whose associated solution(s) approximates the given data.

To fix the notation, let us call X as the dataset for training. This contains several instances of n-dimensional curves with respect to time. In other words, we consider \(X={\{{X}_{i}\}}_{i\le N}\), where N is the number of instances and \({X}_{i}=\{{{\bf{x}}}_{0}^{i},\ldots ,{{\bf{x}}}_{m}^{i}\}\), where each \({{\bf{x}}}_{i}\in {{\mathbb{R}}}^{q}\) is a q-dimensional vector, and the sequence of \({{\bf{x}}}_{k}^{i}\) refers to a discretization of the time interval where the curves are defined. For simplicity, we assume that time points are taken in [0, 1]. The neural network G defining the integral operator will be denoted by Gθ, to explicitly indicate the dependence of G on its parameters. The objective of the training is to optimize θ in such a way that the corresponding Gθ defines an IE whose solutions yi(t) corresponding to different initializations pass through the discretized curves xi(t).

Let us now consider one training step n, where the neural network \({G}_{{\theta }_{n}}\) has weights obtained from the previous training steps (or randomly initialized if this is the first step). We need to solve the IE

$${\bf{y}}=f

(5)

associated with the integral operator \(\mathop{\int}\nolimits_{\!\alpha

(6)

where \({{\mathfrak{Att}}}_{\theta }({\bf{y}},{\bf{x}},t)\) is an approximation of an integral operator \(\int\nolimits_{0}^{T}\mathop{\int}_{\Omega }\)\(G({\bf{y}},{\bf{x}},{{\bf{x}}}^{{\prime} },t,s)d{{\bf{x}}}^{{\prime} }ds\) for some G. The solver is initialized through the free function f(x, t), which plays the role of the first ‘guess’ for the IE solution. Observe that since f is evaluated on the full discretization of Ω × [0, T], then the length m of the sequence of vectors that approximates f(x, t) equates the product of the number of space points sk for each dimension and time point tr. The solver, therefore, creates its first approximation by setting y0(x, t) = f(x, t). Then, for the first iteration of the solver, we create the new sequence \({\tilde{{\bf{y}}}}^{0}\) by concatenating to each y and the spatiotemporal m coordinates (xs, tr). Now, \(\tilde{{\bf{y}}}\) consists of a sequence of m = mTmΩ vectors (one per spacetime point), which also possess spacetime encoding (through concatenation). Supplementary Fig. 1 shows a schematic of the integration procedure through a transformer. Then, we set

$${\tilde{{\bf{y}}}}^{1}({\bf{x}},t)=f({\bf{x}},t)+{{\mathfrak{Att}}}_{\theta }({\tilde{{\bf{y}}}}^{0}),$$

where the dependence of \({\tilde{{\bf{y}}}}^{1}\) on spacetime coordinates x and t indicates that we have one vector \({\tilde{y}}^{1}\) per spacetime coordinate. If q is the dimension of the dynamics (that is, the number of channels per spacetime point), then the sequence \({\tilde{{\bf{y}}}}^{1}\) consists of vectors of dimension q + d + 1, where d is the number of space dimensions. This happens because \({\tilde{{\bf{y}}}}^{1}\) is the output of a transformer of a sequence obtained by a sequence of q-dimensional vectors concatenated with a (d + 1)-dimensional sequence. The two simplest options are either to discard the last d + 1 dimensions of the vectors or add an additional linear layer that projects from q + d + 1 dimensions to q. As tests have not shown a significant difference between the two approaches, we have adopted the former. Consequently, we obtain the one-dimensional sequence z1(x, t). Last, we set y1(x, t) = ry0 + (1 − r)z1, where r is a smoothing factor that is a hyperparameter of the solver. One, therefore, computes the error m(y0, y1) between the initial step and the second one to quantify the degree of change in the new approximation, where m(•, •) is a global error metric fixed throughout.

Now, we iterate the same procedure and assuming that the approximation yi to the equation has been obtained, we then concatenate the spacetime coordinates to obtain \({\tilde{{\bf{y}}}}^{i}\) and set

$${\tilde{{\bf{y}}}}^{i+1}({\bf{x}},t)=f({\bf{x}},t)+{{\mathfrak{Att}}}_{\theta }({\tilde{{\bf{y}}}}^{i}),$$

which we use to obtain zi+1 (by deleting the last d + 1 dimensions). Then, we set yi+1 = ryi + (1 − r)zi+1 and compute the global error m(yi, yi+1). Supplementary Fig. 2 shows a solver step integration in detail.

In practice, the number of iterations for the solver is a fixed hyperparameter that we have set between 3 and 5 in our experiments. This has been sufficient to achieve good results, as well as to learn a model that is stable under the solving procedure described above. Since the solver is fully implemented in PyTorch and the model that approximates the integral operator is a transformer, we can simply backpropagate through the solver at each epoch, after we have solved for y and compared the solution with the given data \({\{{X}_{i}\}}_{i\le N}\).

We complete this subsection with a more concrete description of the motivations for approximating integration through the mechanism of self-attention. Very similar perspectives have appeared in other sources21,34, but we provide a formulation of such considerations that more easily fit the perspectives of integral operators for IEs used in this Article. This also serves as a more explicit description of \({\mathfrak{Att}}\) found in Algorithm 2.

We consider an n-dimensional dynamics y(x, t) depending on space x ∈ Ω (for some domain Ω) and time t ∈ [0, 1]. The queries, keys and values of self-attention can be considered as maps \({\psi }_{W}:{{\mathbb{R}}}^{n+1}\times \Omega \times [0,1]\longrightarrow {{\mathbb{R}}}^{1\times d}\), where d is the latent dimension of the self-attention, and W = Q, K and V for queries, keys and values, respectively. Then, (for W = Q, K, V), we have

$$W\,[{\bf{y}}| {\bf{x}}| t]=\left[\begin{array}{c}{\psi }_{W}^{0}[{\bf{y}}| {\bf{x}}| t]\\ \vdots \\ {\psi }_{W}^{i}[{\bf{y}}| {\bf{x}}| t]\\ \vdots \\ {\psi }_{W}^{d-1}[{\bf{y}}| {\bf{x}}| t]\end{array}\right],$$

where [y∣x∣t] indicates the concatenation of the terms in the bracket. Let us now consider the ‘traditional’ quadratic self-attention. Similar considerations also apply for the linear attention used in the experiments, mutatis mutandis. The product between queries and keys gives

$$\left[\left(\cdots {\psi }_{Q}^{i}\left[{\bf{y}}| {\bf{x}}| t\right]\cdots \right)\cdot \left(\cdots {\psi }_{K}^{j}\left[{\bf{y}}| {\bf{x}}| t\right]\cdots \right)^{\rm{T}}\right]_{ij}=\left({\psi }_{Q}^{i}\cdot {\hat{\psi }}_{K}^{j}\right),$$

where T indicates transposition and \(\hat{\psi }\) indicates the columns of the transposed matrix. Then, if z is the output of the self-attention layer (observe that this consists of (zi)j, where i indicates a spatiotemporal point and j indicates the jth dimension of the n-dimensional dynamics). Then, we have

$${({{\bf{z}}}_{i})}_{j}=\sum _{j}\left({\psi }_{Q}^{i}\cdot {\hat{\psi }}_{K}^{j}\right){\psi }_{V}^{j}\approx {\int}_{\!\Omega \times [0,1]}K({\bf{y}},{\bf{x}},t,{{\bf{y}}}^{{\prime} },{{\bf{x}}}^{{\prime} },{t}^{{\prime} })F({{\bf{y}}}^{{\prime} },{{\bf{x}}}^{{\prime} },{t}^{{\prime} }){\rm{d}}{{\bf{x}}}^{{\prime} }{\rm{d}}{t}^{{\prime} },$$

where the prime symbols indicate the variables we are summing on (this is why the are ‘being integrated’ in the integral), and y is evaluated at x and t whereas y′ is evaluated at x′ and t′.

Additional experiments

Benchmark of (A)NIE training speed

Neural ordinary differential equations (NODEs) can be slow and have poor scalability46. As such, several methods have been introduced to improve their performance46,47,48,49,50. Despite these improvements, a NODE is still significantly slower than discrete methods such as LSTMs. We hypothesize that an (A)NIE has significantly better scalability than a NODE, comparable with fast but discrete LSTMs, despite being a continuous model. To test this, we compare NIE and ANIE with the latest optimized version of (latent) NODE51 and to LSTM on three different dynamical systems: Lotka–Volterra equations, Lorenz system and IE-generated two-dimensional (2D) spirals (see the ‘Artificial dataset generation’ section for the data generation details). During training, models were initialized with the first half of the data and were tasked to predict the second half. The training speeds are reported in Supplementary Table 1. Although all the models achieve comparable (good) fits to the data, we find that ANIE outperforms all the models in two out of the three datasets in terms of speed. Furthermore, ANIE has better MSE compared with all the other models.

Hyperparameter sensitivity benchmark

For most deep learning models, including NODEs, finding numerically stable solutions usually requires an extensive hyperparameter search. Since IE solvers are known to be more stable than ODE solvers, we hypothesize that (A)NIE is less sensitive to hyperparameter changes. To test this, we quantify the model fit, for the Lotka–Volterra dynamical system, as a function of two different hyperparameters: learning rate and L2 norm weight regularization. We perform this experiment for three different models: LSTM, latent NODE and ANIE. As shown in Supplementary Fig. 3, we find that ANIE generally has a lower validation error as well as more consistent errors across hyperparameter values, compared with LSTM and NODE, thereby validating our hypothesis.

Modelling 2D IE spirals

To further test the ability of ANIE in modelling non-local systems, we benchmark ANIE, NODE and LSTM on a dataset of 2D spirals generated by IEs. These data consist of 500 2D curves of 100 time points each. The data were split in half for training and testing. During training, the first 20 points were given as the initial condition and the models were tasked to predict the full 100-point dynamics. Details on the data generation are described in the ‘Artificial dataset generation’ section. For ANIE, the initialization is given via the free function f, which assumes the values of the first 20 points and sets the remaining 80 points to be equal to the value of the 20th point. For NODEs, the initialization is given as the RNN on the first 20 points, which outputs a distribution corresponding to the first time point (details on latent ODE experiments are provided elsewhere30). For the LSTM, we input the data in segments of 20 points to predict the consecutive point of the sequence. The process is repeated with the output of the previous step until all the points of the curve are predicted. During inference, we test the models’ performance on never-before-seen initial conditions. Extended Data Table 4 shows the correlation between the ground-truth curve and the model predictions. Extended Data Fig. 5 shows the correlation coefficients for the 500 curves. In summary, ANIE significantly outperforms the other tested methods in predicting IE-generated non-local dynamics.

Solver convergence

We now consider the convergence of the solver to a solution of an IE for a trained model. Our experiment here considers a model that has been trained with a number of iterations, and we explore whether the solver iterations converge to a solution at the end of the training. These results show that the model learns to converge to a solution of equation (7) within the iterations that are fixed during training. They show that a fixed point for IE is obtained when outputting a prediction.

Supplementary Fig. 5 and Fig. 3 show the convergence error (that is, the value ∥yn+1 − yn∥), and the guesses produced by the solver during inference (that is, yn for n corresponding to the iteration index), respectively.

Ground-truth data are given at the bottom. Along with the final prediction (step 7), the subsequent solver guesses are shown. The error during the solution generation are reported on the right. The figure also shows that the solver converges when producing the final output (compare with Supplementary Fig. 5).

IEs

IEs are equations where the unknown function appears under the sign of integral. These equations can be given, in general, as

$$\lambda {\bf{y}}=f+T({\bf{y}}),$$

(7)

where T is an integral operator, for example, as in equations (1) and (3), and f is a known term of the equation. In fact, this functional equations have been studied for classes of compact operators T that are not necessarily in the form of integral operators52. We can distinguish two fundamental kinds of equation from the form given in equation (7), which have been extensively studied throughout the years. When λ = 0, we say that the corresponding IE is of the first kind, whereas when λ ≠ 0, we say that it is of the second kind.

In this Article, we formulate our methods based on equations of the second kind for the following important theoretical considerations, which apply to the case where T is bounded over an infinite space (such as the space of functions as we consider in this Article). First, an equation of the first kind can easily have no solution, as the range of a bounded operator T on an infinite space is not the whole space53. Therefore, for choices of f, there is no y such that T(y) = −f, and therefore, equation (7) has no solutions. The other issue is intrinsic to the nature of the equation of the first kind, and does not relate to the existence of solutions. In fact, any compact injective operator T (on an infinite space) does not admit a bounded left inverse53. In practice, this means that if equation (7) has a unique solution for f, then varying f by a small amount can result in very significant variations in the corresponding solution y. This is clearly a potential issue when dealing with a deep learning model that aims at learning operator T from the data. In fact, observations from which T is learned might be noisy, which might result in very considerable perturbations of the solution y and, consequently, considerable perturbations on the operator T that the model converges to. Since equations of the second kind are much more stable, we have formulated all the theory in this setting, and implemented our solver for such equations. The issues relating to the existence and uniqueness of the solution for these equations are discussed in the ‘Existence and uniqueness of solutions’ section.

The theories of IEs and IDEs are tightly related, and it is often the case to reduce problems in IEs to problems in IDEs and vice versa, both in practical and theoretical situations. IEs are also related to differential equations, and it is possible to reformulate problems in ODEs in the language of IEs or IDEs. In certain cases, IEs can also be converted to differential equation problems, even though this is not always possible9,54. In fact, the theory of IEs is not equivalent to that of differential equations. The most intuitive way of understanding this is by considering the local nature of differential equations, as opposed to the non-local origin of IEs. By the non-locality of IEs, it is meant that each spatiotemporal point in an IE depends on an integration over the full domain of the solution function y. In the case of differential equations, each local point depends only on the contiguous points through the local definition of the differential operators.

IE (1D)

We first discuss IEs where the integral operator only involves a temporal integration (that is, 1D), as discussed in the ‘IEs’ section. In analogy with the case of differential equations, this case can be considered as the one corresponding to ODEs.

These IEs are given by an equation of type

$${\bf{y}}

(8)

where f is the free term, which does not depend on y, whereas the unknown function y appears both on the left- and right-hand sides under the sign of the integral. The term \(\mathop{\int}\nolimits_{\alpha

(9)

where \(\Omega \subset {{\mathbb{R}}}^{n}\) is a domain in \({{\mathbb{R}}}^{n}\) and \({\bf{y}}:\Omega \times {\mathbb{R}}\longrightarrow {{\mathbb{R}}}^{m}\). Here m does not necessarily coincide with n.

PIEs and higher-dimensional IEs have been studied in some restricted form since the 1800s, as they have been employed to formulate the laws of electromagnetism before the unified version of Maxwell’s equations was published. In addition, early work on the Dirichlet’s problem found the IE approach proficuous, and it is well known that several problems in scattering theory (molecular, atomic and nuclear) are formulated in terms of (P)IEs. In fact, the Schrödinger equation can be recast as an IE55. Bound-state problems have also been treated with the IE formalism56.

Generalities on solving IEs

The most striking difference between the procedure of solving an IE and an ODE is that for an IE to evaluate at a single time point, one needs to know the solution for all the time points. This is clearly an issue, since solving for one point requires that we already know a solution for all the points. To better elucidate this point, we consider a simple comparison between the solution procedure of an ODE equation of type \(\dot{{\bf{y}}}=f({\bf{y}},t)\) and an IE of type \({\bf{y}}=f

Theorem 4.1

Let ϵ > 0 be fixed, and suppose that T is Lipschitz with constant k < 1. Then, we can find y ∈ X such that ∥T(y) + f − y∥ < ϵ, independent of the choice of f.

Proof

Let us set y0 ≔ f and yn+1 = f + T(yn) and consider the term ∥y1 − y0∥. We have

$$\parallel {y}_{1}-{y}_{0}\parallel =\parallel T(\;{y}_{0})\parallel.$$

For an arbitrary n > 1, we have

$$\parallel {y}_{n+1}-{y}_{n}\parallel =\parallel T(\;{y}_{n})-T(\;{y}_{n-1})\parallel \le k\parallel {y}_{n}-{y}_{n-1}\parallel .$$

Therefore, applying the same procedure to yn − yn−1 = T(yn−1) − T(yn−2) until we reach y1 − y0, we obtain the inequality

$$\parallel {y}_{n+1}-{y}_{n}\parallel \le {k}^{n}\parallel T(\;{y}_{0})\parallel .$$

Since k < 1, the term kn∥T(y0)∥ is eventually smaller than ϵ for all n ≥ ν for some choice of ν. Defining y ≔ yν for such ν gives the following:

$$\parallel T(\;{y}_{\nu })+f-{y}_{\nu }\parallel =\parallel {y}_{\nu +1}-{y}_{\nu }\parallel < \epsilon .$$

The following now follows easily.

Corollary 4.2

Consider the same hypotheses as above. Then, equation (7) admits a solution. In particular, if the integrand G in equation (8) is contractive with respect to y with constant k such that k ⋅ (b − a) < 1 (where [a, b] is the co-domain of α and β), the iterative method in Algorithm 1converges to a solution of the equation.

Proof

From the proof of Theorem 4.1, it follows that the sequence yn is a Cauchy sequence. Since X is Banach, then yn converges to y ∈ X. By continuity of T, y is a solution to equation (7). For the second part of the statement, observe that when G is contractive with respect to y, then we can apply Theorem 4.1 to show that the sequence generated following Algorithm 1is Cauchy, and we can proceed as in the first part of the proof.

Remark 4.3

Observe that the result in Corollary 4.2applies to Algorithm 2, too, under the assumptions that the transformer architecture is contractive with respect to the input sequence y. Also, a statement that refers to higher-dimensional IEs can be obtained (and proved) similar to the second part of the statement of Corollary 4.2, using the measure of Ω × [a, b] instead of the value (b − a).

In practice, the method of successive approximations is implemented as follows. The initial guess for the IE is simply given by the free function f (that is, T0(f)), which is used to initialize the iterative procedure. Then, we apply T to y0 ≔ T0(f) to obtain a new solution z1 ≔ f(t) + T1(y0). We set y1 ≔ ry0 + (1 − r)z1 and apply T2 to the solution y1 and repeat. Here r is a smoothing factor that determines the amount of contribution from the new approximation to consider at each step. As the iterations grow, the fractions of the contributions due to the smoothing factor r tend to 1. Observe that when we sum ryi + (1 − r)yi+1 with r = 0, we obtain the terms of the von Neumann series up to degree i applied to f: \(\mathop{\sum }\nolimits_{0}^{i}{T}^{\;k}(\;f\;)\). The smoothing factor generally shows good empirical regularization for IE solvers, and we have set r = 1/2 throughout our experiments, even though we have not seen any concrete difference between different values of r. This procedure is shown in Fig. 2.

In another work11, computations on the error bounds for the iterative procedure described above when the integrand function G splits into the product of a kernel (see above) and a linear function F are given. Also, a detailed description of the Nyström approximation for the computation of the error is given. We describe a concrete realization of the iterative procedure discussed above in the ‘IEs’ section, along with the learning steps for the training of our model. Moreover, we additionally observe that the procedure described above does not depend on T being an integral operator or a general operator, and therefore, applying this methodology to the case where we have a transformer instead of T is still meaningful, in the assumption that T is such that the iterated series of approximations is convergent.

Depending on the specific IE that one is solving (for example, Fredholm or Volterra, 1D or (n + 1)-dimensional), the actual numerical procedure for finding a numerical solution can vary. For instance, several studies have showcased such a wide variety of specific methods for the solution of certain types of equation35,58,59,60,61. Such variations on the same theme of iterative procedure depend on finding the most efficient way of converging to a solution, finding the best error bounds, improving stability of the solver and substantially depending on the form of the integral operator. As our method is applied without the actual knowledge of the shape of the integral operator, but it actually aims at inferring (that is, learning) the integral operator from data, we implement an iterative procedure that is fixed and depends only on a hyperparameter smoothing factor. This is described in detail in the next section. However, we point out that since the integrand, and therefore the integral operator itself, is learned during the training, one can assume that the model will optimize with respect to the procedure in a way that our iterations are in a sense ‘optimal’ with respect to the target.

Thus far, our considerations on the implementation of IE solvers seem to point to a fundamental computational issue, since they entail a more sophisticated solving procedure than that of ODEs or PDEs. However, in various situations, even solving ODEs and PDEs through IE solvers presents significant advantages that are not necessarily obvious from the above discussions. The first advantage is that IE solvers are significantly more stable than ODE and PDE solvers, as shown in other work6,7,35. This, in particular, provides a solution to the issue of underflowing during the training of NODEs that does not consist of a regularization, but of a complete change in perspective. In addition, even though one needs to iterate to solve an IE, in general, the number of iterations is not particularly high. In fact, in our experiments, the total number of iterations turned out to be sufficient to be fixed between 4 and 6. However, when solving, for instance, an ODE, one needs to sequentially go through each time step. These can be in the order of 100 (as that in some of our experiments). On the contrary, our IE solver processes the full time interval in parallel for each iteration. This results in a much faster algorithm compared with differential solvers, as shown in our experiments.

Existence and uniqueness of solutions

The solver procedure described in the previous subsection, of course, assumes that there exists a solution to start with. As mentioned at the beginning of the section, we treat equations of the second kind in this Article also because the existence conditions are better behaved than for the equations of the first kind. We now give some theoretical considerations in this regard. We will also discuss when these solutions are uniquely determined. Existence and uniqueness are two fundamental parts of the well posedness of IEs, the other being the continuity of solutions with respect to the initial data.

A concise and relatively self-contained reference for the existence and uniqueness of solutions for (linear) Fredholm IEs is provided elsewhere53. In fact, it is shown that if a Fredholm equation has a Hermitian kernel, then the IE has a unique solution whenever λ is not an eigenvalue of the integral operator. For real coefficients, which is the case we are interested in, one can simply reduce the case to symmetric kernels, which are kernels for which K(t, s) = K(s, t) for all t and s. In this Article, since we have assumed λ = 1, the condition becomes equivalent to saying that there is no function z such that \(\mathop{\int}\nolimits_{0}^{1}K(t,s)z(s)ds=z

(10)