Language models are now routinely aligned using reinforcement learning from human feedback (RLHF), but current reward modeling methods struggle to capture human preferences accurately. Traditional reward models are trained as simple classifiers and cannot reason explicitly about response quality, which limits how well they guide LLM behavior. A key issue is that they cannot generate reasoning traces, so all evaluation must happen implicitly within a single forward pass. This constraint prevents the model from thoroughly assessing the nuances of human preferences. Alternatives such as the LLM-as-a-Judge framework attempt to address this limitation, but they generally perform worse than traditional reward models on pairwise preference classification tasks, highlighting the need for a more effective method.

Researchers have tried several approaches to reward modeling for language models. Ranking models such as Bradley-Terry and Plackett-Luce have been employed, but they cannot accommodate intransitive preferences. Some work directly models the probability that one response is preferred over another, while other work models rewards across multiple objectives. Recent work has also proposed keeping and training the language modeling head as a form of regularization.
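To make the Bradley-Terry formulation concrete, the minimal sketch below computes the preference probability from two scalar rewards and illustrates why a model that assigns one scalar score per response always yields transitive preferences. The function name and the example scores are illustrative assumptions, not taken from the paper.

```python
import math


def bradley_terry_prob(reward_a: float, reward_b: float) -> float:
    """Probability that response A is preferred over B: sigmoid(r_A - r_B)."""
    diff = reward_a - reward_b
    # Numerically stable sigmoid for large positive or negative differences.
    if diff >= 0:
        return 1.0 / (1.0 + math.exp(-diff))
    z = math.exp(diff)
    return z / (1.0 + z)


# Hypothetical scalar rewards for three responses to the same prompt.
scores = {"A": 1.2, "B": 0.4, "C": -0.3}

p_ab = bradley_terry_prob(scores["A"], scores["B"])
p_bc = bradley_terry_prob(scores["B"], scores["C"])
p_ac = bradley_terry_prob(scores["A"], scores["C"])

# Because every response gets one scalar score, A > B and B > C force A > C.
# A cyclic preference such as A > B > C > A cannot be encoded this way.
print(f"P(A>B)={p_ab:.3f}  P(B>C)={p_bc:.3f}  P(A>C)={p_ac:.3f}")
```

All three probabilities exceed 0.5 for any choice of scores with A above B above C, which is the transitivity restriction mentioned above.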

Critique-based feedback methods have also been explored, using self-generated critiques to improve generation quality or to act as preference signals. However, these approaches are distinct from efforts to train better reward models when human preference data is available. Some researchers have investigated using oracle critiques or human-labeled critique preferences to teach language models to critique effectively.

The LLM-as-a-Judge framework, which uses a scoring rubric to evaluate answers, resembles critique-based methods but focuses on evaluation rather than correction. This approach produces chain-of-thought reasoning, but it generally performs worse than traditional reward models on pairwise preference classification tasks.

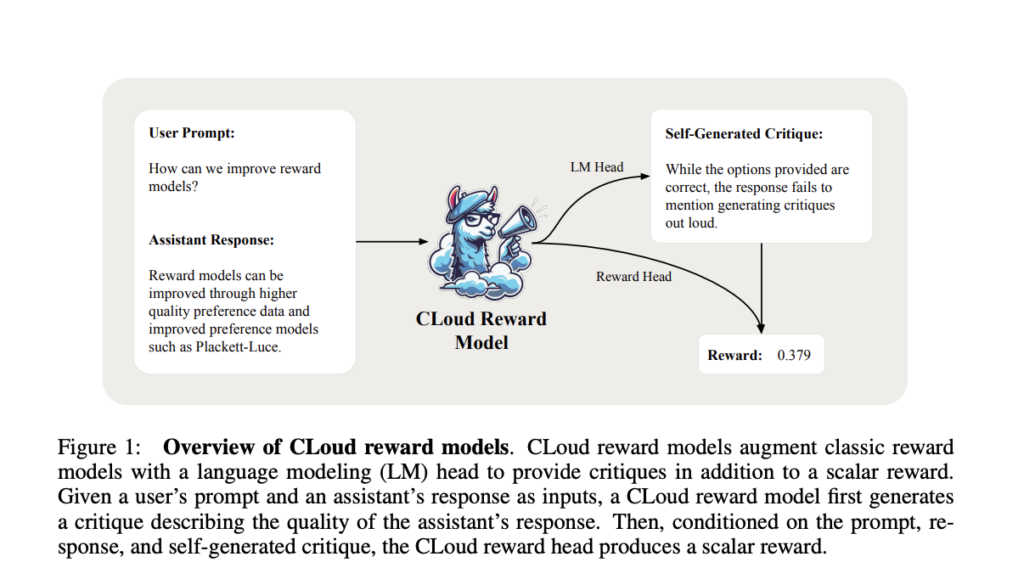

Researchers from Databricks, MIT, and the University of California, San Diego have introduced Critique-out-Loud (CLoud) reward models, a new approach to improving language model performance in reinforcement learning from human feedback. These models generate a detailed critique of how well the assistant's response answers the user's query before producing a scalar reward for the response quality. This process combines the strengths of traditional reward models and the LLM-as-a-Judge framework.

The CLoud reward model is trained on a preference dataset that contains prompts, responses, and oracle critiques. Training combines supervised fine-tuning on the oracle critiques, which teaches critique generation, with a Bradley-Terry preference model, which produces the scalar reward. To improve performance further, the researchers investigate multi-sample inference techniques, particularly self-consistency, which samples multiple critique-reward predictions and marginalizes across critiques for a more accurate reward estimate.
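The two training signals described above can be sketched in plain Python. This is a hedged illustration, not the authors' implementation: the function names, the per-token log-probability inputs, and the weighted-sum combination controlled by the hypothetical `sft_weight` parameter are all assumptions made for the example.

```python
import math
from typing import Sequence


def bradley_terry_loss(reward_chosen: float, reward_rejected: float) -> float:
    """-log sigmoid(r_chosen - r_rejected), computed as a stable softplus."""
    x = reward_rejected - reward_chosen
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def critique_sft_loss(token_log_probs: Sequence[float]) -> float:
    """Mean negative log-likelihood the model assigns to the critique tokens."""
    return -sum(token_log_probs) / len(token_log_probs)


def combined_loss(
    reward_chosen: float,
    reward_rejected: float,
    critique_token_log_probs: Sequence[float],
    sft_weight: float = 1.0,  # assumed weighting, not specified in this article
) -> float:
    """Preference loss plus a weighted critique fine-tuning loss (assumed form)."""
    preference = bradley_terry_loss(reward_chosen, reward_rejected)
    critique = critique_sft_loss(critique_token_log_probs)
    return preference + sft_weight * critique


# Toy numbers: the chosen response scores higher, critique tokens are fairly likely.
print(combined_loss(1.0, -0.5, [-0.2, -0.1, -0.3], sft_weight=0.5))
```

The preference term shrinks as the chosen response's reward pulls ahead of the rejected one, while the critique term shrinks as the model assigns higher probability to the target critique.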

The approach aims to unify reward models with the LLM-as-a-Judge paradigm, and it has the potential to significantly improve both pairwise preference classification accuracy and win rate across a range of benchmarks. The researchers explore key design choices, such as on-policy versus off-policy training and the benefit of self-consistency over critiques, to optimize reward modeling performance.

The CLoud reward model extends a traditional reward model by keeping a language modeling head alongside the base model and the reward head. Training proceeds in three steps: supervised fine-tuning on the oracle critiques, replacing the oracle critiques in the dataset with self-generated critiques, and training the reward head on those self-generated critiques. Training the reward head on the model's own critiques minimizes the distribution shift between training and inference. The objective combines a Bradley-Terry preference loss with a critique supervised fine-tuning loss. To improve performance at inference time, the CLoud model can apply self-consistency, sampling multiple critiques for a prompt-response pair and averaging the predicted rewards to obtain a final estimate.
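The self-consistency step can be illustrated with a short sketch: sample several critiques for the same prompt-response pair, score each one, and average the rewards. The callables `sample_critique` and `score_with_critique` are hypothetical stand-ins for the language modeling head and the reward head, and the toy stubs exist only so the example runs without a model.

```python
from statistics import mean
from typing import Callable


def self_consistent_reward(
    prompt: str,
    response: str,
    sample_critique: Callable[[str, str], str],
    score_with_critique: Callable[[str, str, str], float],
    num_samples: int = 4,
) -> float:
    """Average the predicted reward over several independently sampled critiques."""
    if num_samples < 1:
        raise ValueError("num_samples must be at least 1")
    rewards = []
    for _ in range(num_samples):
        critique = sample_critique(prompt, response)
        rewards.append(score_with_critique(prompt, response, critique))
    return mean(rewards)


# Toy stand-ins: canned critiques and a keyword-based scorer (assumptions).
_canned = iter([
    "Accurate but terse.",
    "Accurate and clear.",
    "Misses one edge case.",
    "Accurate.",
])


def toy_sample_critique(prompt: str, response: str) -> str:
    return next(_canned)


def toy_score(prompt: str, response: str, critique: str) -> float:
    return 1.0 if critique.startswith("Accurate") else 0.0


estimate = self_consistent_reward(
    "What is 2 + 2?", "4", toy_sample_critique, toy_score, num_samples=4
)
print(estimate)  # 0.75
```

Averaging trades extra inference compute for a lower-variance reward estimate, since any single sampled critique may be unrepresentative.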

The researchers evaluated the CLoud reward model against traditional reward models using two key metrics: pairwise preference classification accuracy and best-of-N (BoN) win rate. For pairwise preference classification, they used the RewardBench evaluation suite, which includes the Chat, Chat-Hard, Safety, and Reasoning categories. BoN win rate was evaluated using ArenaHard, an open-ended generation benchmark.
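As a rough illustration of the two metrics, the sketch below computes pairwise accuracy from (chosen, rejected) reward pairs and performs the selection step of best-of-N decoding. The function names and toy values are assumptions for the example; computing an actual win rate would additionally require judging each selected response against a baseline, which is not shown.

```python
from typing import Callable, Sequence, Tuple


def pairwise_accuracy(reward_pairs: Sequence[Tuple[float, float]]) -> float:
    """Fraction of (chosen, rejected) pairs where the chosen response scores higher."""
    correct = sum(1 for chosen, rejected in reward_pairs if chosen > rejected)
    return correct / len(reward_pairs)


def best_of_n(candidates: Sequence[str], reward_fn: Callable[[str], float]) -> str:
    """Return the candidate response with the highest predicted reward."""
    return max(candidates, key=reward_fn)


# Toy reward predictions for four preference pairs (chosen first, rejected second).
pairs = [(0.9, 0.1), (0.2, 0.6), (1.5, 1.1), (0.3, -0.4)]
print(pairwise_accuracy(pairs))  # 0.75

# Toy rewards for three sampled responses to one prompt.
toy_rewards = {"draft one": 0.2, "draft two": 0.8, "draft three": 0.5}
print(best_of_n(list(toy_rewards), toy_rewards.__getitem__))  # draft two
```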

The CLoud reward models significantly outperformed traditional reward models on pairwise preference classification across all RewardBench categories at both the 8B and 70B model scales, which translated into a substantial gain in average accuracy.

In the BoN evaluation on ArenaHard, the CLoud models showed a Pareto improvement over traditional models, achieving similar or significantly higher win rates. At Best-of-16, CLoud improved the win rates of the 8B and 70B models by 1.84 and 0.89 percentage points, respectively. These results suggest that CLoud reward models guide the behavior of language models better than traditional reward models do.

CLoud reward models represent a major advance in preference modeling for language models. By keeping the language modeling capability alongside a scalar reward head, these models reason explicitly about response quality through critique generation. The approach shows significant improvements over traditional reward models in pairwise preference modeling accuracy and best-of-N decoding performance. Self-consistency decoding proved beneficial for reasoning tasks, especially those with short reasoning horizons. By unifying language generation and preference modeling, CLoud reward models establish a new paradigm that opens the way to improving reward models through variable inference compute, laying the foundation for more advanced and effective preference modeling in language model development.


Asjad is an Intern Consultant at Marktechpost. He is pursuing a B.Tech in Mechanical Engineering from Indian Institute of Technology Kharagpur. Asjad is an avid advocate of Machine Learning and Deep Learning and is constantly exploring the application of Machine Learning in Healthcare.
