From chatbots used in financial services and smart healthcare to conversational AI systems like Alexa, Siri, and Google Assistant, intelligent assistants have become an integral part of our daily lives in recent years. These smart systems do more than answer simple information-seeking questions from users.1 They also assist in high-stakes decision-making scenarios such as surgery, collision prevention, and criminal justice, and serve as educational tools for health persuasion and prosocial behavior.2,3,4

Artificial intelligence (AI) is a comprehensive field that aims to create machines that exhibit intelligent behavior. It includes a variety of technologies, such as learning algorithms, inference engines, natural language processing, and decision-making systems,5 which allow machines to perform tasks ranging from basic ones, such as filtering emails,6 to extremely complex ones, such as operating self-driving cars.7 Within this field, large language models (LLMs) are a subtype of AI developed through extensive training on large amounts of text data.8 This training allows them to engage in activities based on human language, such as answering questions, composing text, and holding conversations. One prominent example of an LLM is OpenAI’s GPT (Generative Pre-trained Transformer) series, which has demonstrated strong capabilities in generating human-like text, providing insights, and even writing code based on user prompts. A chatbot is a type of AI application designed to mimic human conversation.9 Chatbots range from simple rule-based systems that respond to specific inputs to more sophisticated AI-driven programs that allow for more natural interactions and, in some cases, can leverage extensive internet data. Conversational AI further extends these capabilities by integrating additional elements such as natural language processing and speech recognition. This creates fluid and intuitive interactions with users, making conversational AI a valuable tool in customer service, personal assistants, and various interactive platforms, where it provides customized responses and excels at handling complex inquiries.

Alongside the rapid adoption of these intelligent systems, academics and experts have begun to question whether, and to what extent, they are designed to support user experiences aligned with the goal of promoting diversity, equity, and inclusion (DEI).10,11 Recent studies have shown that these emerging machine learning systems perform poorly at accurately recognizing the voices of racial minorities.12 There is also a growing body of anecdotal evidence raising concerns that AI chatbots can produce misleading and conspiracy-related responses.13

While there has been heated interdisciplinary debate about AI fairness in recent years, the root of the problem, namely inequity in who gets to express their opinions in communication systems, has been an issue throughout human history. In ancient Athens, the institutions of direct democracy, such as the assembly, excluded many people (e.g., women, foreigners, and slaves).14,15 The exclusion of certain social groups’ voices from communication systems continues today, whether in politics,16 in discussions of social issues,17 or on social media.18 As democracy scholars have emphasized, inclusive dialogue should not only involve participants with diverse backgrounds, perspectives, and life experiences, but should also be a process in which people can express, listen to, and respond to diverse perspectives.19 However, inequalities are “always present” because different languages carry different socioeconomic and cultural power, and linguistic styles that symbolize power often dominate conversations.20

Conversational AI built on large language models (LLMs) is itself a type of communication system. As in human-to-human communication, ensuring fairness in human-AI interactions is a challenge. What distinguishes conversational AI (between humans and intelligent agents) from traditional communication systems (e.g., interpersonal communication and mass media) is its powerful and profound impact across all areas of people’s lives. However, how these new conversational AI systems work (e.g., their algorithms and training datasets) is a black box to researchers because of industry proprietary rights.21 As scholars in Science and Technology Studies (STS) have warned, emerging technologies that are designed and deployed without input from diverse publics (i.e., people from different social backgrounds) engaging in a democratic way can amplify existing social disparities (e.g., in wealth and power) and discrimination.22,23 Understanding how emerging communication technologies such as conversational AI empower or disempower different social groups is theoretically significant, because it extends (deliberative) democratic theory to new media technologies, and it offers practical implications for how these technologies can be designed and deployed to benefit all members of society equally.

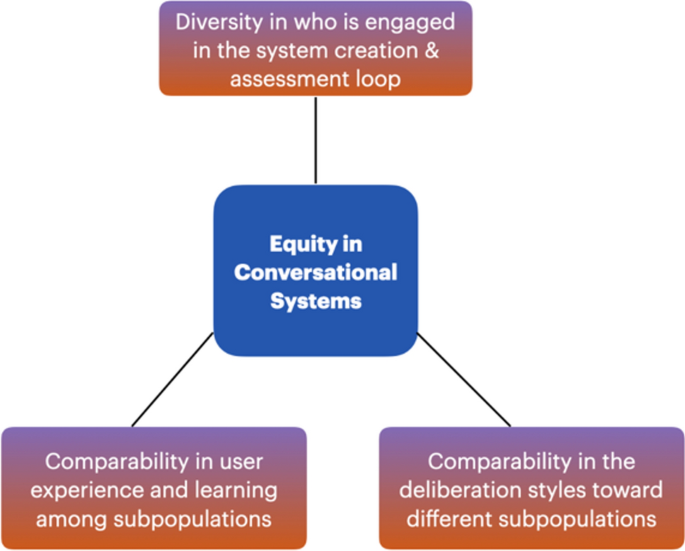

This paper proposes an analytical framework (Figure 1) for assessing the fairness of conversational AI systems, based on the scholarship of deliberative democracy and science communication. This framework is based on a general understanding of equity as the provision of resources to address an individual’s particular situation. In terms of evaluating the fairness of conversational AI systems, he highlights three criteria to consider in this evaluation process:The first criterion is Engage diverse user groups representing different social groups; within the evaluation loop. Existing research in computer science has begun to audit conversational AI systems.twenty four, most research on LLMs is primarily concerned with assessing equity in terms of gender, race, and ethnicity, i.e., how exactly LLMs handle input from people of different genders, races, and ethnicities. and have focused on how to respond. However, it is essential for research on AI fairness to go beyond these frequently emphasized characteristics. Extensive research in science and technology studies has shown that technological innovations are influenced by other socio-demographics and attitudes, such as how technology benefits or constrains people with limited language skills or formal education. It has been demonstrated that the following factors should also be considered.20and people with minority viewpoints on certain issues (e.g., climate change deniers, anti-vaxxers, racists)twenty five. Therefore, to assess the fairness of conversational AI systems, we need to look beyond gender, race, and ethnicity to include social groups, such as those with limited education or language skills, or those with minority views on a particular issue. An investigation including this will be required.

A framework for evaluating the fairness of conversational AI.

The second evaluation dimension examines equity through user experience and learning: specifically, the extent to which users differ in how they feel about their experiences chatting with conversational AI systems, and in the knowledge and positive attitude change they gain from these interactions. User experience is an important criterion widely adopted in the human-computer interaction (HCI) literature. Scholars have proposed measures of user experience such as user satisfaction, intention to reuse the system, and intention to recommend it to others.26,27 To ensure equity, it is important to understand whether the experiences of people interacting with these systems differ by demographic and attitudinal attributes. Learning also plays an important role. Research on computer-mediated communication has investigated the extent to which users’ attitudes change after using a chatbot system on a particular topic. For example, researchers have found that chatbots designed with human-like persuasive and psychological strategies can be effective in persuading users and reinforcing attitudes toward healthy and prosocial behaviors.28 An unbiased conversational AI system should provide comparable user experiences to different people and foster social learning.

The third criterion examines fairness in conversations between humans and AI systems: specifically, how much variation exists in discussion style and sentiment when conversational AI systems respond to different users. Many empirical studies have investigated biases in speech recognition systems,7,29 but not in conversational systems such as chatbots. Dialogue systems are more complex than speech recognition because they rely on large language models to accurately understand user input and provide appropriate responses, engaging in conversations that require more interaction and intelligence to meet user needs. However, few studies have investigated how these LLMs respond to different social groups when discussing important social and scientific issues. As Alexa- and Siri-like systems become widespread, it is important to study conversations beyond speech recognition. Deliberative democracy scholarship emphasizes that democratic communication systems need to include a variety of language styles, such as greeting language, legitimation when expressing an opinion (including citing facts or personal stories), and rhetoric (such as appealing to emotions).30 In the context of human-AI communication, conversational AI systems that achieve conversational fairness need to incorporate greetings, legitimation, and emotional engagement to accommodate different social groups equally.

To evaluate the fairness of existing conversational AI systems along these dimensions, we conducted an algorithmic audit that collected a large dataset of conversations between GPT-3, one of the most advanced conversational AI systems during the study period (late 2021), and online crowd workers. OpenAI’s GPT-3 is a family of autoregressive language models capable of human-like text completion. Our algorithmic audit of GPT-3 aims to provide empirical evidence addressing three research questions for assessing the level of fairness in conversational AI systems.

RQ1. When users interact with GPT-3 about important science and social issues (such as climate change and BLM), how do their experiences and learning outcomes differ across social groups?

RQ2. How does GPT-3 interact with different social groups about important scientific and social issues?

RQ3. How do conversational differences correlate with user experiences and learning outcomes with GPT-3 on important science and social issues?

Our algorithmic audit study focuses on two topics on which participants could converse with the GPT-3 chatbot: climate change and Black Lives Matter (BLM). The first topic represents a classic scientific issue that is controversial around the world, and research shows that public perceptions of it vary across populations. In particular, a minority of people hold skeptical and negative attitudes toward the reality and anthropogenic nature of climate change, and this group is often difficult to persuade.31 Studying climate change therefore offers an opportunity to examine how GPT-3 responds to this group compared with the opinion majority. It also allows us to investigate whether social learning or attitude change occurs after chatting. The second topic, BLM, represents a very serious social issue that has received extensive media coverage and public attention over the past decade.32 We recognize that the word dialogue is rich in meaning, especially for interactions between humans and technology, and through technology.33 In this paper, we use the term dialogue in its broadest sense and do not assess the authenticity, vulnerability, or quality of listening in these dialogues.

By operationalizing our theoretical framework, this paper presents, to the best of our knowledge, the first study to audit how GPT-3 converses with different social groups about important science and social issues. We built a user interface that allows crowd workers to interact directly with the GPT-3 model. The interface integrates the GPT-3 API, delivering real-time responses to participants. To ensure a diverse participant pool, we implemented screening questions and conducted a pilot test to gain insight into the demographic distribution of workers on online crowdsourcing platforms.