As public concern about the ethical and social implications of artificial intelligence continues to grow, it might seem like it’s time to slow down. But inside tech companies, the sentiment is quite the opposite. As Big Tech’s AI race heats up, a Microsoft executive wrote in an internal email about generative AI, reported by The New York Times, that “worrying about things that can be fixed later is absolutely a fatal mistake right now.”

In other words, it’s time to “move fast and break things,” to quote Mark Zuckerberg’s old motto. Of course, when you break things, you may have to fix them later, at a cost.

In software development, the term “technical debt” refers to the implied cost of making future fixes as a consequence of choosing faster, less careful solutions now. Rushing to market can mean releasing software that isn’t ready, knowing that once it hits the market, you’ll find out what the bugs are and can hopefully fix them then.

However, negative news articles about generative AI tend not to be about these kinds of bugs. Rather, many of the concerns are that AI systems are amplifying harmful biases and stereotypes, and that students are using AI in deceptive ways. We hear about privacy concerns, people falling for misinformation, labor exploitation, and worries about how quickly human jobs will be replaced, to name a few. These issues are not software defects. Recognizing that technology reinforces oppression and prejudice is very different from recognizing that a button on a website doesn’t work.

As a technology ethics educator and researcher, I have thought a lot about these kinds of “bugs.” What’s accruing here is not just technical debt but ethical debt. Just as technical debt can result from limited testing during the development process, ethical debt results from not considering possible negative consequences or societal harms. And with ethical debt in particular, the people who incur it are rarely the people who pay for it in the end.

Off to the races

As soon as OpenAI’s ChatGPT, the starter pistol in today’s AI race, was released in November 2022, I envisioned debt ledgers starting to fill up.

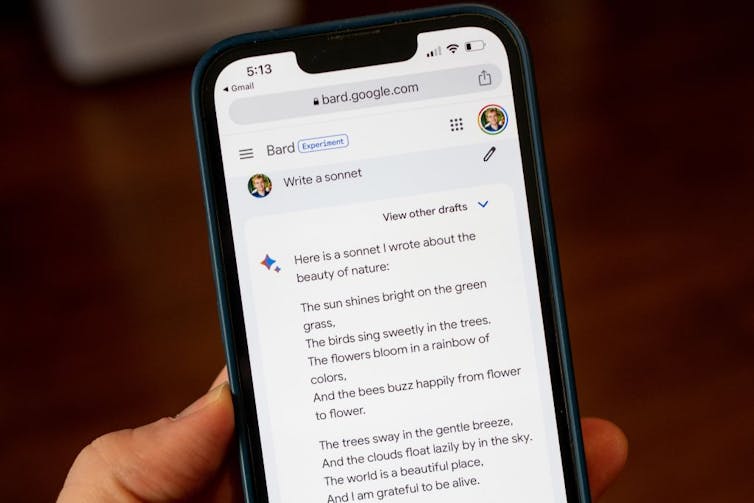

Within months, Google and Microsoft released their own generative AI tools, which seemed to be rushed to market in an effort to keep up. Google’s stock price fell after its chatbot Bard confidently gave incorrect information during the company’s demo. One might expect Microsoft to be particularly cautious about chatbots, given Tay, its Twitter-based bot that was shut down almost immediately in 2016 after making misogynistic and white supremacist remarks. Yet early conversations with the AI-powered Bing left some users unsettled, and it has repeated known misinformation.


When the social debt of these rushed releases comes due, I expect we will hear talk of unintended or unanticipated consequences. After all, even with ethical guidelines in place, it’s not as if OpenAI, Microsoft, or Google can see the future. How can anyone know what societal problems might emerge before the technology is even fully developed?

The root of this dilemma is uncertainty, which is a common side effect of many technological innovations but is magnified in the case of artificial intelligence. After all, part of the point of AI is that its behavior is not known in advance. AI may not be designed to produce negative outcomes, but it is designed to produce the unforeseen.

However, it is disingenuous to suggest that technologists cannot accurately anticipate what many of these consequences might be. By now, there have been countless examples of AI reproducing bias and exacerbating social inequities, yet these problems are rarely identified publicly by the tech companies themselves. It was outside researchers, for example, who found racial bias in widely used commercial facial analysis systems and in a medical risk prediction algorithm that was being applied to around 200 million Americans. Academics, advocacy groups, and research organizations like the Algorithmic Justice League and the Distributed AI Research Institute are doing much of this work: identifying harms after the fact. And that pattern seems unlikely to change if companies keep firing ethicists.

Speculating – responsibly

I sometimes describe myself as a technology optimist who thinks and prepares like a pessimist. The only way to reduce your ethical debt is to take the time to think ahead about what could go wrong, but this is not something engineers are necessarily taught to do.

The scientist and iconic science fiction writer Isaac Asimov once said that science fiction writers “foresee the inevitable, and although problems and catastrophes may be inevitable, solutions are not.” Of course, science fiction writers are not usually tasked with developing those solutions, but right now, the engineers developing AI are.

So how can AI designers learn to think more like science fiction writers? One of my current research projects focuses on developing ways to support this process of ethical speculation. I don’t mean designing with some far-future robot war in mind; I mean the ability to consider future consequences at all, including in the very near future.


This is a topic I’ve been exploring in my teaching for some time, encouraging students to think through the ethical implications of science fiction technology so that they are prepared to do the same with the technologies they create. One exercise I developed is called the “Black Mirror Writers Room,” where students speculate about possible negative consequences of technologies like social media algorithms and self-driving cars. These discussions are often grounded in patterns from the past or in the potential for bad actors.

Ph.D. candidate Shamika Klassen and I evaluated this teaching exercise in a research study and found that there are educational benefits to encouraging computing students to imagine what might go wrong in the future and then brainstorm ways to avoid that future in the first place.

However, the purpose isn’t to prepare students for some distant future; it is to teach speculation as a skill that can be applied immediately. This skill is especially important for helping students imagine harm to other people, because technology-induced harms often disproportionately affect marginalized groups that are underrepresented in the computing professions. The next step in my research is to apply these ethical speculation strategies with real-world technology design teams.

Time to pause?

In March 2023, an open letter with thousands of signatures advocated pausing the training of AI systems more powerful than GPT-4. Its authors warned that, left unchecked, AI development might “eventually outnumber, outsmart, obsolete and replace us,” or even cause a “loss of control of our civilization.”

As critics of the letter pointed out, this focus on hypothetical risks ignores the real harms happening today. Nevertheless, I think there is little disagreement among AI ethicists that AI development needs to slow down, and that developers throwing up their hands and citing “unintended consequences” is not going to cut it.

We are only a few months into the “AI race” picking up significant speed, and I think it’s already clear that ethical considerations are being left behind. But the debt will come due eventually, and history suggests that Big Tech executives and investors may not be the ones paying it.