Stephen Wolfram has written a very long blog post titled “What's really going on in machine learning? Some minimal models.” Does it answer its own question?

In my opinion, no. Put more strongly, it hasn't even gotten off the starting line. Nonetheless, the approach is interesting and well worth a read.

The point is, we already know why neural networks (what he calls “machine learning”) work. Neural networks combine a simple principle of optimization with Ashby's law of requisite variety. This law basically says that for a system to be successful it must be as variable or complex as its environment. It's an old law from early cybernetics (and whatever happened to that particular endeavor?). Extending this a bit, it says that if a system has a certain number of degrees of freedom, any system that wants to control, mimic, or work against it needs to have the same number or more degrees of freedom. The optimization principle, roughly speaking, says that if you keep moving those degrees of freedom in the “better” direction, eventually you'll reach an acceptable state.

Deep neural networks are systems with a huge number of degrees of freedom. So many, in fact, that they rival the degrees of freedom inherent in the real world, or at least a constrained subset of it. Optimization is done by backpropagation, which is just gradient descent with the gradients computed efficiently using the chain rule. Essentially, this works by taking the derivative of the error with respect to the parameters, which tells you how to tweak each parameter to reduce the error, and then moving things in that direction. Gradient descent is just the mathematical version of: “If you keep going downhill, there's a very good chance you'll hit bottom.”
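To make the "keep going downhill" idea concrete, here is a minimal sketch of gradient descent fitting a straight line to a handful of points. The function name, learning rate, step count, and data are all illustrative assumptions, not anything taken from Wolfram's post.

```python
def gradient_descent(xs, ys, lr=0.05, steps=2000):
    """Fit y ~ w*x + b by repeatedly stepping downhill on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Derivatives of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Move each parameter a small step in the direction that reduces the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = gradient_descent(xs, ys)
```

With only two parameters this is trivial, but a deep network does the same thing with millions of them, using backpropagation to obtain all the derivatives in one pass.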

These are such powerful and simple ideas that one can quickly come to the conclusion that if you can implement something as a sufficiently complex, differentiable system, you can train it to do almost anything. For example, if you can change a discrete, non-differentiable programming technique into one that is smooth and differentiable, a system can learn to write programs using gradient descent. See DeepCoder Learns How to Write Programs.

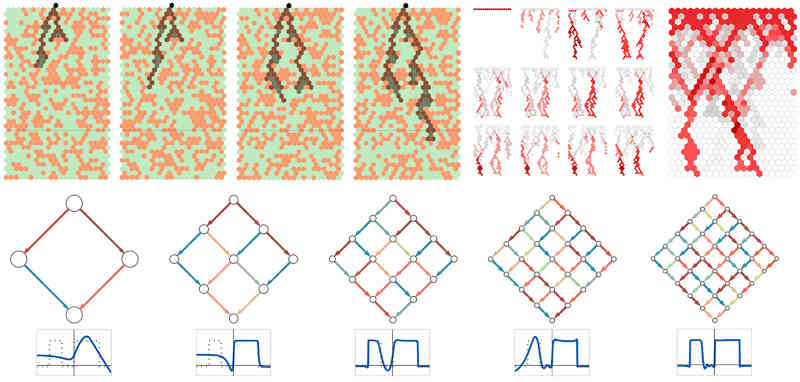

Wolfram's latest blog post explains how cellular automata can be used to learn functions. Before diving into that, it explains how standard neural networks learn by reducing the model to something simple enough that its evolution can be tracked. This is interesting, but it doesn't reveal what we all really want to know: does the network capture the “essence” of what it learns, or does it just memorize it without reducing it to something simpler?

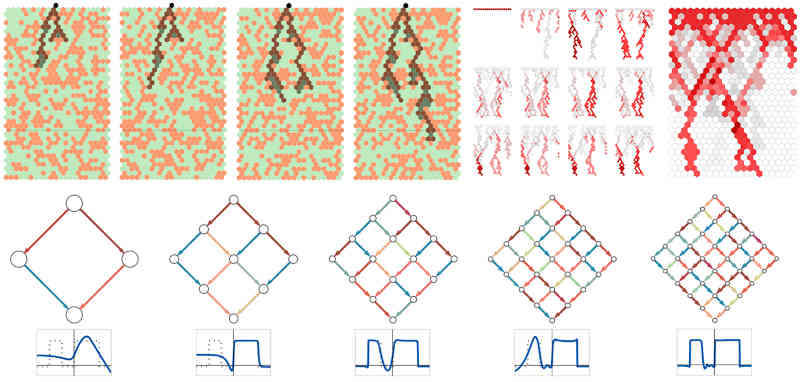

The really interesting part of this blog, although it doesn't really answer the question posed at the beginning, is where the neural network is replaced by a 1D cellular automaton. In this case, learning is done using a modified genetic algorithm. This algorithm is a discrete version of the optimization principle that works without gradients, since the concept of “derivatives” does not exist in discrete systems. Instead, the algorithm uses a random search of the response surface.

This is like going down a hill and trying each direction to see which one is “down” and then moving in that direction. You find the gradient by exploring the surrounding area.
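The "try a direction and see if it is down" idea can be sketched in a few lines. This is a simple mutate-and-keep search on a bit string, offered as an illustration of gradient-free optimization in general rather than as the exact procedure used in the post; the target pattern, the Hamming-distance loss, and the function names are all assumptions made for the example.

```python
import random


def hamming(a, b):
    """Number of positions at which two equal-length bit lists differ."""
    return sum(x != y for x, y in zip(a, b))


def mutate_and_keep(target, steps=2000, seed=0):
    """Flip one random bit at a time, keeping the change if it is not worse."""
    rng = random.Random(seed)
    state = [0] * len(target)
    loss = hamming(state, target)
    for _ in range(steps):
        i = rng.randrange(len(state))
        candidate = state.copy()
        candidate[i] ^= 1  # the discrete analogue of a small step
        new_loss = hamming(candidate, target)
        if new_loss <= loss:  # no derivative needed, just a comparison
            state, loss = candidate, new_loss
    return state, loss


target = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 1, 0, 0, 1, 0, 1]
state, loss = mutate_and_keep(target)
```

No derivative is ever computed. The search only needs to be able to evaluate the loss for a candidate and compare it with the current one.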

The next idea is to use a cellular automaton restricted to Boolean rules and modify these rules to achieve different behaviors. By selecting a set of rules and applying them in sequence, we get a kind of program that can be learned. The sequence is then trained using a genetic algorithm to arrive at a final result. Guess what? It works!
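As a rough sketch of what "applying rules in sequence" could look like, the code below runs a 1D cellular automaton in which each time step uses its own elementary (three-input Boolean) rule. The rule numbers 90 and 254, the wrap-around boundary, and the function names are illustrative assumptions and are not taken from the post, which may arrange its rules differently.

```python
def step(cells, rule):
    """Apply one elementary CA rule (0-255) to a row of 0/1 cells, wrapping at the edges."""
    n = len(cells)
    out = []
    for i in range(n):
        left, center, right = cells[i - 1], cells[i], cells[(i + 1) % n]
        # The three neighbors form a 3-bit index into the rule's truth table.
        index = left * 4 + center * 2 + right
        out.append((rule >> index) & 1)
    return out


def run(cells, rules):
    """Apply a sequence of rules, one per time step; the sequence acts as the 'program'."""
    for rule in rules:
        cells = step(cells, rule)
    return cells


start = [0, 0, 0, 1, 0, 0, 0]
after_one = step(start, 90)       # rule 90: XOR of the two outer neighbors
after_two = run(start, [90, 254])  # then rule 254: OR of all three cells
print(after_one)  # [0, 0, 1, 0, 1, 0, 0]
print(after_two)  # [0, 1, 1, 1, 1, 1, 0]
```

Learning then amounts to searching over the list of rules, for example by mutating one entry at a time and keeping changes that bring the output closer to the desired one.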

Again, the optimization principle and Ashby's law come together here. Are we any closer to understanding why neural networks are so effective? Not really, but it's fun.

We can also think of the “new” cellular automaton network as an example of a Kolmogorov-Arnold network (KAN). In a standard neural network, every neuron implements the same fixed function, and learning occurs by adjusting the weights feeding each one. The fact that such a network can learn to reproduce any function is a consequence of the universal approximation theorem, which says that even very simple functions can be combined and adjusted to approximate more general functions, provided that suitable functions are used. The Kolmogorov-Arnold representation theorem does something similar, but in this case we use a different function for each neuron. If we think of rules as the equivalent of functions, i.e. Boolean functions, we end up with a KAN.
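For reference, the two results can be written side by side. These are the standard textbook statements, given here as a hedged summary rather than anything quoted from the post; the symbols $\sigma$, $a_i$, $w_i$, $b_i$, $\Phi_q$ and $\phi_{q,p}$ are the usual notation and do not appear in the original article. The universal approximation theorem concerns sums of one fixed function $\sigma$ with adjustable weights:

$$
f(x) \approx \sum_{i=1}^{N} a_i \, \sigma(w_i \cdot x + b_i)
$$

The Kolmogorov-Arnold representation theorem instead writes a continuous function of $n$ variables exactly, using sums and compositions of one-variable functions that are themselves chosen to suit $f$:

$$
f(x_1, \dots, x_n) = \sum_{q=0}^{2n} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right)
$$

In the first form the function is fixed and only the weights are learned. In the second, the functions $\Phi_q$ and $\phi_{q,p}$ are what is learned, which is the sense in which a network of learnable Boolean rules resembles a KAN.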

The blog post then tries to relate all of this to the principle of universal computation, which roughly means that almost any non-trivial system is very likely Turing complete, i.e. it can compute anything computable. This is all true, and when you combine the principle of universal computation with the principle of optimization and Ashby's law, things start to make sense. While we're not close to understanding neural networks yet, the blog post is fun and thought-provoking to read. However, don't assume that everything you read is new and unrelated to other research.

The real problem with neural networks is not how they learn; this is easy to understand and even has a pretty good mathematical foundation. What is interesting is how deep neural networks represent the world they are trained on. Are real-world clusters, groups, etc. reflected in the structures of the trained networks? And do these structures allow for generalization? Answer these questions and you'll be well on your way.

More Information

What's really going on in machine learning? Some minimal models

Related articles

Neurons are smarter than we thought

Neurons are a two-layer network

Geoffrey Hinton receives Royal Society Premier's Medal

Is evolution better than backpropagation?

Why are deep networks better?

Hinton, LeCun and Bengio receive 2018 Turing Award

Deep Learning Wins

McCulloch-Pitts Neuron

DeepCoder Learns How to Write Programs

Automatic Generation of Regular Expressions Using Genetic Programming
