The Methods section is organised as follows: experimental methods includes the fabrication of samples, measurement of FMR response and the details of implementing reservoir computing and interconnecting arrays. Following this we discuss the tasks chosen and how they are evaluated in Task selection. Learning algorithms then provides a detailed description of the learning algorithms used in this work.

Experimental methods

Nanofabrication

Artificial spin reservoirs are fabricated via the electron-beam lithography liftoff method on a Raith eLine system with PMMA resist. 25 nm Ni81Fe19 (permalloy) is thermally evaporated and capped with 5 nm Al2O3. For WM, a staircase subset of bars are increased in width to reduce its coercive field relative to the thin subset, allowing independent subset reversal via global field. For PW, a variation in widths are fabricated across the sample by varying the electron-beam lithography dose. Within a 100 μm × 100 μm write-field, the bar dimensions remain constant. The flip-chip FMR measurements require mm-scale nanostructure arrays. Each sample has dimensions of roughly ~3 × 2 mm2. As such, the distribution of nanofabrication imperfections termed quenched disorder is of greater magnitude here than typically observed in studies on smaller artificial spin systems, typically employing 10–100 micron-scale arrays. The chief consequence of this is that the Gaussian spread of coercive fields is over a few mT for each bar subset. Smaller artificial spin reservoir arrays have narrower coercive field distributions, with the only consequence being that optimal applied field ranges for reservoir computation input will be scaled across a corresponding narrower field range, not an issue for typical 0.1 mT or better field resolution of modern magnet systems.

Magnetic force microscopy measurement

Magnetic force micrographs are produced on a Dimension 3100 using commercially available normal-moment MFM tips.

Ferromagnetic resonance measurement

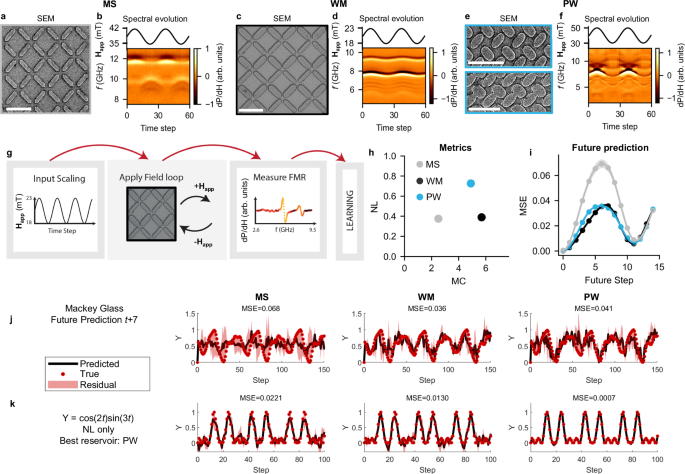

Ferromagnetic resonance spectra are measured using a NanOsc Instruments cryoFMR in a Quantum Design Physical Properties Measurement System. Broadband FMR measurements are carried out on large area samples (~3 × 2 mm2) mounted flip-chip style on a coplanar waveguide. The waveguide is connected to a microwave generator, coupling RF magnetic fields to the sample. The output from waveguide is rectified using an RF-diode detector. Measurements are done in fixed in-plane field while the RF frequency is swept in 20 MHz steps. The DC field are then modulated at 490 Hz with a 0.48 mT RMS field and the diode voltage response measured via lock-in. The experimental spectra show the derivative output of the microwave signal as a function of field and frequency. The normalised differential spectra are displayed as false-colour images with symmetric log colour scale.

Data input and readout

Reservoir computing schemes consist of three layers: an input layer, a hidden reservoir layer and an output layer corresponding to globally applied fields, the nanomagnetic reservoir and the FMR response, respectively. For all tasks, the inputs are linearly mapped to a field range spanning 35–42 mT for MS, 18–23.5 mT for WM and 30–50 mT for PW, with the mapped field value corresponding to the maximum field of a minor loop applied to the system. In other words, for a single data point, we apply a field at +Happ then −Happ. After each minor loop, the FMR response is measured at the applied field −Happ between 8 and 12.5 GHz, 5 and 10.5 GHz, and 5–10.5 GHz in 20 MHz steps for MS, WM, and PW, respectively. The FMR output is smoothed in frequency by applying a low-pass filter to reduce noise. Eliminating noise improves computational performance5. For each input data-point of the external signal s(t), we measure ≈300 distinct frequency channels and take each channel as an output. This process is repeated for the entire dataset with training and prediction performed offline.

Interconnecting arrays

When interconnecting arrays, we first input the original Mackey-Glass or sinusoidal input into the first array via the input and readout method previously described. We then analyse the memory and NL of each individual frequency output channel (described later). A particular frequency channel of interest is converted to an appropriate field range. The resulting field sequence is then applied to the next array via the computing scheme previously described. This process is then repeated for the next array in the network. The outputs from every network layer are concatenated for learning.

Task selection

Throughout this manuscript, we focus on temporally driven regression tasks that require memory and NL. Considering a sequence of T inputs \(\left[{\mathbf{s}}(1),\, {\mathbf{s}}(2),\, \ldots,\, \mathbf{s}({{\rm{T}}})\right]\), the physical system response is a series of observations \(\left[{\mathbf{o}}(1),{\mathbf{o}}(2),\ldots,\mathbf{o}({{\rm{T}}})\right]\) across time. These observations can be gathered from a single reservoir configuration, as in Fig. 1, or can be a collection of activities from multiple reservoirs, in parallel or interconnected, as in Fig. 2. In other words, the response of the system o(t) at time t is the concatenation of the outputs of the different reservoirs used in the architecture considered. The tasks faced can be divided into five categories:

Sine transformation tasks

The system is driven by a sinusoidal periodic input \(s

Algorithm 1

Hyperparameter selection

for each split in outer loop i do

for each split in inner loop j do

for each θ ∈ Θ, λ ∈ Λ do

Find optimal weights: \({\mathbf{W}}_{o}^{*}={{\arg }\,{\min }}_{{\mathbf{W}}_{o}} {{\rm{E}}}\left\{{{\rm{Tr}}}_{{\rm{ij}}} {|} {{\mathbf{W}}}_{o} ,\, \theta,\, \lambda\right\}\)

Compute error on the test set \({{\rm{E}}}\{{{{\mathcal{T}}}}_{{\rm{ij}}}^{\prime}| {{{\mathbf{W}}}}_{o}^{*},\theta,\lambda \}\)

end for

Select optimal hyperparameters as \(\theta_{i}^{*},\, \lambda_{i} ^{*}={{\arg }\,{\min }}_{\theta \in {\Theta},\ \lambda \in {\Lambda}} {\sum}_{j=1}^{10} {{\rm{E}}}\{ {{\mathcal{T}}}_{{\rm{ij}}}^{\prime}|{{\mathbf{W}}}_{o} ^{*},\, \theta,\, \lambda \}\)

end for

Find the corresponding boolean vector \({\theta }_{i}^{ * }\to {{{\bf{f}}}}^{(i)}\)

end for

Algorithm 2

Evolutionary Algorithm

for for each split in outer loop i do

Initialise parents \({{{\bf{F}}}}_{p}=\left\{{{\bf{f}}}(n)\right\}={{{\bf{f}}}}^{(i)}\)

repeat

Apply crossover and mutations to generate children Fc

for each split in inner loop j do

for each f ∈ Fc do

Find optimal weights: \({{\mathbf{W}}}_{o} ^{*}={{\arg }\,{\min }}_{{{\mathbf{W}}}_o } {{\rm{E}}}\left\{ {{\rm{Tr}}}_{{\rm{ij}}}|{{\mathbf{W}}}_{o},\, {\textbf{f}},\, \lambda^{ * }_{i}\right\}\)

end for

end for

Select new Fp as the \({{\bf{f}}}^{\prime} \) with minimal \({\sum }_{j=1}^{10}{{\rm{E}}}\left\{{{{\mathcal{T}}}}_{{\rm{ij}}}^{\prime}| {{{\mathbf{W}}}}_{o} ^{*},\, {{\bf{f}}},\, {\lambda }_{i}^{ * }\right\}\)

until best performance on \({{{\mathcal{V}}}}_{i}\)

end for

We now describe the feature selection methodology in detail. Considering the response of a system across time o(t), the feature selection algorithm aims to select a subset of features x(t) that will be used for training and evaluation. We can consider feature selection as a boolean operation over the o(t) feature space, where a value of one (zero) corresponds to the considered feature being used (neglected). If m is the dimensionality of o(t), the number of possible ways to define x(t) is 2m−1. As a consequence, the feature selection algorithm can also lead to overfitting. Therefore, we must implement an additional cross-validation step to ensure the performance of the selected feature is general across the entire dataset.

Considering a specific split of the outer validation loop (Fig. 6), where we select a validation set \({{{\mathcal{V}}}}_{i}\) and a test set \({{{\mathcal{T}}}}_{i}\) (comprising of the 10% of the data each, i.e., 25 data points), we perform 10 cross-validations on the remaining data to optimise hyperparameter values through grid-search. In this inner validation loop, each split corresponds to a test set \({{{\mathcal{T}}}}_{ij}^{{\prime} }\) (comprising again of the 10% of the remaining data, without \({{{\mathcal{V}}}}_{i}\) and \({{{\mathcal{T}}}}_{i}\)), where changing j means to select a different test-split in the inner loop based on the i-th original split of the outer validation (Fig. 6). The remaining data, highlighted in green in Fig. 6, are used for training to optimise the readout weights and minimise the error function E through ridge-regression as previously described.

At this stage, we perform a grid-search methodology on hyperparameters θ and λ which control directly and indirectly the number of features being adopted for training (Algorithm 1). The hyperparameter θ acts as a threshold on the correlation matrix of the features. Simply, if the correlation among two features exceeds the specific value of θ considered, one of these two features is removed for training (and testing). The idea behind this method is to discard features that are highly correlated, since they would contribute in a similar way to the output. This emphasises diversity in the reservoir response. The hyperparameter λ is the penalty term in ridge-regression. Higher values of λ lead to a stronger penalisation on the magnitude of the readout weights. As such, λ can help prevent overfitting and controls indirectly the number of features being adopted. We should use a high value of λ if the model is more prone to overfitting the training dataset, a case that occurs when the number of features adopted is high. Calling \({{\rm{E}}}\left\{{{{\mathcal{T}}}}_{ij}^{{\prime}}| {{{\mathbf{W}}}}_{o} ^{*},\theta,\lambda \right\}\) the error computed on the test set \({{{\mathcal{T}}}}_{ij}^{{\prime} }\) with weights \({{{\bf{W}}}}_{o} ^{*}\) optimised on the corresponding training data (\({\mathbf{W}}_{o} ^{*}={{\arg }\,{\min}}_{{\mathbf{W}}_{o}} {\rm{E}} \left\{ {\rm{Tr}}_{ij}|{\mathbf{W}}_{o},\theta,\lambda \right\}\) in Algorithm 1 and with hyperparameter values θ and λ, respectively, we select the values of the hyperparameters that correspond to the minimum average error over the test sets in the inner validation loop. Otherwise stated, we select the optimal \({\theta }_{i} ^{ \ast}\) and \({\lambda }_{i} ^{\ast}\) for the i-th split in the outer loop from the test average error in the inner 10 cross-validation as \(\theta_{i}^{*},\lambda_{i}^{*}={{\arg }\,{\min }}_{\theta \in {{\Theta}},\ \lambda \in {{\Lambda}}} {\sum}_{j=1}^{10} {{\rm{E}}}\left\{ {{\mathcal{T}}}_{ij}’|{{\mathbf{W}}}_{o} ^{*},\theta,\lambda \right\}\). This methodology permits to find \({\theta }_{i}^{*}\) and \({\lambda }_{i}^{*}\) that are not strongly dependent on the split considered, while maintaining the parts of the dataset \({{{\mathcal{V}}}}_{i}\) and \({{{\mathcal{T}}}}_{i}\) unused during training and hyperparameter selection. The sets Θ and Λ correspond to the values explored in the grid-search. In our case, Θ = {1, 0.999, 0.99, 0.98, 0.97, …} and Λ = {1e − 4, 1e − 3, 1e − 2, 5e − 2, 1e − 1}. Repeating this procedure for each split of the outer loop, we found the optimal \({\theta }_{i}^{*}\) and \({\lambda }_{i}^{*}\), for i = 1, …, 10. This concludes the hyperparameter selection algorithm described in Algorithm 1. Selection of the hyperparameters \({\theta }_{i}^{*}\) permit to find subsets of features based on correlation measures. However, promoting diversity of reservoir measures does not necessarily correspond to the highest performance achievable. Thus, we adopted an evolutionary algorithm to better explore the space of possible combination of measurements (Algorithm 2).

It is necessary now to notice how a value of \({\theta }_{i}^{\ast}\) corresponds to a m-dimensional boolean vector f (i), whose j-th dimension is zero if its j-th feature \({f}_{j}^{(i)}\) is correlated more than \({\theta }_{i}^{*}\) with at least one other output. For each split i in the outer loop, we adopted an evolutionary algorithm that operates over the m-dimensional boolean space of feature-selection, where each individual corresponds to a specific vector f. At each evolutionary step, we perform operations of crossover and mutation over a set of Np parents \({{\mathbf{F}}}_p={ \big\{ {{\mathbf{f}}}(n) \big\}}_{n=1,{\ldots},{{\rm{N}}}_p}\). For each split i of the outer loop and at the first evolutionary step, we initialised the parents of the algorithm to f(i). We defined a crossover operation among two individuals f(i) and f(j) as \({{\bf{f}}}={{\rm{CrossOver}}}\big({{\bf{f}}}(i),{{\bf{f}}}(j)\big)\) where the k-th dimension of the new vector f is randomly equal to \({f}_{k}^{(i)}\) or \({f}_{k}^{(\;j)}\) with the same probability. A mutation operation of a specific f(i) is defined as \({{\bf{f}}}={{\rm{Mutation}}}({{\bf{f}}}(i))\) by simply applying the operator not to each dimension of f(i) with a predefined probability pm. The application of crossovers and mutations permits the definition of a set of Nc children \({\mathbf{F}}_{c}=\left\{ {\mathbf{f}}(n) \right\}_{n=1,\ldots,{\rm{N}}_{c}}\) from which we select the Np models with the highest performance over the test sets of the inner loop as parents for the next iteration. Otherwise stated, we selected the Fp vectors corresponding to the lowest values of the average error as \({{\mathbf{F}}}_p={{{\arg}\, {\min}}_{ {\mathbf{f}} \in {\mathbf{F}}_c }^{\rm{Np}}}{\sum}_{j=1}^{10} {{\rm{E}}}\{ {{\mathcal{T}}}_{ij}^{\prime}|{{\mathbf{W}}}_{o} ^{*},{{\mathbf{f}}},\lambda_i^{*}\}\) where \({\arg\min}^{{\rm{Np}}}\) selects the Np arguments of the corresponding function with minimal values. We notice how a step of the evolutionary approach aims to minimise an error estimated in the same fashion as in the algorithm of Algorithm 1, but this time searching for the best performing set Fp, rather then the best performing couple of hyperparameter values \({\lambda }_{i}^{ * }\) and \({\theta }_{i}^{ * }\).

Finally, we stop the evolutionary algorithm at the iteration instance where the average performance of Fp over \({{{\mathcal{V}}}}_{i}\) is at minimum and selected the model \({{{\bf{f}}}}_{i} ^{*}\) with the lowest error on \({{{\mathcal{V}}}}_{i}\). The utilisation of a separate set \({{{\mathcal{V}}}}_{i}\) for the stop-learning condition is necessary to avoid overfitting of the training data. Indeed, it is possible to notice how the performance on \({{{\mathcal{V}}}}_{i}\) would improve for the first iterations of the evolutionary algorithm and then become worse. This concludes the evolutionary algorithm in Algorithm 2. At last, the overall performance of the model is computed as the sum of the mean-squared errors over the outer validation loop as \({{\mathcal{E}}}={\sum }_{i=1}^{10}{{\rm{E}}}\{{{{\mathcal{T}}}}_{i}| {{{\mathbf{W}}}}_{o} ^{*},{{{\bf{f}}}}_{i} ^{*},{\lambda }_{i} ^{*}\}\). Summarising, we can think the overall methodology as an optimisation of relevant hyperparameters followed by a fine-tuning of the set of features used through an evolutionary algorithm. The final performance and its measure of variation reported in the paper are computed as average and standard deviation over ten repetitions of the evolutionary algorithm, respectively.

Evaluating overparameterisation

To explore the effects of overparameterisation it is necessary to vary the number of network parameters (i.e., number of FMR channels). This is achieved as follows: first, a set of tasks is prepared for which the MSE will be evaluated. Here, we analyse performance when predicting future values of the Mackey-Glass equation. We average MSE when predicting t + 1, t + 3, t + 5, t + 7, t + 9 and t + 11. Next, we vary the number of parameters. To do so, we randomly shuffle a sequence of integers from 0 to N, where N is the total number of output channels for a given network e.g., N = (328, 34, 273…). From this, we select the first P points of the sequence, giving a list of indexes referring to which channels to include in that sequence. For those channels, we perform a 5 cross-validation of different train and test splits and use linear regression to evaluate the train and test MSE for a given task and set of outputs. P is increased in steps of N/500 for single, parallel, and series networks and steps of N/5000 for the PNN. For a given task, we repeat this process for 50 random shuffles to ensure we are sampling a broad range of output combinations for each network. The displayed MSEs are the average and standard error of these 50 trials over all tasks.

Meta-learning

Algorithm 3

Meta-learning through MAML

Sample a batch of tasks from \(p({{\mathcal{T}}})\)

for each task i do

Sample K datapoints of device responses \({{{\mathcal{D}}}}_{i}=\left\{\ldots,\, (\tilde{\mathbf{y}}({t}_{k}),\,{\mathbf{o}}({\rm{t}}_{\mathbf{k}})),\ldots \right\}\), k = 1, …, K

for number of inner loop steps \(n=1,\ldots,\, \tilde{n}\) do

y(tk) = f(o(tk)∣αi(n), Wo)

\({{{\boldsymbol{\alpha }}}}_{i}(n+1)={{\boldsymbol{\alpha}}_{i} (n) }-{\eta }_{1}{\boldsymbol{\nabla} }_{{{{\boldsymbol{\alpha }}}}_{i}(n)}{{{\rm{E}}}}_{{{{\mathcal{T}}}}_{i}}\left({{{\mathcal{D}}}}_{i}| {{{\boldsymbol{\alpha }}}}_{i}(n),\, {{{\bf{W}}}}_{o}\right)\)

Sample Q datapoints of devices response \({{\mathcal{D}}}^{{\prime} }_{i}=\{\ldots,\, (\tilde{\mathbf{y}}({t}_{q}),{\mathbf{o}}({\rm{t}}_{\mathbf{q}})),\ldots \}\), q = 1, …, Q for the meta-update

end for

Perform the meta-update

\({{{\bf{W}}}}_{o}\leftarrow {{{\bf{W}}}}_{o}-{\eta }_{2}{\boldsymbol{\nabla} }_{{{{\bf{W}}}}_{o}}\mathop{\sum}_{i}{{{\rm{E}}}}_{{{{\mathcal{T}}}}_{i}}\left({{\mathcal{D}}}^{{\prime} }_{i}| {{{\boldsymbol{\alpha }}}}_{i}(\tilde{n}),\, {{{\bf{W}}}}_{o}\right)\)

\({{\boldsymbol{\alpha }}}(0)\leftarrow {{\boldsymbol{\alpha }}}(0)-{\eta }_{2}{\boldsymbol{\nabla}}_{{{\boldsymbol{\alpha }}}(0)}{\sum}_{i}{{{\rm{E}}}}_{{{{\mathcal{T}}}}_{i}}\left({{\mathcal{D}}}^{{\prime} }_{i}| {{{\boldsymbol{\alpha }}}}_{i}(\tilde{n}),\, {{{\bf{W}}}}_{o}\right)\)

end for

The goal of meta-learning is optimise an initial state for the network such that when a new task with limited data points is presented, the network can be quickly updated to give strong performance. A schematic of the meta-learning algorithm is presented in Fig. 7 and pseudo-code shown in Algorithm 3.

The input signal is applied to the multilayer neural network, and the observations are recorded. The system is then trained to learn a subset of frequency decomposition with minimal training data.

Let us consider a family of M tasks \({{\mathcal{T}}}=\{{{{\mathcal{T}}}}_{1},{{{\mathcal{T}}}}_{2},\ldots,{{{\mathcal{T}}}}_{{{\rm{M}}}}\}\). Each task is composed of a dataset and a cost function \({{{\rm{E}}}}_{{{{\mathcal{T}}}}_{i}}\). A meta-learning algorithm is trained on a subset of \({{\mathcal{T}}}\) and asked to quickly adapt and generalise on a new test subset of \({{\mathcal{T}}}\). Otherwise stated, the aim of meta-learning is to find an initial set of parameters W(0) that permits learning of an unseen task \({{{\mathcal{T}}}}_{i}\) by updating the model over a small number of data points from \({{{\mathcal{T}}}}_{i}\). To achieve this, we use a variation of the MAML algorithm, which is now summarised. For a given task \({{{\mathcal{T}}}}_{i}\), the initial set of trainable parameters W(0) are updated via gradient descent over a batch of data points \({{{\mathcal{D}}}}_{i}=\{\ldots,({{{\bf{o}}}}_{j},{\tilde{{{\bf{y}}}}}_{j}),\ldots \}\), where oj and \({\tilde{{{\bf{y}}}}}_{j}\) are the inputs and targets for the j-th data point respectively

$${{{\bf{W}}}}_{i}(n)={{{\bf{W}}}}_{i}(n-1)-{\eta }_{1}{\boldsymbol{\nabla} }_{{{{\bf{W}}}}_{i}(n-1)}{{{\rm{E}}}}_{{{{\mathcal{T}}}}_{i}}\left({{{\mathcal{D}}}}_{i}| {{{\bf{W}}}}_{i}(n-1)\right)$$

(1)

where η1 is the learning rate adopted. Eq.(1) is repeated iteratively for \(n=0,\ldots,\tilde{n}\), where \(\tilde{n}\) are the number of updates performed in each task. We notice how the subscripts i on the parameters W are introduced because the latter become task-specific after updating, while they all start from the same values W(0) at the beginning of a task. MAML optimises the parameters W(0) (i.e., the parameters used at the start of a task, before any gradient descent) through the minimisation of cost functions sampled from \({{\mathcal{T}}}\) and computed over the updated parameters \({{\bf{W}}}(\tilde{n})\) i.e., the performance of a set of W(0) is evaluated based on the resulting \({{\bf{W}}}(\tilde{n})\) after gradient descent. Mathematically, the aim is to find the optimal W(0) that minimises the meta-learning objective \({{\mathcal{E}}}\)

$${{\mathcal{E}}}=\sum\limits_{{{\mathcal{T}}}}{{{\rm{E}}}}_{{{{\mathcal{T}}}}_{i}}({{\mathcal{D}}}^{{\prime} }_{i}| {{{\bf{W}}}}_{i}(\tilde{n}))$$

(2)

$${{\bf{W}}}(0)\leftarrow {{\bf{W}}}(0)-{\eta }_{2}{{{\boldsymbol{\nabla }}}}_{{{\bf{W}}}(0)}\sum\limits_{i}{{{\rm{E}}}}_{{{{\mathcal{T}}}}_{i}}\left({{\mathcal{D}}}^{{\prime} }_{i}| {{{\bf{W}}}}_{i}(\tilde{n})\right)$$

(3)

where the \({{\mathrm{apex}}^{\prime}}\) is adopted to differentiate the data points used for the meta-update from the inner loop of Eq. (1), and η2 is the learning rate for the meta-update. Gradients of \({{\mathcal{E}}}\) need to be computed with respect to W(0), and this results in the optimisation of the recursive Eq.(1) and the computation of higher-order derivatives. In our case, we use the first-order approximation of the algorithm31. After the meta-learning process, the system is asked to learn an unseen task \({{{\mathcal{T}}}}_{j}\) updating W(0) through the iteration of Eq.(1) computed on a small subset of data of \({{{\mathcal{D}}}}_{j}\).

In contrast to previous works, we adopted this learning framework on the response of a physical system. The optimisation occurs exclusively at the readout level, leaving the computation of non-linear transformations and temporal dependencies to the physical network. The outcome of our application of meta-learning, depends on the richness of the dynamics of the nanomagnetic arrays. In this case, the readout connectivity matrix is not task-specific as in previous sections, we use the same W(0) for different tasks.

We decompose the parameters of the system W into two sets of parameters {Wo, α} to exploit few-shot learning on the readout from the PNN. The output of the system is defined as y(t) = f(o(t)∣α, Wo), where the function \(f\) is linear and follows \(f({{\bf{o}}}

(4)

while the parameters Wo are optimised through the meta-learning objective via

$$\begin{array}{r}{{{\bf{W}}}}_{o}\leftarrow {{{\bf{W}}}}_{o}-{\eta }_{2} \sum\limits_{j}{{{\rm{E}}}}_{{{{\mathcal{T}}}}_{j}}({{{\mathcal{D}}}}_{j}| {\boldsymbol{\alpha} }_{j}(\tilde{n}))\end{array}$$

(5)

In this way, training of the parameters Wo is accomplished after appropriate, task-dependent scaling of the output activities. A pseudo-code of the algorithm is reported in Fig. 7.

Echo state network comparison

The Echo-state network of Fig. 2g–i is a software model defined through

$$\begin{array}{r}{{\bf{x}}}(t+\delta t)=(1-\delta t/ \tau ){{\bf{x}}}

(6)

where x is the reservoir state, s is the input, Wesn and Win are fixed and random connectivity matrices defined following standard methodologies63 and τ is a scaling factor. In particular, the eigenvalues of the associated, linearised dynamical system are rescaled to be inside the unit circle of the imaginary plane. Training occurs on the readout level of the system. Echo-state networks and their spiking analogous liquid-state machines64 are the theoretical prototypes of the reservoir computing paradigm. Their performance can thus constitute an informative reference for the physically defined networks. We highlight two differences when making this comparison: first, the ESN is defined in simulations and it is consequently not affected by noise; second, the physically defined network has a feed-forward topology, where the memory of the system lies in the intrinsic dynamics of each complex node and the connectivity is not random but tuned thanks to the designed methodology.

For the results of Fig. 2, we evaluate the performance of a 100-node ESN. For each value of the dimensionality explored, we repeated the optimisation process ten times resampling the random Win and Wesn. The black dots in Fig. 2 report the average performance across these repetitions as the number of nodes varies. The black line reflects a polynomial fit of such results for illustrative purposes, while the grey area reflects the dispersion of the distributions of the results.

Multilayer perceptron comparison

The multilayer perceptron (MLP) comparison in the supplementary information is defined as follows. We have an MLP with four layers: an input layer, two hidden layers interconnected with a ReLu activation function and an output layer. We vary the size of the hidden layers from 1 to 500. Standard MLP’s do not have any recurrent connections and therefore do not hold information about previous states, hence predictive performance is poor. We add false memory by providing the network with the previous Tseq data points as input i.e., \(\tilde{{{\mathbf{s}}}}

Source link