Hugging Face has recently made a major contribution to cloud computing with the introduction of Hugging Face Deep Learning Containers for Google Cloud. This development marks a major step forward for developers and researchers who want to leverage cutting-edge machine learning models more easily and efficiently.

Streamlined Machine Learning Workflow

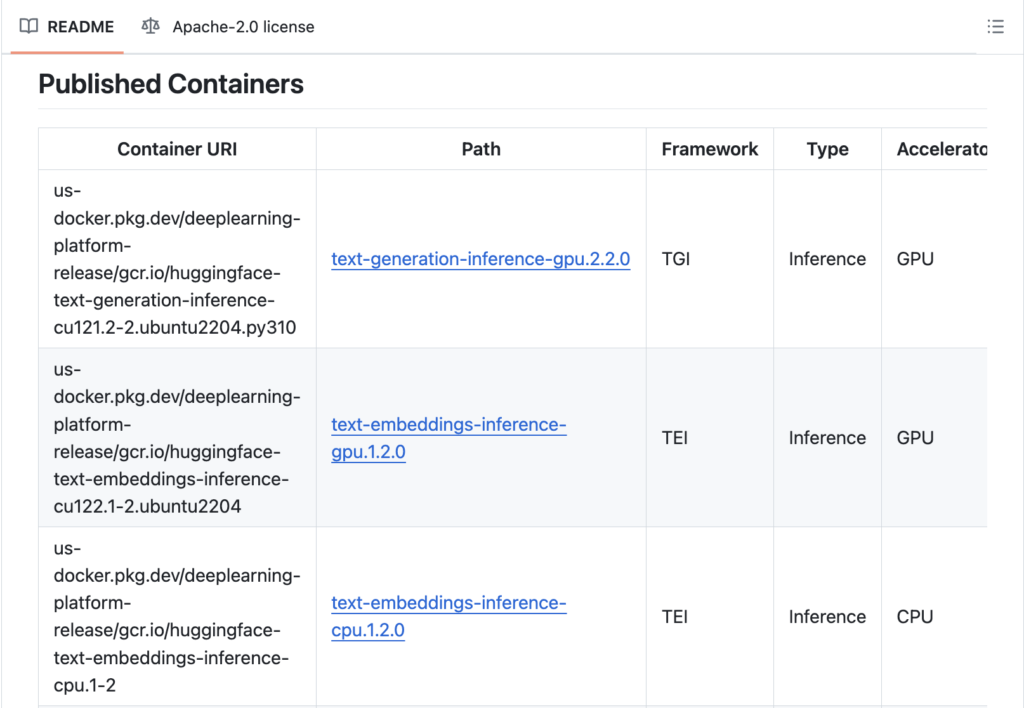

Hugging Face Deep Learning Containers are pre-configured environments designed to simplify and accelerate the process of deploying and training machine learning models on Google Cloud. These containers include the latest versions of popular ML libraries, including TensorFlow, PyTorch, and the Hugging Face “transformers” library. Using these containers, developers can avoid the complex and time-consuming task of setting up and configuring environments, allowing them to focus on developing and experimenting with their models.
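One quick way to see what a given environment actually ships with is to query the installed package metadata. The snippet below is a minimal sketch using only the Python standard library; the list of package names is an assumption for illustration, not an inventory published for these containers.

```python
from importlib import metadata

# Distribution names as published on PyPI. This list is an assumption for
# illustration; adjust it to the libraries you expect in your environment.
LIBRARIES = ("torch", "tensorflow", "transformers")


def installed_versions(names=LIBRARIES):
    """Map each distribution name to its installed version, or None if absent."""
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name}: {version or 'not installed'}")
```

Running this inside and outside a container makes any version differences visible before they show up as hard-to-trace bugs.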

One of the key benefits of these containers is their seamless integration with the Google Cloud ecosystem, allowing users to easily deploy models to Google Kubernetes Engine (GKE), Vertex AI, and other cloud-based infrastructure services offered by Google. This integration gives developers access to scalable, high-performance computing resources, enabling them to run large-scale experiments and deploy models in production with minimal effort.

Performance Optimization

Performance optimization is another key feature of the Hugging Face Deep Learning Containers. These containers are designed to take full advantage of Google Cloud's underlying hardware, including GPUs and TPUs. This is useful for computationally intensive tasks such as training deep learning models and fine-tuning pre-trained models on large datasets.
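Whether an accelerator is actually visible to the framework is worth checking before starting a long job. The following is a minimal sketch that assumes PyTorch as the framework and covers GPUs only; TPU access typically goes through a separate package and is not handled here. The helper name `pick_device` is hypothetical.

```python
def pick_device():
    """Return "cuda" when PyTorch can see a GPU, otherwise "cpu".

    Hypothetical helper: falls back to "cpu" if PyTorch is not installed.
    """
    try:
        import torch
    except ImportError:
        return "cpu"  # PyTorch is missing, so there is nothing to accelerate
    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    print(f"Selected device: {pick_device()}")
```

A model or tensor can then be moved with `.to(pick_device())`, so the same script runs on GPU-backed and CPU-only machines.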

In addition to hardware optimizations, the containers also include several software-level improvements. For example, they come pre-installed with an optimized version of Hugging Face's “Transformers” library, which gives access to models fine-tuned for specific tasks such as text classification, summarization, and translation. These optimized models can significantly reduce the time required for training and inference, letting developers get results sooner and iterate on their projects faster.

Enhanced Collaboration and Reproducibility

Collaboration and reproducibility are key aspects of machine learning projects, especially in research and development environments. Hugging Face Deep Learning Containers are designed with these needs in mind. By providing a consistent, reproducible environment across different stages of a project, from development to deployment, these containers ensure results are consistent and easily shareable with colleagues and collaborators.
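A consistent environment removes one source of variation between runs; random number generators are another. The article does not discuss seeding, so the sketch below is an addition under that assumption: a hypothetical `set_seed` helper that seeds Python's standard generator and, when they happen to be installed, NumPy and PyTorch.

```python
import random


def set_seed(seed):
    """Seed the random number generators that are available in this environment."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed; nothing to seed
    try:
        import torch
        torch.manual_seed(seed)
    except ImportError:
        pass  # PyTorch not installed; nothing to seed


if __name__ == "__main__":
    set_seed(42)
    first = random.random()
    set_seed(42)
    # Reseeding with the same value reproduces the same draw.
    print(first == random.random())
```

Seeding does not guarantee bit-identical results on every kind of hardware, but it makes shared experiments much easier to compare.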

Additionally, these containers support the use of GitHub and other version control systems, making it easier for teams to collaborate on code, track changes, and maintain a clear project history. This preserves the integrity of the codebase, which is essential for long-term project success.

Simplified Model Deployment

Deploying machine learning models in production is complex, often requiring multiple steps and a variety of tools. Hugging Face Deep Learning Containers simplify this process by providing a ready-to-use environment that is seamlessly integrated with Google Cloud's deployment services. Whether developers are looking to deploy models for real-time inference or set up a batch processing pipeline, these containers provide the tools and libraries needed to complete the job quickly and efficiently.

The containers support model deployment using Hugging Face's Model Hub, a repository of pre-trained models that can be easily fine-tuned and deployed for various tasks. This feature allows developers to leverage the extensive library of models available in Model Hub, reducing the time and effort required to build and deploy machine learning solutions.
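As a rough illustration of what a Hub-backed deployment to Vertex AI might look like, the sketch below builds the arguments for `aiplatform.Model.upload` from the `google-cloud-aiplatform` SDK. Several parts are assumptions rather than facts from the article: the helper names are hypothetical, the image URI is a placeholder you must replace, and the `HF_MODEL_ID` and `HF_TASK` environment variables should be checked against the documentation for the specific container image you use.

```python
# Placeholder only: substitute the real container image URI from the docs.
PLACEHOLDER_IMAGE_URI = "<region>-docker.pkg.dev/<project>/<repo>/<image>:<tag>"


def build_upload_kwargs(model_id, task, image_uri=PLACEHOLDER_IMAGE_URI):
    """Assemble keyword arguments for aiplatform.Model.upload (hypothetical helper)."""
    return {
        # Derive a display name from the Hub model ID.
        "display_name": model_id.replace("/", "--"),
        "serving_container_image_uri": image_uri,
        # Assumed variable names; verify them for your container image.
        "serving_container_environment_variables": {
            "HF_MODEL_ID": model_id,
            "HF_TASK": task,
        },
    }


def upload_model(kwargs):
    """Register the model on Vertex AI. Requires google-cloud-aiplatform,
    valid credentials, and a prior aiplatform.init(project=..., location=...)."""
    from google.cloud import aiplatform
    return aiplatform.Model.upload(**kwargs)


if __name__ == "__main__":
    kwargs = build_upload_kwargs(
        "distilbert-base-uncased-finetuned-sst-2-english",
        "text-classification",
    )
    print(kwargs["display_name"])
```

Keeping the argument construction separate from the cloud call means the configuration can be reviewed and tested without credentials; the returned model object can then be deployed to an endpoint with its `deploy` method.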

Conclusion

The introduction of Hugging Face Deep Learning Containers for Google Cloud marks a major advancement in the field of machine learning. These containers address many of the challenges developers and researchers face when working with complex machine learning workflows by providing a pre-configured, optimized, and scalable environment for deploying and training models. Their integration with Google Cloud's robust infrastructure, performance enhancements, and collaboration capabilities make them an invaluable tool for anyone looking to accelerate their machine learning projects and achieve better results in less time.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His latest endeavor is the launch of Marktechpost, an artificial intelligence media platform. The platform stands out for its in-depth coverage of machine learning and deep learning news in a manner that is technically accurate yet easily understandable to a wide audience. The platform enjoys over 2 million views every month, indicating its popularity among the audience.
