Experiment 1: incentives improve accuracy and reduce bias

In experiment 1, we recruited a politically balanced sample of 462 US adults via the survey platform Prolific Academic55. Participants were shown 16 pre-tested news headlines with an accompanying picture and source (similar to how a news article preview would show up on someone’s Facebook feed). In a pre-test, eight headlines (four false and four true) were rated as more accurate by Democrats than Republicans, and eight headlines (four false and four true) were rated as more accurate by Republicans than Democrats56. An example of a Democrat-leaning true headline was ‘Facebook removes Trump ads with symbols once used by Nazis’ from apnews.com, and an example of a Democrat-leaning false news headline was ‘White House Chef Quits because Trump Has Only Eaten Fast Food For 6 Months’ from halfwaypost.com. After seeing each headline, participants were asked ‘To the best of your knowledge, is the claim in the above headline accurate?’ and were then asked ‘If you were to see the above article on social media, how likely would you be to share it?’ For more details, see Methods.

Half of the participants were randomly assigned to the ‘accuracy incentives’ condition. In this condition, participants were told they would receive a small bonus payment of up to one US dollar based on how many correct answers they could provide regarding the accuracy of the articles. The other half of participants were assigned to a ‘control’ condition in which they were asked the same questions about accuracy and sharing without any incentive to be accurate.

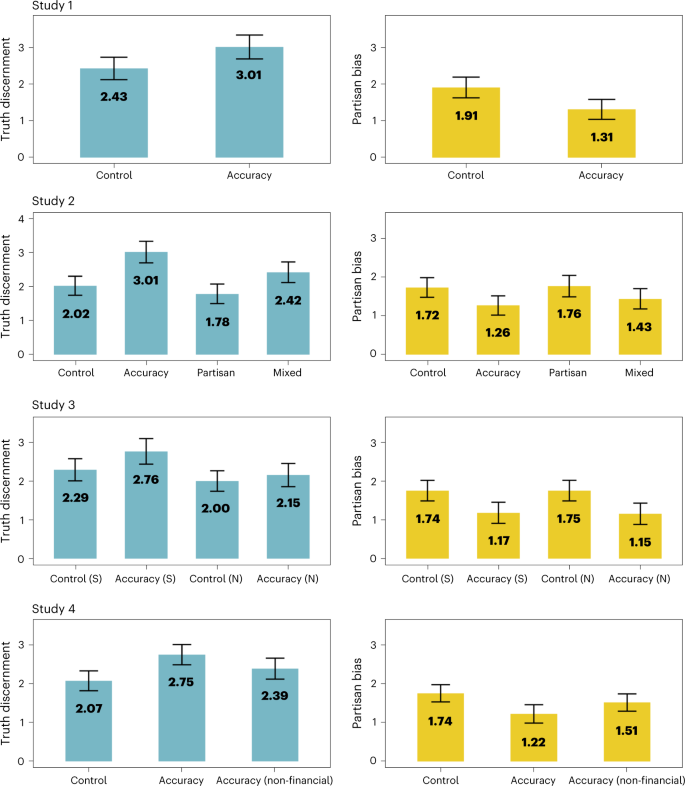

We first examined whether accuracy incentives improved truth discernment, or the number of true headlines participants rated as true minus the number of false headlines participants rated as true15. As predicted, participants in the accuracy incentives condition (mean (M) = 3.01, 95% confidence interval (CI) 2.68–3.34) were better at discerning truth than those in the control condition (M = 2.43, 95% CI 2.12–2.73), t(457.64) = 2.58, P = 0.010, d = 0.24. In other words, participants answered 11.01 (out of 16) questions correctly in the accuracy incentives condition, as opposed to 10.43 (out of 16) questions in the control condition.
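The link between the discernment score and the number of correct answers can be made explicit with a small sketch. It assumes eight true and eight false headlines per participant, as in the pre-test described above; the function names and the example participant are hypothetical and are not taken from the authors' analysis code.

```python
from typing import Sequence


def truth_discernment(rated_true: Sequence[bool], is_true: Sequence[bool]) -> int:
    """True headlines rated as true minus false headlines rated as true."""
    hits = sum(1 for r, t in zip(rated_true, is_true) if t and r)
    false_alarms = sum(1 for r, t in zip(rated_true, is_true) if not t and r)
    return hits - false_alarms


def number_correct(rated_true: Sequence[bool], is_true: Sequence[bool]) -> int:
    """Headlines whose rating matches their actual veracity."""
    return sum(1 for r, t in zip(rated_true, is_true) if r == t)


# Hypothetical participant: 8 true headlines followed by 8 false headlines.
is_true = [True] * 8 + [False] * 8
# Rates 6 of the true headlines and 3 of the false headlines as true.
rated_true = [True] * 6 + [False] * 2 + [True] * 3 + [False] * 5

discernment = truth_discernment(rated_true, is_true)
correct = number_correct(rated_true, is_true)

# With 8 false headlines, correct = hits + (8 - false alarms) = 8 + discernment.
assert correct == 8 + discernment
```

Under this assumption, a mean discernment score of 3.01 corresponds to 8 + 3.01 = 11.01 correct answers out of 16, and 2.43 corresponds to 10.43.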

We next examined whether incentives decreased partisan bias, or the number of politically congruent headlines participants rated as true minus the number of politically incongruent headlines participants rated as true. This measurement of partisan bias follows recommendations from prior work15,57, yet we discuss alternative ways to measure partisan bias and debates about the term ‘partisan bias’58 in Supplementary Appendix 1. We also re-analysed our data using an alternate measure of partisan bias in Supplementary Appendix 1 and found no changes to our main conclusions.

As predicted, partisan bias, or one’s belief in politically congruent over politically incongruent claims, was 31% smaller in the accuracy incentives condition (M = 1.31, 95% CI 1.04–1.58) as compared with the control condition (M = 1.91, 95% CI 1.62–2.19), t(495.8) = 3.01, P = 0.003, d = 0.28. Results from all four studies are plotted visually in Fig. 1.
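As an illustration of how the partisan bias score and the percentage reduction can be computed, consider the following sketch. The helper names are hypothetical; only the two condition means come from the text.

```python
from typing import Sequence


def partisan_bias(rated_true: Sequence[bool], is_congruent: Sequence[bool]) -> int:
    """Congruent headlines rated as true minus incongruent headlines rated as true."""
    congruent = sum(1 for r, c in zip(rated_true, is_congruent) if c and r)
    incongruent = sum(1 for r, c in zip(rated_true, is_congruent) if not c and r)
    return congruent - incongruent


def percent_reduction(control_mean: float, treatment_mean: float) -> float:
    """Reduction in the treatment mean, as a percentage of the control mean."""
    return 100.0 * (control_mean - treatment_mean) / control_mean


# Condition means reported for experiment 1.
reduction = percent_reduction(control_mean=1.91, treatment_mean=1.31)
print(f"Partisan bias reduced by {reduction:.0f}%")
```

The same calculation applied to the experiment 2 means (1.72 and 1.26) gives the 27% decrease reported there.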

In study 1 (n = 462), accuracy incentives improved truth discernment and decreased partisan bias in accuracy judgements. Study 2 (n = 998) replicated these findings, but also found that incentives to determine which articles would be liked by their political in-group if shared on social media decreased truth discernment, even when paired with the accuracy incentive (the ‘mixed’ condition). Study 3 (n = 921) further replicated these findings and examined how effect sizes differed with and without source cues (S, source; N, no source). Study 4 (n = 983) also replicated these findings and found that a scalable, non-financial accuracy motivation intervention was also able to increase belief in politically incongruent true news with a smaller effect size. Means for each condition are shown in the figure, and error bars represent 95% confidence intervals. The y axis of the graphs on the left represents truth discernment (or the number of true claims rated as true minus the number of false claims rated as true). The y axis of the graphs on the right represents partisan bias (or the number of politically congruent claims rated as true minus the number of politically incongruent claims rated as true).

Additional analysis (for extended results, see Supplementary Appendix 1) found that the accuracy incentives condition increased the percentage of politically incongruent true headlines rated as true (M = 51.53%, 95% CI 47.36–55.70) as compared with the control condition (M = 38.25%, 95% CI 34.41–42.08), P < 0.001, d = 0.43. Incentives did not statistically significantly impact judgements of politically congruent true news, politically incongruent false news or politically congruent false news when controlling for multiple comparisons with Tukey post-hoc tests (ps >0.444). Thus, the effects of incentives were mainly driven by an increased belief in true news from the opposing party.

Finally, we examined whether the incentives influenced sharing discernment, or the number of true headlines people intended to share minus the number of false headlines they intended to share. Interestingly, even though sharing higher-quality articles was not explicitly incentivized, sharing discernment was slightly higher in the accuracy incentive condition (M = 0.38, 95% CI 0.28–0.48) as compared with the control condition (M = 0.22, 95% CI 0.15–0.30), t(424.8) = 2.49, P = 0.013, d = 0.23.
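The fractional degrees of freedom reported in this experiment are consistent with an unequal-variance (Welch) t-test, although the test is not named in this section. The sketch below shows, under that assumption, how the t statistic, the Welch–Satterthwaite degrees of freedom and a pooled-standard-deviation Cohen's d can be obtained. The scores are invented for illustration and are not study data.

```python
import math
from statistics import mean, variance
from typing import Sequence, Tuple


def welch_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    na, nb = len(a), len(b)
    va, vb = variance(a) / na, variance(b) / nb  # squared standard errors
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (na - 1) + vb ** 2 / (nb - 1))
    return t, df


def cohens_d(a: Sequence[float], b: Sequence[float]) -> float:
    """Standardized mean difference using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)


# Hypothetical discernment scores for two small groups (illustration only).
incentive = [4, 3, 5, 2, 4, 3]
control = [2, 3, 1, 4, 2, 2]

t, df = welch_t(incentive, control)
d = cohens_d(incentive, control)
print(f"t({df:.2f}) = {t:.2f}, d = {d:.2f}")
```

The Welch degrees of freedom always lie between the smaller group size minus one and the total sample size minus two, which is why they are generally not whole numbers.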

Experiment 2: social motivations

In experiment 2, we aimed to replicate and extend the results of experiment 1 by examining whether social or partisan motivations to correctly identify articles that would be liked by one’s political in-group might interfere with accuracy motives. We recruited another politically balanced sample of 998 US adults (Methods). In addition to the accuracy incentives and control condition, we added a ‘partisan sharing’ condition, whereby participants were given a financial incentive to correctly identify articles that would appeal to members of their own political party. This condition was meant to mirror the incentive structure of social media whereby people try to share content that will be liked by their friends and followers. Specifically, participants were told that they would receive a bonus payment of up to one dollar based on how accurately they identified articles that would be liked by members of their political party if they shared them on social media. Immediately after answering this question, participants were asked about the accuracy of the article and how likely they would be to share it. To examine how partisan identity goals might interfere with accuracy goals, we added a final condition, called the mixed motivation condition, in which participants received a financial incentive of up to one dollar to identify articles that would be liked by their in-group, followed by an additional financial incentive to accurately identify true and false articles.

We first examined how these motivations influenced truth discernment. Replicating the results of experiment 1, there was a significant main effect of the accuracy incentives condition on truth discernment, F(1, 994) = 29.14, P < 0.001, η2G = 0.03, a significant main effect of the partisan sharing manipulation on truth discernment, F(1, 994) = 7.53, P = 0.006, η2G = 0.01, but no significant interaction between the accuracy and the partisan sharing manipulation (P = 0.237). Tukey honestly significant difference (HSD) post-hoc tests indicated that truth discernment was higher in the accuracy incentives condition (M = 3.01, 95% CI 2.69–3.32) compared with the control condition (M = 2.02, 95% CI 1.74–2.30), P < 0.001, d = 0.41. Truth discernment was also higher in the accuracy incentives condition compared with the partisan sharing condition (M = 1.78, 95% CI 1.49–2.07), P < 0.001, d = 0.50, and the mixed condition (M = 2.42, 95% CI 2.11–2.71), P = 0.029, d = 0.27. However, the mixed condition did not differ from the control condition (P = 0.676), and the partisan sharing condition also did not significantly differ from the control condition (P = 0.241). Taken together, these results suggest that accuracy motivations increase truth discernment, but motivations to share articles that appeal to one’s political in-group can decrease truth discernment.

We then examined how these motives influenced partisan bias. Replicating the results from experiment 1, there was a significant main effect of accuracy incentives on partisan bias, F(1, 994) = 9.01, P = 0.003, η2G = 0.01, but no effect of the partisan sharing manipulation, F(1, 994) = 0.60, P = 0.441, η2G = 0.00, and no interaction between the accuracy and the partisan sharing manipulation, F(1, 994) = 0.27, P = 0.606, η2G = 0.00. Post-hoc tests indicated that there was a non-significant difference in partisan bias between the accuracy incentives condition (M = 1.26, 95% CI 1.01–1.51) and the control condition (M = 1.72, 95% CI 1.47–1.98), P = 0.062, d = 0.23, a 27% decrease in partisan bias. There was a significant difference between the accuracy incentives condition and the partisan sharing condition (M = 1.76, 95% CI 1.48–2.03), P = 0.040, d = 0.24. No other post-hoc tests yielded significant differences (ps >0.182).

Follow-up analysis (Supplementary Appendix 1) once again indicated that the incentives primarily impacted the percentage of politically incongruent true headlines rated as accurate (M = 55.61%, 95% CI 51.68–59.54) when compared with the control condition (M = 37.65%, 95% CI 33.83–41.46), P < 0.001, d = 0.58. The incentives again did not impact congruent true news, incongruent false news or congruent false news (ps >0.148).

There was no significant effect of accuracy incentives on sharing discernment (P = 0.996), diverging from the results of study 1. However, follow-up analysis (Supplementary Appendix 1) indicated that those in the partisan sharing condition shared more politically congruent news (either true or false) (M = 1.98, 95% CI 1.90–2.05) as compared with the control condition (M = 1.80, 95% CI 1.74–1.87), P = 0.015, d = 0.21. Additionally, those in the mixed condition (M = 2.02, 95% CI 1.94–2.10) shared more politically congruent news (true or false) as compared with the control condition, P < 0.001, d = 0.26. Thus, prompting participants to identify whether an article will be liked by their political allies—whether or not they are also incentivized to be accurate—appears to increase intentions to share both true and false news that appeals to one’s own partisan identity.

Experiment 3: accuracy incentives and source cues

In experiment 3, we sought to replicate our prior findings in a nationally representative sample in the United States. We recruited a sample of 921 US participants that was quota matched to the national distribution on age, gender, ethnicity and political party. We also tested a potential psychological process underlying the effects of accuracy incentives. As prior work has found strong effects of source cues17 on judgements of news headlines, we suspected that people were responding to source cues when making judgements about news. As true news often contains more recognizable sources with partisan connotations (for example, ‘nytimes.com’ as opposed to the fake news website ‘yournewswire.com’)59, this may explain why incentives only impacted judgements of true news in experiments 1 and 2. To test this possibility, we examined the effect of incentives with and without source cues (for example, a URL name such as ‘foxnews.com’) present beside the headlines (for more details, see Methods). Because we wanted to compare the effects of accuracy incentives with and without sources, this study had four conditions: accuracy incentives (with sources), control (with sources), accuracy incentives (without sources) and control (without sources).

Replicating the main results from experiments 1 and 2, the accuracy incentives condition significantly improved truth discernment, F(1, 917) = 4.44, P = 0.035, η2G = 0.01, reduced partisan bias, F(1, 917) = 18.21, P < 0.001, η2G = 0.02, and increased the number of politically incongruent true articles rated as accurate, F(1, 917) = 20.94, P < 0.001, η2G = 0.02. Thus, accuracy incentives appear to increase accuracy and reduce partisan bias in a large representative sample, suggesting that the results of these experiments probably generalize to the US population as a whole.

Although effect sizes appeared to be descriptively smaller when sources were removed from the headlines (for details, see Fig. 1 and Supplementary Appendix 1), we did not find significant interactions between accuracy incentives and the presence or absence of source cues for the main outcome variables. However, this study was not well powered to detect such interactions, since interaction effects can require up to 16 times the sample size needed for main effects60,61 (for power analysis, see Methods). Additional analysis using Bayes factors62 reported in Supplementary Appendix 1 did not find strong evidence for the absence of interaction effects. As in experiment 2, there was once again no significant impact of accuracy incentives on sharing discernment (P = 0.906).
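One common argument for the ‘up to 16 times’ figure can be sketched numerically. It assumes a balanced 2 × 2 between-subjects design with equal variance in every cell and an interaction half as large as the main effect; these assumptions are illustrative and are not stated in this section, and the function names are hypothetical.

```python
import math


def se_main_effect(sigma: float, n: int) -> float:
    """SE of a difference between two means, each based on n / 2 participants."""
    return sigma * math.sqrt(1 / (n / 2) + 1 / (n / 2))


def se_interaction(sigma: float, n: int) -> float:
    """SE of a difference of differences across four cells of n / 4 participants."""
    return sigma * math.sqrt(4 / (n / 4))


n = 921  # total sample size of experiment 3
se_ratio = se_interaction(1.0, n) / se_main_effect(1.0, n)

# Assumption: the interaction is half the size of the main effect.
relative_effect_size = 0.5

# The z-score scales with effect / SE and the SE shrinks with sqrt(n), so the
# required sample size grows with the square of (SE ratio / relative effect).
sample_size_multiplier = (se_ratio / relative_effect_size) ** 2
print(f"SE ratio = {se_ratio:.1f}, sample size multiplier = {sample_size_multiplier:.0f}")
```

Under these assumptions the interaction estimate has twice the standard error of the main effect, so matching the precision of a main-effect test takes roughly 16 times as many participants.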

Experiment 4: the effect of a non-financial intervention

In experiment 4, we replicated the accuracy incentive and control condition in another politically balanced sample of 983 US adults, but also added a non-financial accuracy motivation condition. This non-financial accuracy motivation condition was designed to address alternative interpretations of our earlier findings. One mundane interpretation is that participants are merely saying what they believe fact-checkers think is true, rather than answering in accordance with their true beliefs. However, this non-financial intervention does not incentivize people to answer in ways that do not align with their actual beliefs. Additionally, because financial incentives are more difficult to scale to real-world contexts, the non-financial accuracy motivation condition speaks to the generalizability of these results to other, more scalable ways of motivating accuracy.

In the non-financial accuracy condition, people read a brief text about how most people value accuracy and how people think sharing inaccurate content hurts their reputation63 (see intervention text in Supplementary Appendix 2). People were also told to be as accurate as possible and that they would receive feedback on how accurate they were at the end of the study.

Our main pre-registered hypothesis was that this non-financial accuracy motivation condition would increase belief in politically incongruent true news relative to the control condition. An analysis of variance (ANOVA) found a main effect of the experimental conditions on the amount of politically incongruent true news rated as true, F(2, 980) = 17.53, P < 0.001, η2G = 0.04. Supporting our main pre-registered hypothesis, the non-financial accuracy motivation condition increased the percentage of politically incongruent true news stories rated as true (M = 43.97, 95% CI 40.59–47.34) as compared with the control condition (M = 35.19, 95% CI 31.93–38.45), P < 0.001, d = 0.29. Replicating studies 1–3, the accuracy incentive condition also increased perceived accuracy of politically incongruent true news (M = 49.15, 95% CI 45.74–52.55), P < 0.001, d = 0.45. The accuracy incentive and non-financial accuracy motivation condition were not significantly different from one another (P = 0.083, d = 0.17), though this may be because we did not have enough power to detect a difference. In short, the non-financial accuracy motivation manipulation was also effective at increasing belief in politically incongruent true news, with an effect about 63% as large as the effect of the financial incentive.
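The ‘about 63% as large’ comparison follows from the three condition means reported above. A minimal sketch of the arithmetic, with a hypothetical helper name:

```python
def relative_effect(control: float, weaker: float, stronger: float) -> float:
    """Size of the weaker intervention's effect as a share of the stronger one's."""
    return (weaker - control) / (stronger - control)


# Percentage of politically incongruent true headlines rated as true (experiment 4).
control_mean = 35.19
non_financial_mean = 43.97
financial_mean = 49.15

share = relative_effect(control_mean, non_financial_mean, financial_mean)
print(f"Non-financial effect is {100 * share:.0f}% of the financial effect")
```

The ratio of the two standardized effect sizes (0.29 / 0.45) gives a similar figure.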

Since we expected the non-financial accuracy motivation condition to have a smaller effect than the accuracy incentives condition, we did not pre-register hypotheses for truth discernment and partisan bias, as we did not anticipate having enough power to detect effects for these outcome variables. Indeed, the non-financial accuracy motivation condition did not significantly increase truth discernment (P = 0.221) or reduce partisan bias (P = 0.309). However, replicating studies 1–3, accuracy incentives once again improved truth discernment (P = 0.001, d = 0.28) and reduced partisan bias (P = 0.003, d = 0.25). The effect of the non-financial accuracy motivation condition was 47% as large as the effect of the accuracy incentive for truth discernment and 45% as large for partisan bias. There was also no overall effect of the experimental conditions on sharing discernment (P = 0.689). For extended results, see Supplementary Appendix 1.

Together, these results suggest that a subtler (and also more scalable) accuracy motivation intervention that does not employ financial incentives is effective at increasing the perceived accuracy of true news from the opposing party, but has a smaller effect size than the stronger financial incentive intervention.

Integrative data analysis

To generate more precise estimates of our effects, we pooled data from all four studies to conduct an integrative data analysis (IDA)64. For the IDA, we used only the 16 news headlines that were used in all four studies, and included only the accuracy incentives and control conditions that were used in all four studies.

We did not have any studies in the file drawer on this topic, meaning that our estimate was not influenced by publication bias.

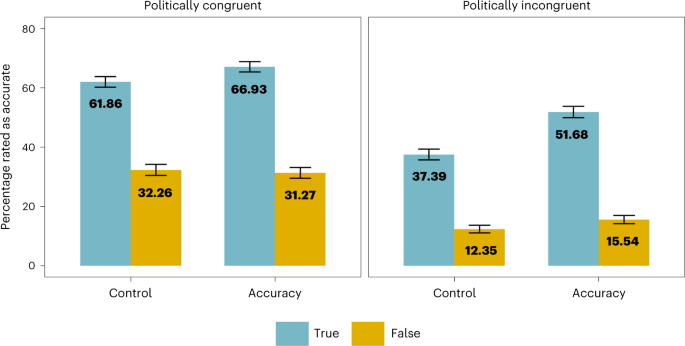

Incentives had the largest positive effect on the perceived accuracy of politically incongruent true news, P < 0.001, d = 0.47; and a smaller positive effect on the perceived accuracy of politically congruent true news, P = 0.001, d = 0.17. Incentives did not significantly affect belief in politically incongruent false news, P = 0.163, d = 0.13, or belief in politically congruent false news, P = 0.993, d = −0.04 (Fig. 2), after adjusting for multiple comparisons with Tukey post-hoc tests. Analysis for each individual item revealed that incentives significantly increased belief in all true items, but they did not significantly decrease belief in any false items (though they significantly increased belief in one false item). More details are reported in Supplementary Appendix 1, and an analysis for each individual headline is reported in Supplementary Appendix 3. Additional analysis using Bayes factors reported in Supplementary Appendix 4 found strong evidence that incentives impacted belief in both politically congruent and politically incongruent true news, but found inconsistent evidence that they affected belief in false news.

IDA results (with data from all four studies, n = 2,092) broken up by headline type. Incentives had the largest effect on belief in politically incongruent true news (d = 0.47), and a smaller effect on politically congruent true news (d = 0.17). Incentives did not have a significant effect on politically congruent or politically incongruent false news when controlling for multiple comparisons. Headline-level analysis revealed that incentives increased belief in all eight true items, but did not decrease belief in a single false item (for item-level analysis, see Supplementary Appendix 3). Means for each condition are shown in the figure, and error bars represent 95% confidence intervals.

While effects on sharing discernment were inconsistent across studies, the IDA found that there was a small positive effect of the incentive on sharing discernment, t(2020.20) = 2.19, P = 0.029, d = 0.10. Finally, people spent slightly more time on each headline in the accuracy incentives condition, t(818.53) = 2.34, P = 0.019, d = 0.16, indicating that incentives may have led people to put more effort into their responses.

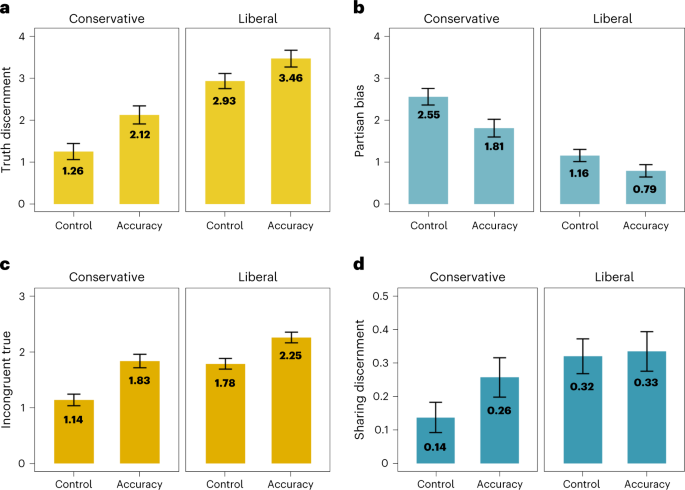

Replicating prior work26,27,28,29,30,31, conservatives were worse at discerning between true and false headlines than liberals. Conservatives answered about 9.26 (out of 16) questions correctly when not incentivized to be accurate, and liberals answered 10.93 questions out of 16 correctly when unincentivized—a 1.67-point difference, 95% CI 1.41–1.94, t(1035.69) = 12.53, P < 0.001, d = 0.77. However, when conservatives were incentivized to be accurate, they answered 10.12 questions correctly, making the gap between incentivized conservatives and unincentivized liberals 0.81 points, 95% CI 0.53–1.09, t(951.91) = 5.65, P < 0.001, d = 0.35. In other words, paying conservatives less than a dollar to correctly identify news headlines as true or false reduced the gap in performance between conservatives and (unincentivized) liberals by 51.50%. Incentives also considerably reduced the gap between conservatives and liberals in terms of partisan bias, sharing discernment and belief in politically incongruent true news. More detail is reported in Supplementary Appendix 1 and plotted visually in Fig. 3. Altogether, these results suggest that a substantial portion of US conservatives’ tendency to believe and share less accurate news reflects a lack of motivation to be accurate rather than lack of knowledge alone.
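The gap-closure percentage can be reproduced from the three means given in this paragraph. The sketch below uses a hypothetical helper name; the input values are the reported numbers of correct answers out of 16.

```python
def gap_closed(reference: float, baseline: float, treated: float) -> float:
    """Percentage of the reference-baseline gap removed by the treatment."""
    original_gap = reference - baseline
    remaining_gap = reference - treated
    return 100.0 * (original_gap - remaining_gap) / original_gap


unincentivized_liberals = 10.93
unincentivized_conservatives = 9.26
incentivized_conservatives = 10.12

closed = gap_closed(
    unincentivized_liberals,
    unincentivized_conservatives,
    incentivized_conservatives,
)
print(f"Gap closed: {closed:.1f}%")
```

Note that the comparison group is unincentivized liberals throughout, so the figure describes how far incentives move conservatives towards the liberal baseline, not towards incentivized liberals.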

a, Conservatives were worse at truth discernment as compared with liberals. b–d, They also showed more partisan bias (b), less belief in politically incongruent true news (c) and worse sharing discernment (d). However, incentives consistently closed the gap between conservatives and (unincentivized) liberals for all of these outcome variables, suggesting that conservatives’ greater tendency to believe in and share (mis)information may in part reflect a lack of motivation to be accurate (instead of lack of knowledge or ability alone). The data shown are the pooled data across all four studies (n = 2,092). Means for conservatives and liberals are shown in the figures, and all error bars represent 95% confidence intervals. The y axis for each graph represents (a) truth discernment, (b) partisan bias, (c) belief in politically incongruent true news and (d) sharing discernment.

Importantly, the incentives improved truth discernment for both liberals, d = 0.23, P < 0.001, and conservatives, d = 0.40, P < 0.001 (for table of effect sizes broken down by political affiliation, see Supplementary Appendix 5). Descriptively, the effect sizes for our intervention were larger for conservatives than liberals, which diverges from other misinformation interventions that tend to show larger effect sizes for liberals65,66. Furthermore, political ideology (liberal versus conservative) was a significant moderator of belief in incongruent true news, P = 0.033, and partisan bias, P = 0.029 (though these moderation effects were not significant for truth discernment, P = 0.095, or sharing discernment, P = 0.061), such that the effects of incentives appeared to be larger for conservatives than liberals. The effect of the incentives on truth discernment was not significantly moderated by cognitive reflection, political knowledge or affective polarization (ps > 0.182). However, even though we had a large sample, we were still slightly underpowered to detect these interaction effects (see power analysis in Methods), and supplemental Bayesian analyses also did not find strong evidence for the significant moderation effects (Supplementary Appendix 11), so these interaction effects should be interpreted with caution.

Relative importance of accuracy incentives

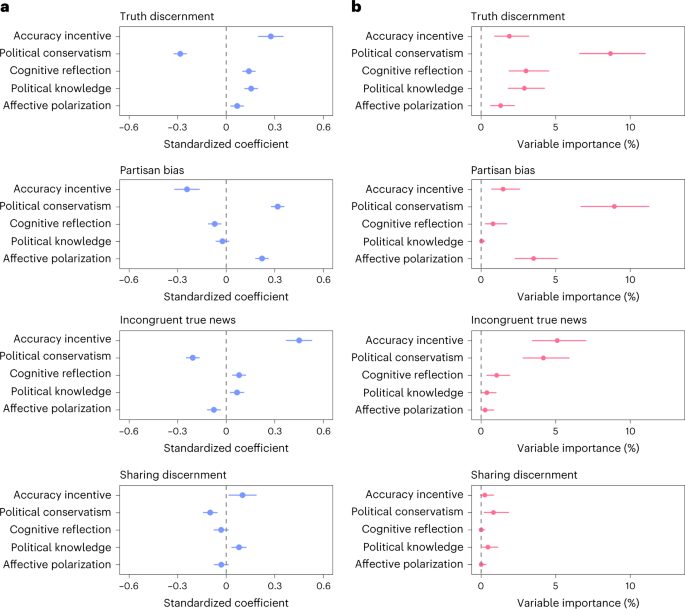

In each experiment, we measured other individual difference variables known to be predictive of truth discernment, such as cognitive reflection, political knowledge and partisan animosity, as well as demographic variables, such as age, education and gender. We ran a multiple regression analysis on our IDA with all of these variables included in the model (Fig. 4a). To compare the relative importance of each of these predictors, we also ran a relative importance analysis using the ‘lmg’ method67, which calculates the relative contribution of each predictor to the R2 (Fig. 4b). Full models and relative importance analyses are in Supplementary Appendices 6 and 7.
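To give a sense of what the ‘lmg’ decomposition does, the sketch below averages each predictor's sequential gain in R2 over every possible order of entry into the regression. It is a didactic pure-Python version with invented toy data and hypothetical predictor names; it is not the authors' analysis code, and established statistical packages should be preferred for real analyses.

```python
from itertools import permutations
from statistics import mean


def r_squared(y, columns):
    """R^2 of an OLS fit of y on the given predictor columns plus an intercept."""
    if not columns:
        return 0.0
    n, k = len(y), len(columns)
    y_mean = mean(y)
    yc = [v - y_mean for v in y]  # centring removes the intercept
    xc = []
    for col in columns:
        col_mean = mean(col)
        xc.append([v - col_mean for v in col])
    # Normal equations (X'X) b = X'y stored as an augmented matrix.
    a = [[sum(xc[i][t] * xc[j][t] for t in range(n)) for j in range(k)]
         + [sum(xc[i][t] * yc[t] for t in range(n))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for i in range(k):
        pivot = max(range(i, k), key=lambda r: abs(a[r][i]))
        a[i], a[pivot] = a[pivot], a[i]
        for r in range(k):
            if r != i:
                factor = a[r][i] / a[i][i]
                a[r] = [u - factor * v for u, v in zip(a[r], a[i])]
    b = [a[i][k] / a[i][i] for i in range(k)]
    fitted = [sum(b[j] * xc[j][t] for j in range(k)) for t in range(n)]
    ss_res = sum((yc[t] - fitted[t]) ** 2 for t in range(n))
    ss_tot = sum(v ** 2 for v in yc)
    return 1.0 - ss_res / ss_tot


def lmg(y, predictors):
    """Average each predictor's sequential R^2 gain over all entry orders."""
    orders = list(permutations(predictors))
    shares = {name: 0.0 for name in predictors}
    for order in orders:
        included, previous = [], 0.0
        for name in order:
            included.append(name)
            current = r_squared(y, [predictors[m] for m in included])
            shares[name] += (current - previous) / len(orders)
            previous = current
    return shares


# Invented toy data (illustration only, not study data).
toy_predictors = {
    "incentive": [0, 1, 0, 1, 0, 1, 0, 1],
    "conservatism": [1, 2, 3, 4, 5, 6, 7, 2],
    "age": [23, 35, 41, 29, 52, 60, 33, 47],
}
toy_outcome = [2, 4, 1, 3, 0, 3, 1, 5]

shares = lmg(toy_outcome, toy_predictors)
full_r2 = r_squared(toy_outcome, list(toy_predictors.values()))
for name, share in shares.items():
    print(f"{name}: {100 * share / full_r2:.1f}% of R^2")
```

Because the shares are averaged over all orderings, they sum to the R2 of the full model, which is what allows each predictor's contribution to be expressed as a percentage of explained variance. The number of orderings grows factorially with the number of predictors, so this brute-force version is practical only for small models.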

a, Multiple regression results for the main outcome variables: truth discernment, partisan bias, belief in incongruent true news, and sharing discernment. Standardized beta coefficients are plotted for ease of interpretation. b, Variable importance estimates (lmg values) with bootstrapped confidence intervals are shown to examine the estimated percentage contribution of each predictor to the R2. The data shown are the pooled data across all four studies (n = 2,092). All error bars represent 95% confidence intervals.

Political conservatism and accuracy incentives were among the most important predictors for many of the key outcome variables, although confidence intervals were large and overlapping for the relative importance analysis (Supplementary Appendix 4). While prominent accounts claim that partisanship and politically motivated cognition play a limited role in the belief and sharing of misinformation as compared with other factors (such as cognitive reflection or inattention)10,68, our results indicate that motivation and partisan identity or ideology are very important factors. Our data point to the importance of broad theoretical accounts of (mis)information belief and sharing that integrate motivation and partisan identity with other variables2,10,11,24,69. Indeed, an investigation using cognitive modelling found that a broad model of misinformation belief that included multiple factors (such as partisan identity, cognitive reflection and more) performed better at predicting acceptance of misinformation than other models that focused exclusively on cognitive or emotional factors70.