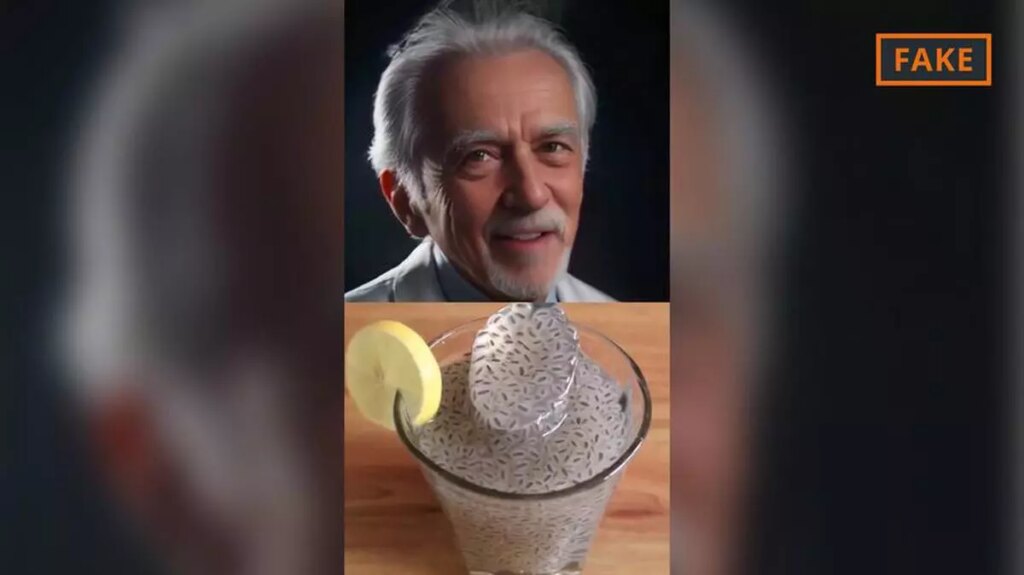

Videos posted on social media often feature what appear to be doctors wearing white coats and stethoscopes around their necks, offering advice on natural remedies and tips for whitening teeth. In many cases, however, these are not real doctors but bots generated by artificial intelligence (AI) that share medical advice with hundreds of thousands of followers. Not everything they say is true.

Can chia seeds help treat diabetes?

Claim: One of the AI-generated bots on Facebook claimed: “Chia seeds can help control diabetes.” The video received over 40,000 likes, was shared over 18,000 times, and generated over 2.1 million clicks.

Fact check: False.

Chia seeds are trending thanks to the many beneficial active substances they contain, including unsaturated fatty acids, dietary fiber, essential amino acids, and vitamins. A 2021 US report found that chia seeds have a positive impact on consumer health. Participants with type 2 diabetes and hypertension were found to have significantly lower blood pressure after consuming a certain amount of chia seeds over several weeks. Another study this year confirmed that chia seeds have anti-diabetic and anti-inflammatory properties.

Therefore, chia seeds may have health benefits for people suffering from diabetes. “But no one said anything about treatment,” says Andreas Fritsche, a diabetes specialist at Tübingen University Hospital. He explained that there is no scientific evidence that chia seeds can treat or completely control diabetes.

The video not only spread disinformation but also depicted a fake doctor. Social media is full of fake doctors like this who share what appear to be health hacks. In some cases, these artificially generated “doctors” also share home remedies and beauty tips for whitening teeth or promoting beard growth.

Many of these videos are in Hindi, even though the accounts have English usernames. A 2021 Canadian study found that India became a hotspot for misinformation on health issues during the COVID-19 pandemic.

According to the data, even more disinformation about the pandemic circulated in India than in countries such as the US, Brazil and Spain. The study argues that this may be due to India’s high internet penetration, increased social media consumption and, in some cases, users’ lower digital literacy.

AI-generated doctors typically appear trustworthy, wearing white coats or scrubs with stethoscopes around their necks. As Stephen Gilbert, professor of medical device regulatory science at the Dresden University of Technology, explained, an AI impersonating a doctor can be very misleading.

His research areas include medical software based on artificial intelligence. “This is to convey the authority of doctors, who usually play an authoritative role in almost every society,” he told DW. That role includes prescribing medications, making diagnoses, and even making decisions about life and death. “These are cases of intentional misrepresentation for a specific purpose.”

According to Gilbert, these purposes may include the sale of certain products or services, such as future medical consultations.

Can home remedies cure brain diseases?

Claim: On Instagram, one artificially generated doctor claimed in a video: “Crushing 7 almonds, 10 grams of sugar candy and 10 grams of fennel and drinking it every night with hot milk for at least 40 days will cure any brain disease.” The account has over 200,000 followers and its videos have been viewed over 86,000 times.

Fact check: False.

This video claims to provide concrete steps to treat all brain diseases. However, according to Frank Erbguth, chairman of the German Brain Foundation, this claim is completely false.

He explained that there is no evidence that this recipe has any beneficial effects on brain diseases. Searching online for this particular combination of ingredients yielded no results. The German Brain Foundation advises people to seek immediate medical attention if they develop symptoms such as signs of paralysis or speech problems.

Like many other videos by similar accounts, this one features an AI-generated figure wearing a white coat. Gilbert explained that these videos are easy to recognize as fake because they use static images of people purporting to be doctors, with minimal facial expressions and only their mouths moving. But he also warned of possible negative effects in the future.

“An area of great concern, and where this is almost certainly heading, is linking all of these processes together so that responses are generated by AI in real time and delivered through an AI-generated voice,” he said.

Beyond video manipulation, artificial intelligence poses risks in other ways, such as deepfakes in medical diagnosis. In 2019, researchers working on a study in Israel showed that it is possible to generate CT scans containing false images, adding tumors to the scans or removing them.

A 2021 study generated realistic and convincing electrocardiograms depicting fake heartbeats, with similar results. Chatbots pose another risk, as their answers can sound reasonable even when they are wrong. “People ask questions, [and the bots] seem very knowledgeable. That’s a very dangerous scenario for patients,” Gilbert said.

Despite the risks, AI has played an increasingly important role in healthcare in recent years. It helps doctors analyze X-ray and ultrasound images and supports diagnosis and treatment.

“In many countries, medical consultation is very expensive or not available at all,” Gilbert said. “Therefore, for many people in many countries, having access to information can be of great benefit.”

However, questions remain as to whether the information provided is reliable and accurate. To check this, users searching online for information about symptoms can watch videos by certified practitioners or, if available, read scientific studies to cross-check the information.

Gilbert added that it’s a good idea to investigate which medical team is behind a particular website, app or account. If this is not clear, he concluded, it is best to remain skeptical and consider the source unreliable.