Initial results from that study show that the company’s platform plays a key role in funneling users to partisan information that they are likely to agree with. However, the results cast doubt on the assumption that strategies Meta could use to curb virality and engagement on its social networks would significantly affect people’s political beliefs.

“Algorithms are extremely influential in shaping what people see on platforms and their experiences on platforms,” Joshua Tucker, co-director of the Center for Social Media and Politics at New York University and one of the leaders of the research project, said in an interview.

“Despite the fact that we know that there is a big impact on people’s experiences on the platform, there is little impact on changes in people’s attitudes towards politics, or even in people’s self-reported political participation.”

The first four studies, published Thursday in the journals Science and Nature, are the result of a unique partnership between university researchers and Meta analysts to study how social media influences political polarization and people’s understanding of and opinions about news, government and democracy. The researchers, who relied on Meta for their data and their ability to run experiments, analyzed these issues in the run-up to the 2020 election. The studies were peer-reviewed before publication, a standard procedure in science in which papers are sent to other experts in the field to assess the merits of the research.

As part of the project, researchers changed the feeds of thousands of people who used Facebook and Instagram in the fall of 2020, exposing them to information different from what they would normally receive, to see whether that could change their political beliefs, knowledge or polarization. The researchers generally concluded that such changes had little effect.

The collaboration will present results from more than a dozen studies and will also examine data collected after the Jan. 6, 2021, attack on the U.S. Capitol, Tucker said.

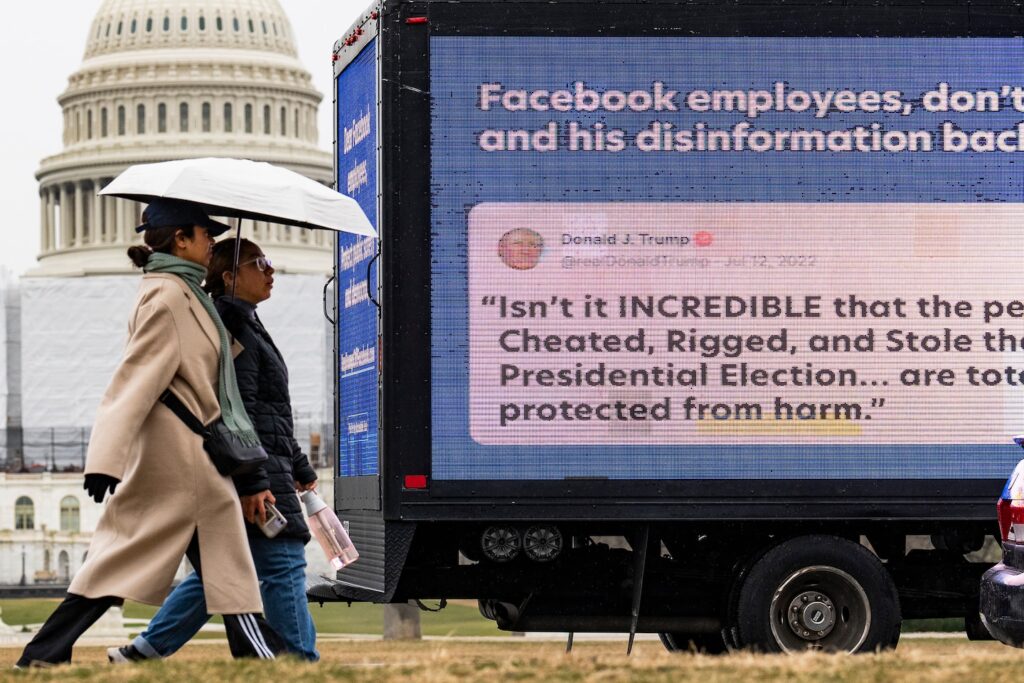

The study comes amid a years-long battle among advocates, lawmakers and industry leaders over how much tech companies should do to combat harmful, misleading and controversial content on their social networks. The heated debate has prompted regulators to propose new rules for social media platforms that would require them to be more transparent about their algorithms and hold companies more accountable for the content they promote.

The findings are likely to bolster social media companies’ long-held claims that algorithms are not the cause of political polarization or upheaval. Meta has said that rising political polarization and declining support for civic institutions began long before the rise of social media.

“The experimental results join a growing body of research showing that there is little evidence that the primary features of Meta’s platforms alone cause harmful ‘affective’ polarization or meaningfully influence these outcomes,” Nick Clegg, Meta’s president of global affairs, said in a blog post about the research on Thursday.

But critics of the tech companies and some researchers who saw the findings before they were published warn that the results do not absolve tech companies of the role they play in fueling divisiveness, political turmoil or users’ belief in conspiracies. Some advocates also say the research should not give social media platforms cover to do less to quell viral misinformation.

“Research supported by Meta, which looks piecemeal at narrow sample periods, should not be an excuse to allow lies to spread,” said Nora Benavidez, senior advisor at Free Press, a digital civil rights group that has pushed Meta and other companies to do more to combat election-related misinformation. “Social media platforms should step up their efforts ahead of the election, instead of concocting new schemes to avoid responsibility.”

“It’s a bit of a stretch to say that this shows that Facebook isn’t the big problem, or that social media platforms aren’t the problem,” said Michael W. Wagner, a professor in the School of Journalism and Mass Communication at the University of Wisconsin-Madison, who spent hundreds of hours attending meetings and interviewing scientists as an independent observer of the collaboration. “This is good scientific evidence that there is not just one problem that can be easily solved.”

In one experiment, researchers studied the effects of switching users’ feeds on Facebook and Instagram to display content chronologically, rather than as ranked by Meta’s algorithm. Critics such as Facebook whistleblower Frances Haugen have argued that Meta’s algorithm amplifies and rewards hateful, divisive and false posts by elevating them to the top of users’ feeds, and that switching to a chronological feed would make the content less divisive. Facebook offers users the ability to view a mostly chronological feed.

The researchers found that chronological timelines were decidedly less engaging. Users whose timelines were changed spent significantly less time on the platform. These users also saw more political stories and more content flagged as untrustworthy.

However, a survey given to users to measure their political beliefs found that the chronological feed had little effect on levels of polarization, political knowledge or offline political behavior. This finding is consistent with some of Meta’s own internal research, which has suggested that users may see higher-quality content in feeds determined by the company’s algorithm than in feeds governed simply by when something was posted, The Washington Post has reported.

Haugen, a former Facebook product manager who disclosed thousands of internal Facebook documents to the Securities and Exchange Commission in 2021, criticized the timing of the experiment in an interview. She argued that by the time the researchers evaluated the chronological approach in fall 2020, thousands of users had already joined large groups that would have flooded their feeds with potentially problematic content. She noted that in the months leading up to the 2020 election, Meta had already put in place its most aggressive election protection measures to combat the most extreme posts. The company rescinded many of those measures after the election, she said.

In another experiment, researchers tested the effectiveness of limiting the visibility of viral content in users’ feeds. Researchers found that when people couldn’t see what their friends were reposting on Facebook, those users saw far less political news. The study found that these users clicked on and interacted with less content and were less knowledgeable about news, but their levels of political polarization and political attitudes remained the same.

Taken together, the findings released Thursday highlight the complex and fractured state of social media, where liberal and conservative users view and interact with very different news sources. One study analyzed data from more than 200 million U.S. Facebook users and found that users consume news in ideologically segregated ways. The study found that while both liberal and conservative websites are shared by users, domains and URLs preferred by conservatives are more prevalent on Facebook. The study also found that the majority of content rated false by third-party fact checkers was right-leaning.

In another experiment, researchers reduced people’s exposure to content they were likely to agree with and increased their exposure to information from ideologically opposing viewpoints. This is the kind of change that many think will broaden people’s horizons. But the intervention had no measurable impact on people’s political attitudes or beliefs about false claims, the study found.

New York University’s Tucker cautioned against reading too much into the research. “If you did a similar study at another time or in another country, when people were less attentive to politics or weren’t as flooded with information about politics from other sources, you might get different results,” he said.

The study also took place in a world where the cat was already out of the bag in many ways. The three-month switch in how information was presented on the social networks came against a backdrop of years of changes in the way people share and find information.

“This study doesn’t tell us what the world would be like without social media over the past 10 to 15 years,” Tucker said.

Research collaborations between outside academics and technology companies have a checkered history. In Social Science One, an initiative launched in 2018, academics also partnered with Facebook to study the role of social media in elections. But the project was plagued by accusations from researchers that the data Meta promised either never materialized or ultimately proved flawed.

For the current project, Meta approached Tucker and Talia Stroud, director of the Center for Media Engagement at the Moody College of Communication at the University of Texas at Austin, in early 2020 about leading a joint research project, according to an analysis of the project written by Wagner, the University of Wisconsin journalism professor who observed the process. Stroud and Tucker selected 15 other scholars to join the team from among nearly 100 scholars who were affiliated with Social Science One.

Wagner said Meta and the researchers agreed in advance on the research questions, hypotheses and research design. The academics received no compensation from Meta, but the data collection costs were covered by Meta.

Wagner said in an interview that disagreements arose among the researchers as the papers neared publication. At one point, he said, Meta researchers threatened to remove their names from one paper because they felt the language it used to describe the ideological segregation of news sources overstated the effects of social media platforms. “The disagreements were ultimately resolved after several meetings and memo sharing,” Wagner said.

Tucker said the researchers decided to stay on the paper because the disagreements concerned “academic questions” and they agreed with the conclusions.

Wagner said his observations suggest the data and scientific process are sound, but said future collaborations would benefit from greater independence from Meta.

“Meta supports researcher independence, so external academics had control over study design, analysis, and writing. We have taken a number of steps to ensure that this is true,” Meta said in a statement.

Gary King, a political scientist at Harvard University who helped launch Social Science One, said the 2020 election research project should not be a one-time collaboration, because there are other, more subtle experiments that would allow researchers to evaluate Meta’s algorithms.

“Meta deserves great recognition if and only if these studies continue,” he said. “If that’s the end of it, I think we need regulation.”